1. Preface

1.1. Who Should Use This Guide

The EXPRESSCLUSTER X Getting Started Guide is intended for first-time users of EXPRESSCLUSTER. The guide covers topics such as a product overview of EXPRESSCLUSTER, how the cluster system is installed, and a summary of the other available guides. In addition, the latest system requirements and restrictions are described.

1.2. How This Guide is Organized

2. What is a cluster system?: Helps you to understand the overview of the cluster system and EXPRESSCLUSTER.

3. Using EXPRESSCLUSTER: Provides instructions on how to use a cluster system and other related information.

4. Installation requirements for EXPRESSCLUSTER: Provides the latest information that needs to be verified before starting to use EXPRESSCLUSTER.

5. Latest version information: Provides information on the latest version of EXPRESSCLUSTER.

6. Notes and Restrictions: Provides information on known problems and restrictions.

7. Upgrading EXPRESSCLUSTER: Provides instructions on how to upgrade EXPRESSCLUSTER.

1.3. EXPRESSCLUSTER X Documentation Set

The EXPRESSCLUSTER X manuals consist of the following five guides. The title and purpose of each guide are described below:

EXPRESSCLUSTER X Getting Started Guide

This guide is intended for all users. The guide covers topics such as product overview, system requirements, and known problems.

EXPRESSCLUSTER X Installation and Configuration Guide

This guide is intended for system engineers and administrators who want to build, operate, and maintain a cluster system. Instructions for designing, installing, and configuring a cluster system with EXPRESSCLUSTER are covered in this guide.

EXPRESSCLUSTER X Reference Guide

This guide is intended for system administrators. The guide covers topics such as how to operate EXPRESSCLUSTER, the function of each module, and troubleshooting. The guide is a supplement to the Installation and Configuration Guide.

EXPRESSCLUSTER X Maintenance Guide

This guide is intended for administrators and for system administrators who want to build, operate, and maintain EXPRESSCLUSTER-based cluster systems. The guide describes maintenance-related topics for EXPRESSCLUSTER.

EXPRESSCLUSTER X Hardware Feature Guide

This guide is intended for administrators and for system engineers who want to build EXPRESSCLUSTER-based cluster systems. The guide describes features to work with specific hardware, serving as a supplement to the Installation and Configuration Guide.

1.4. Conventions

In this guide, Note, Important, and See also are used as follows:

Note

Used when the information given is important, but not related to data loss and damage to the system and machine.

Important

Used when the information given is necessary to avoid data loss and damage to the system and machine.

See also

Used to describe the location of the information given at the reference destination.

The following conventions are used in this guide.

| Convention | Usage | Example |
|---|---|---|
| Bold | Indicates graphical objects, such as fields, list boxes, menu selections, buttons, labels, icons, etc. | In User Name, type your name. On the File menu, click Open Database. |
| Square brackets within the command line | Indicates that the value specified inside the square brackets can be omitted. | clpstat -s[-h host_name] |
| # | Prompt to indicate that a Linux user has logged on as root user. | # clpcl -s -a |
| Monospace | Indicates path names, commands, system output (message, prompt, etc.), directory, file names, functions and parameters. | |
| bold | Indicates the value that a user actually enters from a command line. | Enter the following: # clpcl -s -a |
| italic | Indicates that users should replace italicized part with values that they are actually working with. | |

In the figures of this guide, this icon represents EXPRESSCLUSTER.


1.5. Contacting NEC

For the latest product information, visit our website below:

2. What is a cluster system?

This chapter gives an overview of the cluster system.

This chapter covers:

2.1. Overview of the cluster system

A key to success in today's computerized world is to provide services without interruption. A single machine going down due to a failure or overload can stop all of the services you provide to customers. This results not only in enormous damage but also in a loss of the credibility you once enjoyed.

A cluster system is a solution to tackle such a disaster. Introducing a cluster system allows you to minimize the period during which operation of your system stops (downtime) or to avoid system downtime through load distribution.

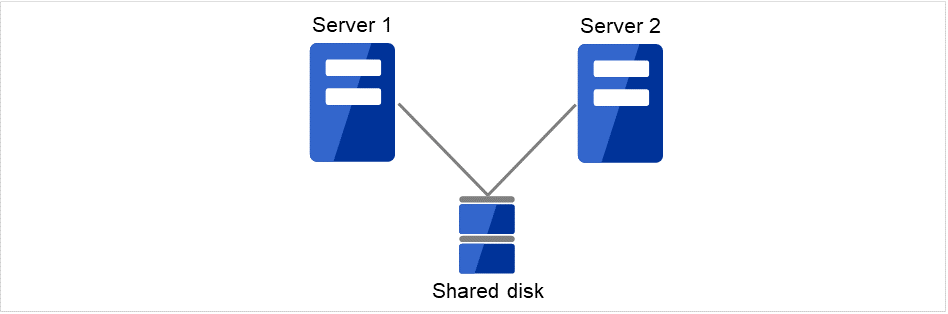

As the word "cluster" suggests, a cluster system aims to increase reliability and performance by clustering a group (or groups) of multiple computers. There are various types of cluster systems, which can be classified into the three types listed below. EXPRESSCLUSTER is categorized as a high availability cluster.

High Availability (HA) Cluster

In this cluster configuration, one server operates as an active server. When the active server fails, a standby server takes over the operation. This cluster configuration aims for high-availability and allows data to be inherited as well. The high availability cluster is available in the shared disk type, data mirror type or remote cluster type.

Load Distribution Cluster

This is a cluster configuration where requests from clients are allocated to load-distribution hosts according to appropriate load distribution rules. This cluster configuration aims for high scalability. Generally, data cannot be taken over. The load distribution cluster is available in a load balance type or parallel database type.

High Performance Computing (HPC) Cluster

This is a cluster configuration where CPUs of all nodes are used to perform a single operation. This cluster configuration aims for high performance but does not provide general versatility. Grid computing, a type of high performance computing that clusters a wider range of nodes and computing clusters, is a hot topic these days.

2.2. High Availability (HA) cluster

To enhance the availability of a system, it is generally considered important to have redundancy for components of the system and to eliminate single points of failure. A "single point of failure" is the weakness of having only one of a given computer component (hardware component) in the system: if that component fails, services are interrupted. The high availability (HA) cluster is a cluster system that minimizes the time during which the system is stopped and increases operational availability by establishing redundancy with multiple servers.

The HA cluster is called for in mission-critical systems where downtime is fatal. The HA cluster can be divided into two types: shared disk type and data mirror type. The explanation for each type is provided below.

2.2.2. Data mirror type

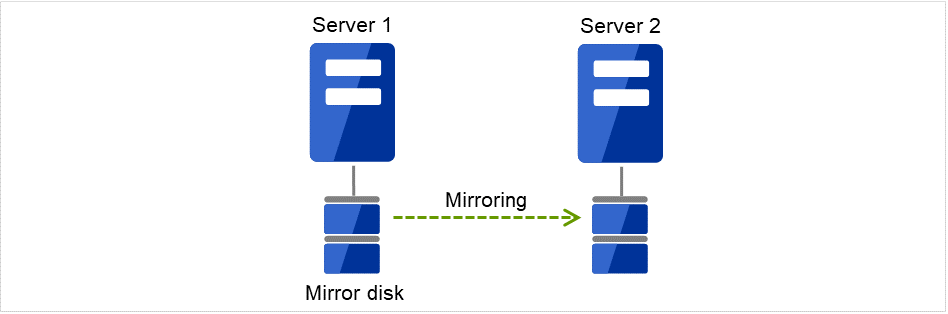

The shared disk type cluster system is good for large-scale systems. However, creating a system of this type can be costly because shared disks are generally expensive. The data mirror type cluster system provides the same functions as the shared disk type at a lower cost by mirroring the server disks.

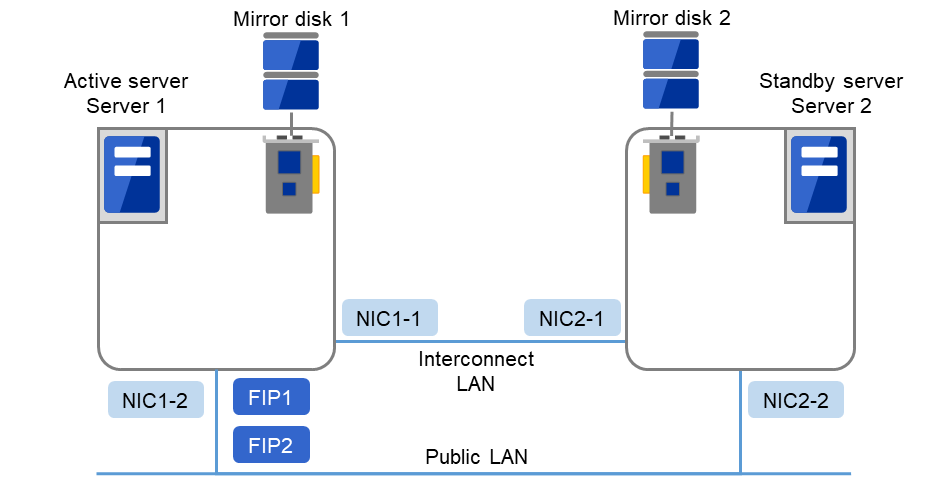

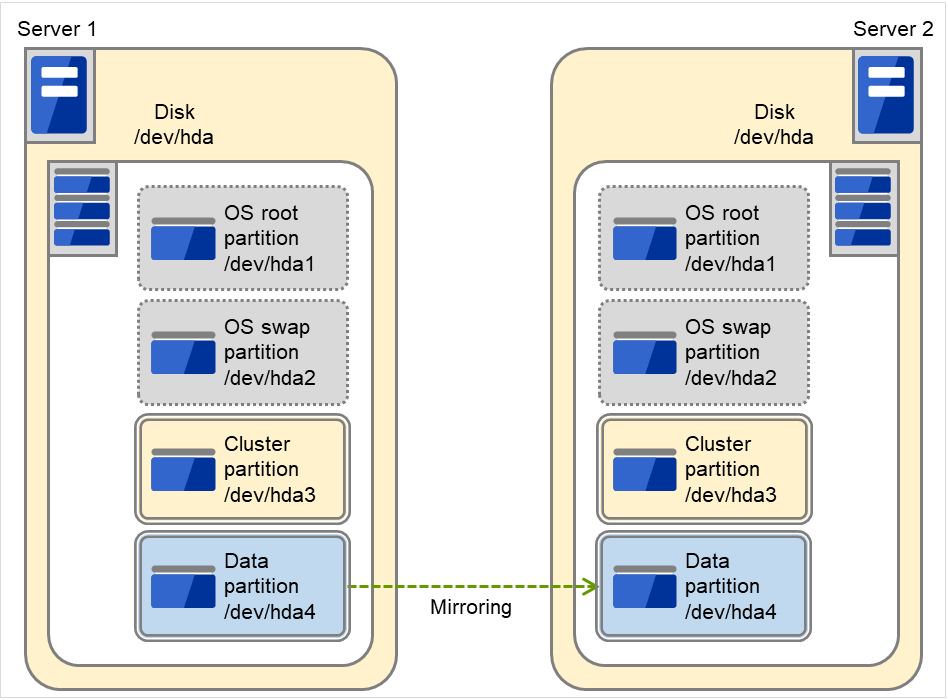

Fig. 2.5 HA cluster configuration (Data mirror type)

- Cheap since a shared disk is unnecessary.

- Ideal for systems with a smaller data volume because of mirroring.

The data mirror type is not recommended for large-scale systems that handle a large volume of data since data needs to be mirrored between servers.

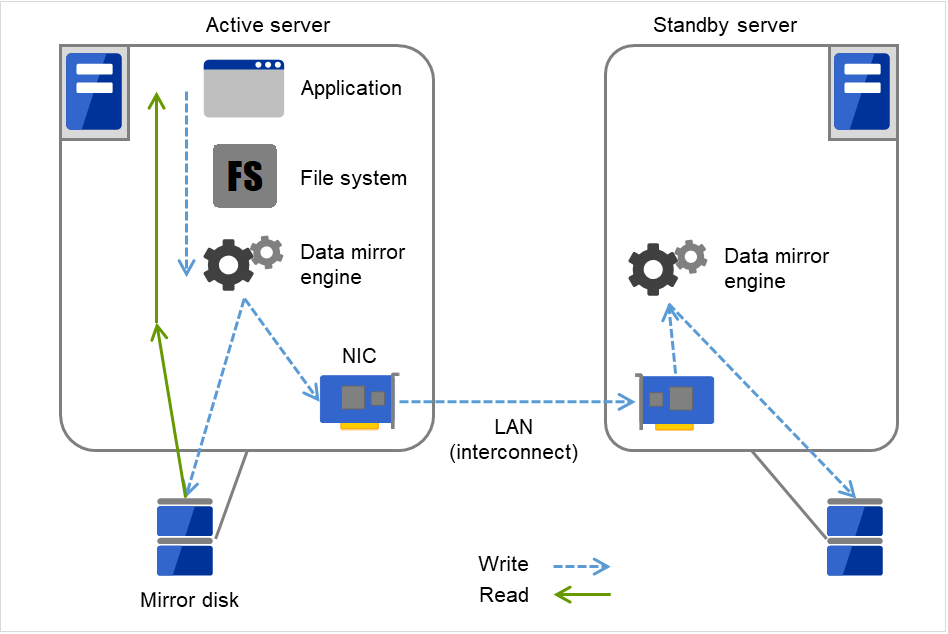

When a write request is made by an application, the data mirror engine not only writes the data to the local disk but also sends the write request to the standby server via the interconnect. The interconnect is a network connecting the servers. It is used to monitor whether each server in the cluster system is active. In addition, the interconnect is sometimes used to transfer data in the data mirror type cluster system. The data mirror engine on the standby server synchronizes data between the standby and active servers by writing the data to the local disk of the standby server.

For read requests from an application, data is simply read from the disk on the active server.

Fig. 2.6 Data mirror mechanism

Snapshot backup is an applied use of data mirroring. Because the data mirror type cluster system holds the shared data in two locations, you can keep the disk of the standby server as a snapshot backup, without spending time on a backup, simply by separating that server from the cluster.
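The write and read paths described above can be illustrated with a minimal sketch. It is not EXPRESSCLUSTER code: the class names `MirrorNode` and `MirrorEngine` are hypothetical, each disk is modelled as an in-memory dictionary, and the interconnect is replaced by a direct method call.

```python
class MirrorNode:
    """One server's local disk, modelled as a dict of block number -> bytes."""

    def __init__(self, name):
        self.name = name
        self.disk = {}

    def apply_write(self, block, data):
        self.disk[block] = data


class MirrorEngine:
    """Hypothetical stand-in for the data mirror engine on the active server."""

    def __init__(self, active, standby):
        self.active = active
        self.standby = standby

    def write(self, block, data):
        # Write to the local disk of the active server...
        self.active.apply_write(block, data)
        # ...and forward the same request to the standby server.
        # A real engine would send this over the interconnect.
        self.standby.apply_write(block, data)

    def read(self, block):
        # Reads are served from the disk on the active server only.
        return self.active.disk[block]


active = MirrorNode("server1")
standby = MirrorNode("server2")
engine = MirrorEngine(active, standby)
engine.write(0, b"order #1")
engine.write(1, b"order #2")
print(engine.read(1))
print(active.disk == standby.disk)
```

After both writes, the two dictionaries hold the same blocks, which is the property that lets the standby server take over the data.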

Failover mechanism and its problems

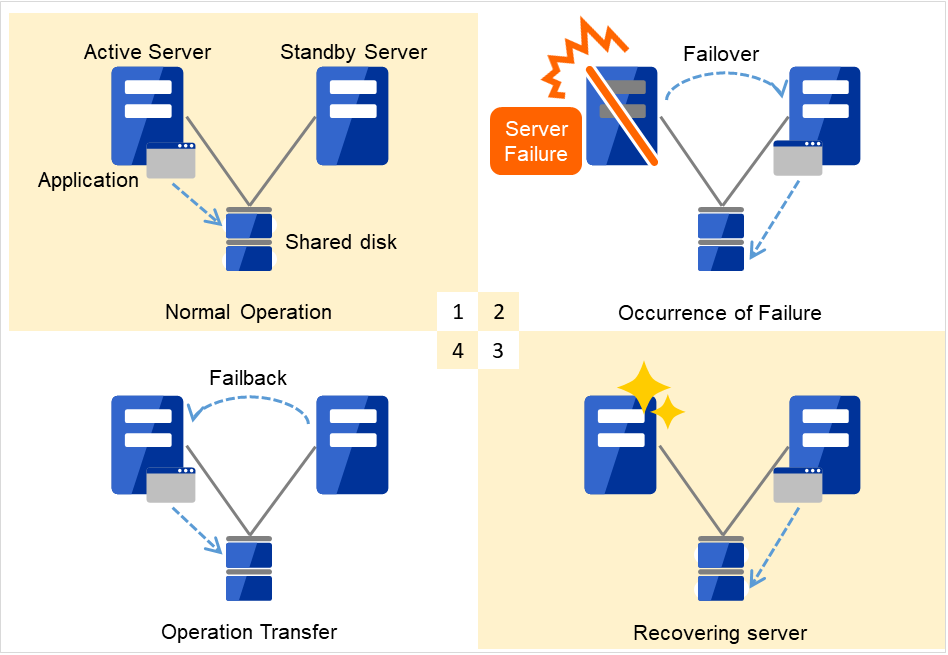

There are various cluster systems such as failover clusters, load distribution clusters, and high performance computing (HPC) clusters. The failover cluster is one of the high availability (HA) cluster systems that aim to increase operational availability through establishing server redundancy and passing operations being executed to another server when a failure occurs.

2.3. Error detection mechanism

Cluster software executes failover (that is, passes operations to another server) when a failure that can impact continued operation is detected. The following section gives you a quick view of how the cluster software detects a failure.

Heartbeat and detection of server failures

Failures that must be detected in a cluster system are failures that can cause all servers in the cluster to stop. Server failures include hardware failures such as power supply and memory failures, and OS panic. To detect such failures, heartbeat is employed to monitor whether or not the server is active.

Some cluster software programs use heartbeat not only to check whether the target is active through a ping response, but also to send status information on the local server. Such cluster software programs begin failover if no heartbeat response is received, treating the lack of response as a server failure. However, a grace period should be allowed before determining a failure, since a highly loaded server can respond late. Allowing a grace period results in a time lag between the moment a failure occurs and the moment the cluster software detects it.
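The trade-off between the grace period and detection delay can be seen in a small sketch. The class `HeartbeatMonitor` and its methods are hypothetical, the 90-second timeout is chosen only for illustration, and the clock is injected so that the example runs without waiting.

```python
import time


class HeartbeatMonitor:
    """Declares a server down only after no heartbeat for timeout_sec."""

    def __init__(self, timeout_sec, now=time.monotonic):
        self.timeout_sec = timeout_sec
        self._now = now
        self._last_seen = {}

    def record_heartbeat(self, server):
        self._last_seen[server] = self._now()

    def is_down(self, server):
        last = self._last_seen.get(server)
        if last is None:
            return False  # never heard from; not judged in this sketch
        return self._now() - last > self.timeout_sec


clock = [0.0]  # fake clock so the example does not have to sleep
monitor = HeartbeatMonitor(timeout_sec=90, now=lambda: clock[0])
monitor.record_heartbeat("server2")

clock[0] = 60.0
print(monitor.is_down("server2"))  # still inside the grace period

clock[0] = 91.0
print(monitor.is_down("server2"))  # grace period exceeded
```

A longer timeout tolerates a busier server but delays detection by the same amount.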

Detection of resource failures

Operations can stop for reasons other than the servers in the cluster stopping. Failures in disks used by applications, NIC failures, and failures in the applications themselves can also stop operations. These resource failures need to be detected as well so that failover can be executed for improved availability.

If the target is a physical device, one way to detect a resource failure is to access the target resource. For applications, in addition to monitoring whether the application processes are running, trying the service ports to an extent that does not affect operation is a way of detecting an error.
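Both application checks mentioned above can be sketched with the Python standard library. The function names are hypothetical, the process check is POSIX-only, and the port check only opens and closes a TCP connection, which is assumed here to be light enough not to affect the service.

```python
import os
import socket


def process_is_running(pid):
    """Return True if a process with this PID exists (POSIX only)."""
    try:
        os.kill(pid, 0)  # signal 0 performs error checking only
    except ProcessLookupError:
        return False
    except PermissionError:
        return True  # the process exists but belongs to another user
    return True


def port_is_accepting(host, port, timeout_sec=2.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout_sec):
            return True
    except OSError:
        return False


# Demonstration against a throwaway listener on the local machine.
listener = socket.socket()
listener.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
listener.listen(1)
port = listener.getsockname()[1]

print(process_is_running(os.getpid()), port_is_accepting("127.0.0.1", port))
listener.close()
print(port_is_accepting("127.0.0.1", port))
```

A process that is alive but no longer accepts connections passes the first check and fails the second, which is why the two are used together.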

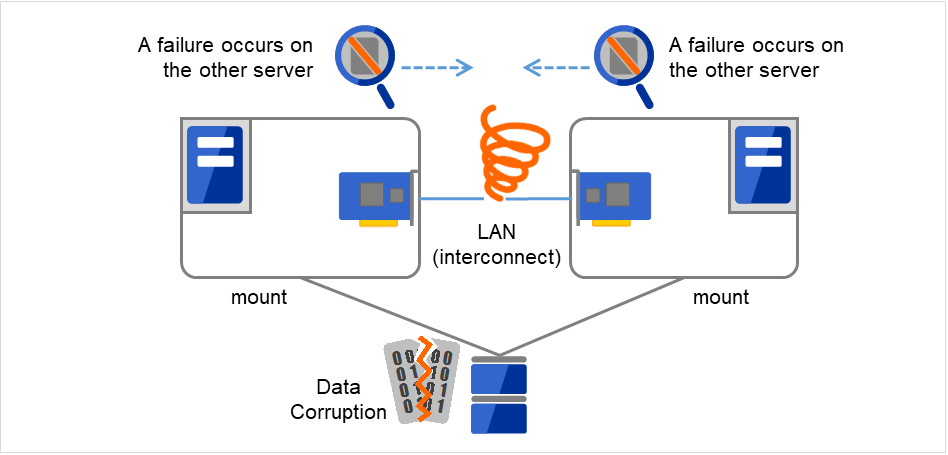

2.3.2. Network partition (split-brain syndrome)

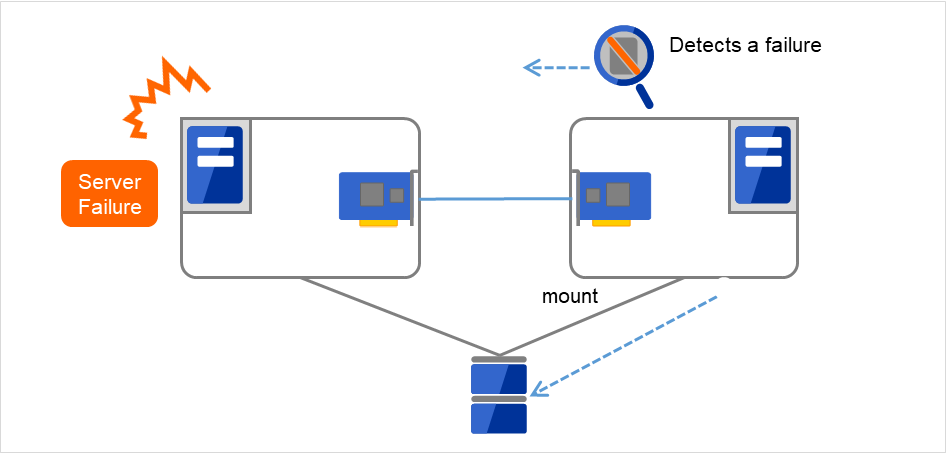

Heartbeat communication is used to monitor whether a server is active. When all interconnects between servers are disconnected, each server assumes that the other server(s) are down, and failover takes place. As a result, multiple servers mount a file system simultaneously, causing data corruption. This explains the importance of appropriate failover behavior in a cluster system when a failure occurs.

Fig. 2.8 Network partition problem

The problem explained in the section above is referred to as "network partition" or "split-brain syndrome." The failover cluster system is equipped with various mechanisms to ensure shared disk lock at the time when all interconnects are disconnected.

2.4. Taking over cluster resources

As mentioned earlier, resources to be managed by a cluster include disks, IP addresses, and applications. The functions used in the failover cluster system to inherit these resources are described below.

2.4.1. Taking over the data

Data to be passed from one server to another in a cluster system is stored in a partition on the shared disk. Taking over data therefore means re-mounting, on a healthy server, the file system that holds the files the application uses. Because the shared disk is physically connected to the server that inherits the data, all the cluster software has to do is mount the file system.

Fig. 2.9 Taking over data

"Figure 2.9 Taking over data" may look simple, but consider the following issues in designing and creating a cluster system.

One issue to consider is the recovery time for a file system. A file system to be inherited may have been in use by another server, or may have been in the middle of an update, just before the failure occurred, and so requires a file system consistency check. When the file system is large, the consistency check takes a very long time. It may take a few hours to complete, and that time is added in full to the failover time (the time to take over operation), which reduces system availability.

Another issue to consider is write assurance. When an application writes important data to a file, it tries to ensure that the data is actually written to disk by using a function such as synchronous writing. The data that the application assumes to have been written is expected to be inherited after failover. For example, a mail server reports completion of mail reception to other mail servers or clients only after it has securely written the received mail to a spool. This allows the spooled mail to be distributed again after the server is restarted. Likewise, a cluster system should ensure that mail written to the spool by one server is readable by another server.

2.4.2. Taking over the applications

The last step in taking over operations by cluster software is taking over the applications. Unlike fault tolerant computers (FTC), no process status such as contents of memory is inherited in typical failover cluster systems. The applications running on a failed server are inherited by rerunning them on a healthy server.

For example, when instances of a database management system (DBMS) are inherited, the database is automatically recovered (roll-forward/roll-back) by startup of the instances. The time needed for this database recovery is typically a few minutes though it can be controlled by configuring the interval of DBMS checkpoint to a certain extent.

Many applications can resume operation simply by being re-executed. Some applications, however, require recovery procedures after a failure. For these applications, cluster software allows scripts to be started instead of the applications themselves, so that the recovery process can be written in the script. The script contains whatever recovery is needed, including cleanup of half-updated files, and can branch on the reason the script was executed and on information about the server it is running on.
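The shape of such a recovery script can be sketched as follows. Everything here is an assumption made for illustration: the function name `recover_and_start`, the `start_factor` values `"normal"` and `"failover"`, and the convention that the application writes to `*.tmp` files and renames them on completion, so that leftover `*.tmp` files are half-updated.

```python
import tempfile
from pathlib import Path


def recover_and_start(data_dir, start_factor, start_app):
    """Clean up after a failover if needed, then start the application.

    start_factor: "normal" or "failover" (hypothetical values).
    start_app: callable that launches the application.
    Returns the names of the half-updated files that were removed.
    """
    removed = []
    if start_factor == "failover":
        # Assumed convention: leftover *.tmp files are half-updated.
        for leftover in sorted(Path(data_dir).glob("*.tmp")):
            leftover.unlink()
            removed.append(leftover.name)
    start_app()
    return removed


with tempfile.TemporaryDirectory() as d:
    Path(d, "spool.dat").write_text("complete")
    Path(d, "spool.dat.tmp").write_text("half-written")
    started = []
    removed = recover_and_start(d, "failover", lambda: started.append("app"))
    remaining = sorted(p.name for p in Path(d).iterdir())

print(removed, remaining, started)
```

On a normal start the cleanup branch is skipped and the application is launched directly.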

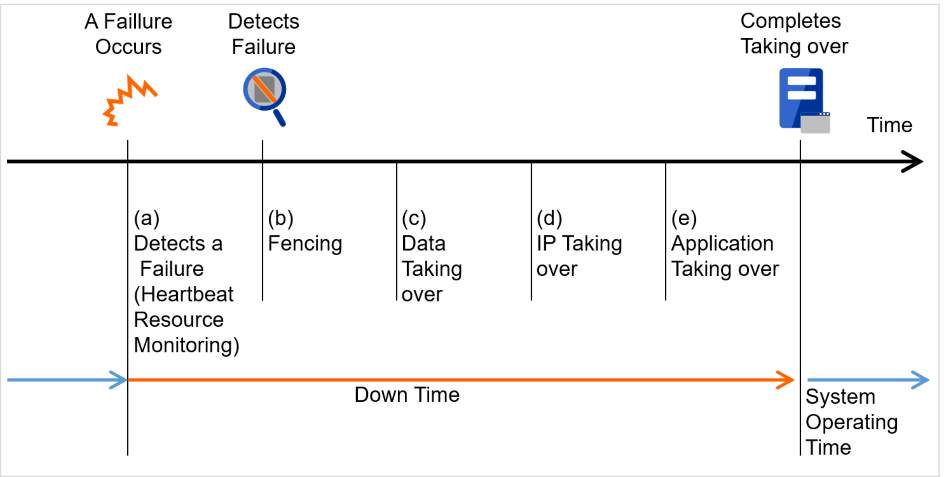

2.4.3. Summary of failover

To summarize the behavior of cluster software:

Detects a failure (heartbeat/resource monitoring)

Performs fencing (resolves a network partition (NP resolution) and disconnects the failed server)

Passes data

Passes the IP address

Takes over the application

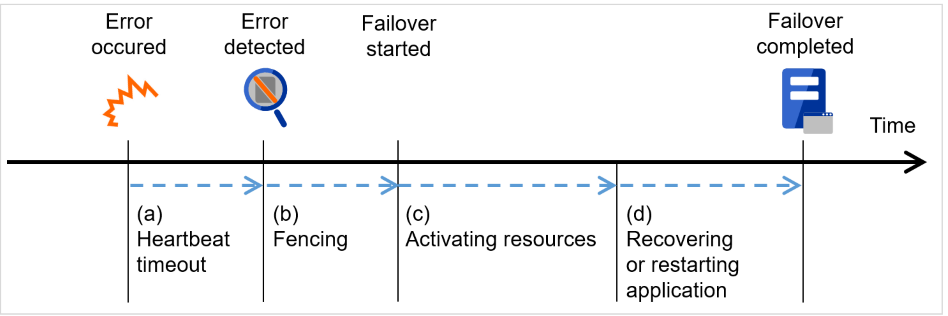

Fig. 2.10 Failover time chart

Cluster software is required to complete each task quickly and reliably (see "Figure 2.10 Failover time chart"). Cluster software achieves high availability with due consideration on what has been described so far.
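The sequence summarized above, once a failure has been detected, can be expressed as an ordered list of steps in which a failed step stops the sequence. This is only an illustrative sketch: `run_failover` and the step names are hypothetical, and the actions are placeholders that record what would be done.

```python
def run_failover(steps):
    """Run failover steps in order and stop at the first one that fails.

    steps: list of (name, action) pairs; action() returns True on success.
    Returns (names of completed steps, name of failed step or None).
    """
    completed = []
    for name, action in steps:
        if not action():
            return completed, name
        completed.append(name)
    return completed, None


log = []


def step(label):
    """Build a placeholder action that records its label and succeeds."""
    def action():
        log.append(label)
        return True
    return action


steps = [
    ("fencing", step("resolve network partition, stop the failed server")),
    ("data", step("mount the file system on the healthy server")),
    ("ip address", step("move the IP address")),
    ("application", step("start the application")),
]

completed, failed_at = run_failover(steps)
print(completed, failed_at)
```

Keeping the order fixed matters: the application must not start before fencing has ruled out a second active server and before the data is available.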

2.5. Eliminating single point of failure

Having a clear picture of the availability level required or aimed for is important in building a high availability system. This means that when you design a system, you need to study the cost effectiveness of countermeasures against the various failures that can disturb system operations, such as establishing a redundant configuration to continue operations and recovering operations within a short period of time.

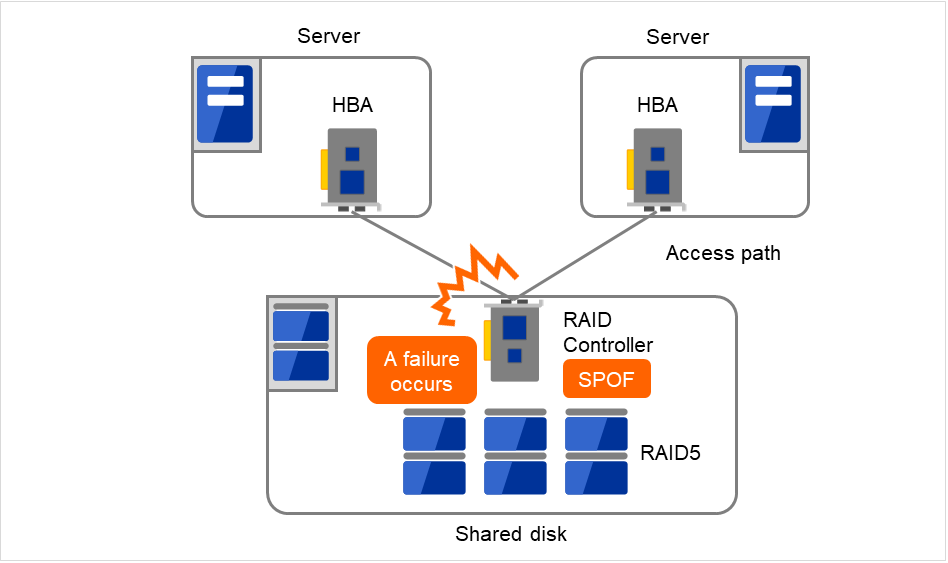

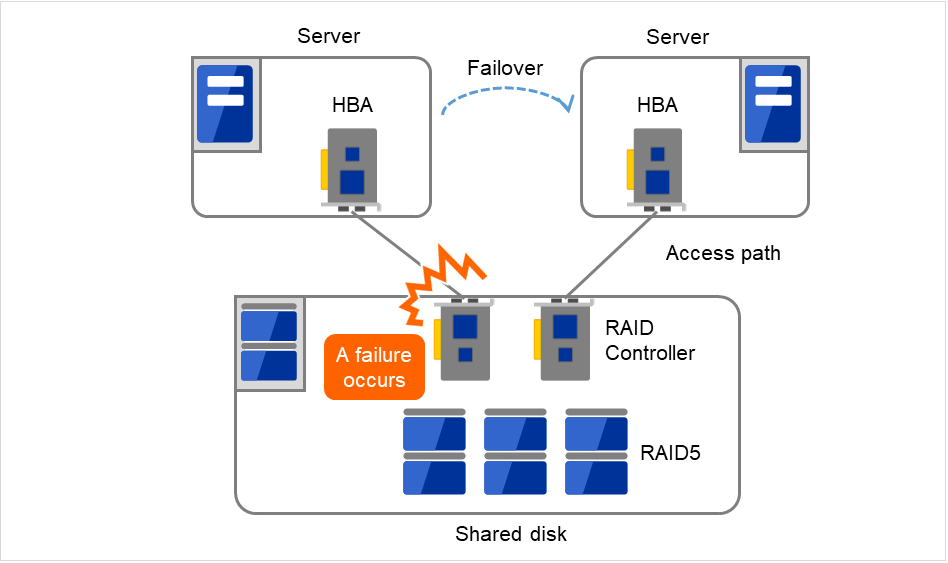

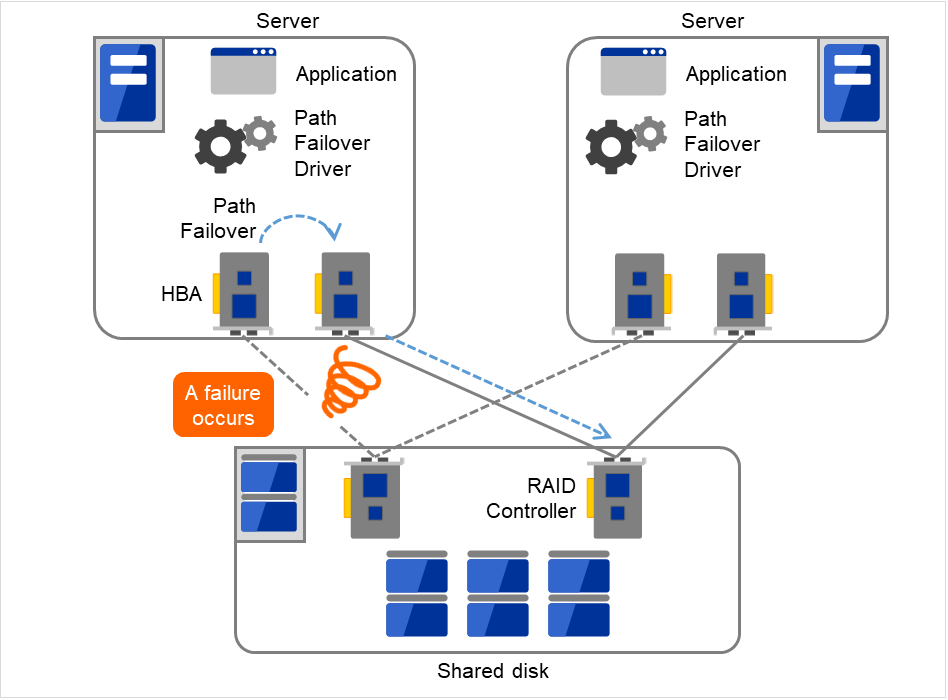

A single point of failure (SPOF), as described previously, is a component whose failure can stop the system. In a cluster system, you can eliminate the system's SPOF by establishing server redundancy. However, components shared among servers, such as a shared disk, may become a SPOF. The key in designing a high availability system is to duplicate or eliminate such shared components.

A cluster system can improve availability, but a failover takes a few minutes to switch systems, so the failover time is itself a factor that reduces availability. Techniques that improve the availability of a single server, such as ECC memory and redundant power supplies, are also important. The rest of this section, however, discusses solutions for the following three components, which are likely to become SPOFs.

Shared disk

Access path to the shared disk

LAN

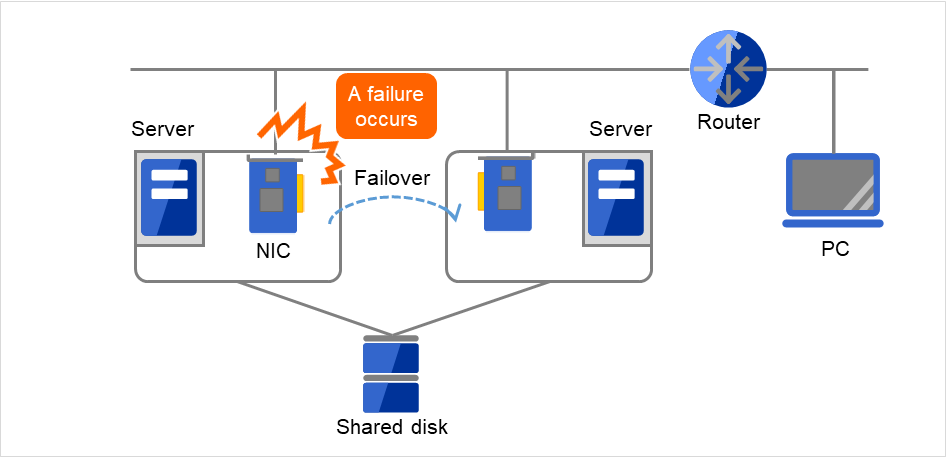

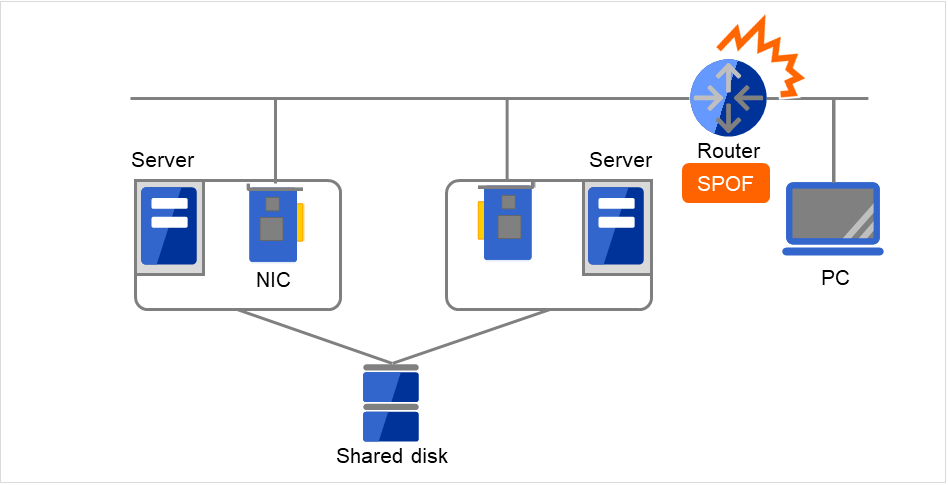

2.5.3. LAN

In any system that runs services on a network, a LAN failure is a major factor that disturbs operations of the system. If appropriate settings are made, the availability of a cluster system can be increased through failover between nodes when a NIC fails. However, a failure in a network device that resides outside the cluster system disturbs operation of the system.

Fig. 2.14 Example of a failure with LAN (NIC)

In the figure above, even if the NIC on the server fails, a failover maintains access from the PC to the service on the server.

Fig. 2.15 Example of a failure with LAN (Router)

In the figure above, if the router fails, access from the PC to the service on the server cannot be maintained (the router becomes a SPOF).

LAN redundancy is a solution to tackle device failures outside the cluster system and to improve availability. You can apply the methods used for a single server to increase LAN availability. For example, a primitive method is to keep a spare network device powered off and manually replace a failed device with it. Another is to multiplex the network path through a redundant configuration of high-performance network devices and switch paths automatically. A further option is to use a driver that supports a redundant NIC configuration, such as Intel's ANS driver.

Load balancing appliances and firewall appliances are also network devices that are likely to become SPOF. Typically they allow failover configurations through standard or optional software. Having redundant configuration for these devices should be regarded as requisite since they play important roles in the entire system.

2.6. Operation for availability

2.6.1. Evaluation before starting operation

Given that many of the factors causing system trouble are said to be the product of incorrect settings or poor maintenance, evaluation before actual operation is important for realizing a high availability system and its stable operation. Doing the following before actual operation of the system is key to improving availability:

Clarify and list failures, study actions to be taken against them, and verify effectiveness of the actions by creating dummy failures.

Conduct an evaluation according to the cluster life cycle and verify performance (such as in degenerated mode).

Arrange a guide for system operation and troubleshooting based on the evaluation mentioned above.

Having a simple design for a cluster system contributes to simplifying verification and improvement of system availability.

2.6.2. Failure monitoring

Despite the above efforts, failures still occur. If you use a system for a long time, you cannot escape failures: hardware deteriorates with age, and software produces failures and errors through memory leaks or operation beyond the originally intended capacity. Improving the availability of hardware and software is important, yet monitoring for failures and troubleshooting problems is more important. For example, in a cluster system, you can continue running the system by spending a few minutes on switching even if a server fails. However, if you leave the failed server as it is, the system no longer has redundancy, and the cluster system becomes meaningless should the next failure occur.

If a failure occurs, the system administrator must immediately take action, such as removing a newly emerged SPOF, to prevent another failure. Functions for remote maintenance and failure reporting are very important in supporting system administration. Linux is known for providing good remote maintenance functions. Mechanisms for reporting failures are also falling into place. To achieve high availability with a cluster system, you should:

Remove or have complete control over single points of failure.

Have a simple design that has tolerance and resistance for failures, and be equipped with a guide for operation and troubleshooting.

Detect a failure quickly and take appropriate action against it.

3. Using EXPRESSCLUSTER

This chapter explains the components of EXPRESSCLUSTER, how to design a cluster system, and how to use EXPRESSCLUSTER.

This chapter covers:

3.1. What is EXPRESSCLUSTER?

EXPRESSCLUSTER is software that enhances the availability and expandability of systems through a redundant (clustered) system configuration. The application services running on the active server are automatically taken over by a standby server when an error occurs on the active server.

3.2. EXPRESSCLUSTER modules

EXPRESSCLUSTER consists of the following two modules:

- EXPRESSCLUSTER Server: A core component of EXPRESSCLUSTER. This includes all high availability functions of the server. The server functions of the Cluster WebUI are also included.

- Cluster WebUI: This is a tool to create the configuration data of EXPRESSCLUSTER and to manage EXPRESSCLUSTER operations. It uses a Web browser as a user interface. The Cluster WebUI is installed in EXPRESSCLUSTER Server, but it is distinguished from the EXPRESSCLUSTER Server because the Cluster WebUI is operated from the Web browser on the management PC.

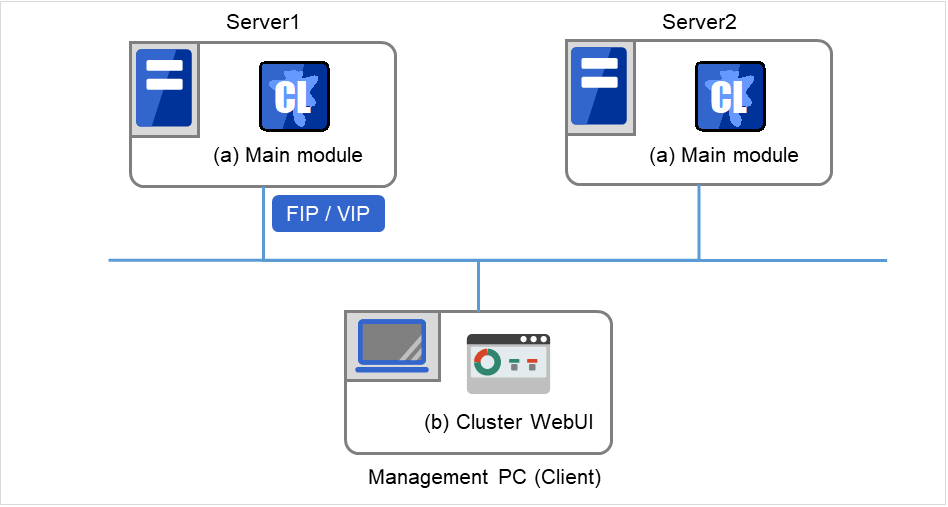

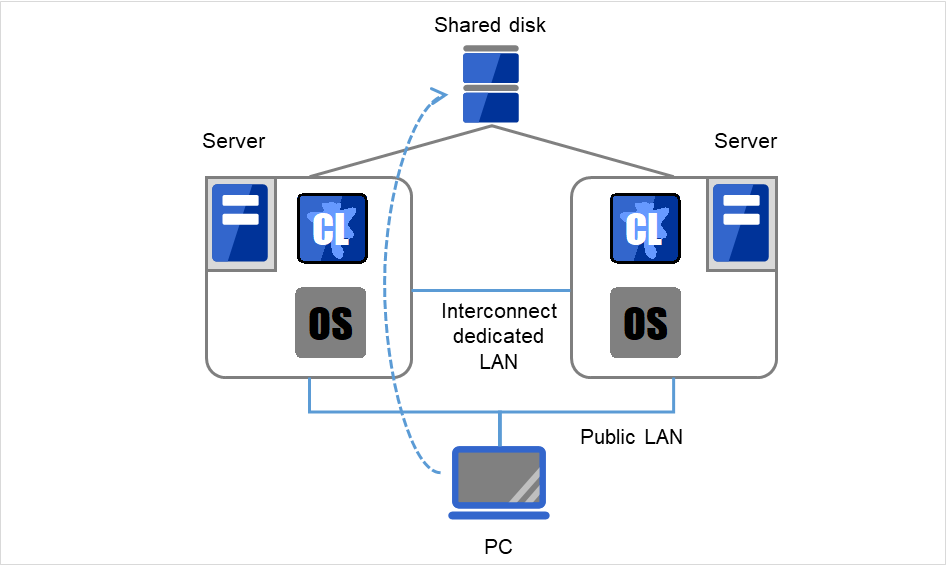

3.3. Software configuration of EXPRESSCLUSTER

The software configuration of EXPRESSCLUSTER should look similar to the figure below. Install the EXPRESSCLUSTER Server (software) on a Linux server, and the Cluster WebUI on a management PC or a server. Because the main functions of Cluster WebUI are included in EXPRESSCLUSTER Server, it is not necessary to separately install them. The Cluster WebUI can be used through the web browser on the management PC or on each server in the cluster.

EXPRESSCLUSTER Server

Cluster WebUI

Fig. 3.1 Software configuration of EXPRESSCLUSTER

3.3.1. How an error is detected in EXPRESSCLUSTER

There are three kinds of monitoring in EXPRESSCLUSTER: (1) server monitoring, (2) application monitoring, and (3) internal monitoring. These monitoring functions let you detect an error quickly and reliably. The details of the monitoring functions are described below.

3.3.2. What is server monitoring?

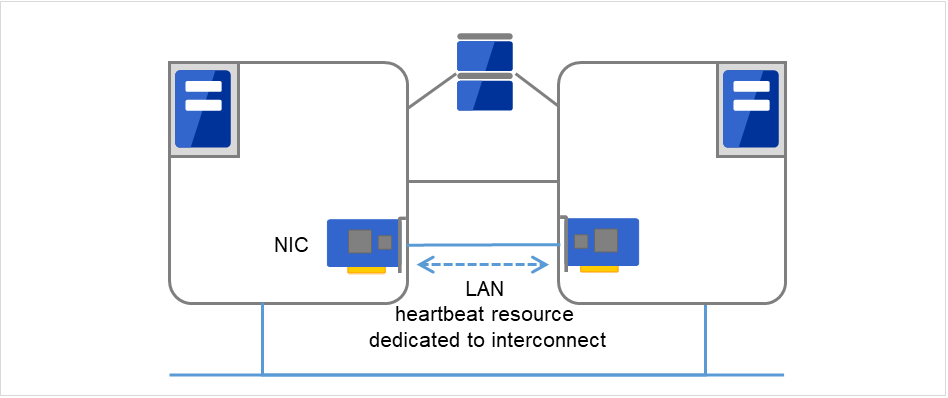

- Primary Interconnect: Uses an Ethernet NIC in a communication path dedicated to the failover-type cluster system. This is used to exchange information between the servers as well as to perform heartbeat communication.

Fig. 3.2 LAN heartbeat/Kernel mode LAN heartbeat (Primary interconnect)

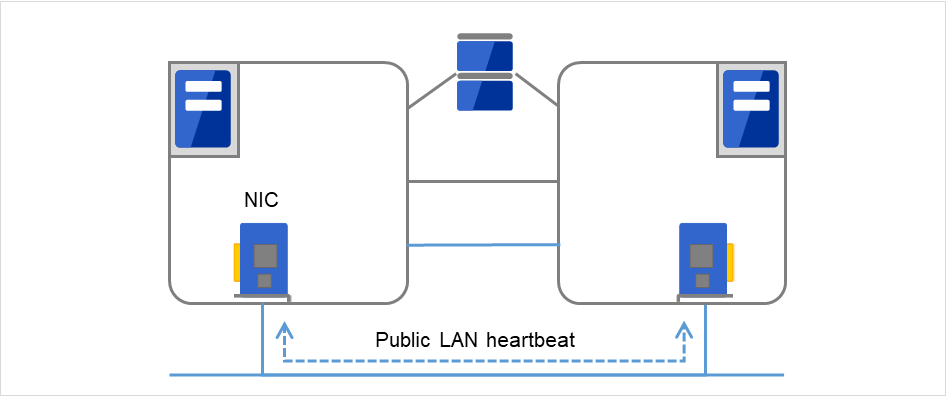

- Secondary Interconnect: Uses the communication path used for communication with client machines as an alternative interconnect. Any Ethernet NIC can be used as long as TCP/IP can be used. This is also used to exchange information between the servers and to perform heartbeat communication.

Fig. 3.3 LAN heartbeat/Kernel mode LAN heartbeat (Secondary interconnect)

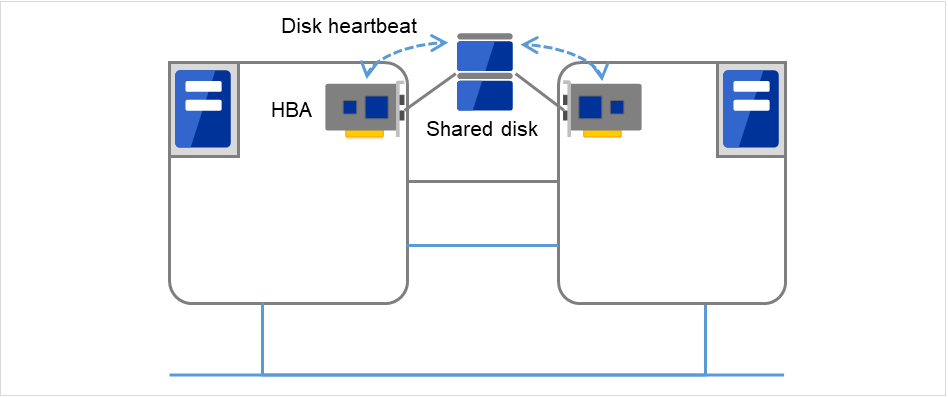

- Shared disk: Creates an EXPRESSCLUSTER-dedicated partition (EXPRESSCLUSTER partition) on the disk that is connected to all servers that constitute the failover-type cluster system, and performs heartbeat communication on the EXPRESSCLUSTER partition.

Fig. 3.4 Disk heartbeat

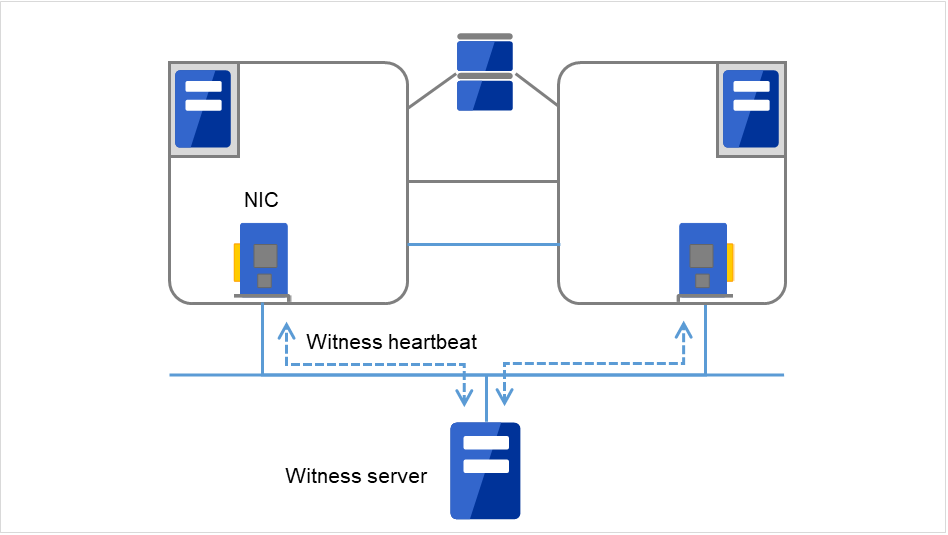

- Witness: This is used by the external Witness server, which runs the Witness server service, to check through communication with them whether the other servers that make up the failover-type cluster exist.

Fig. 3.5 Witness heartbeat

Having these communication paths dramatically improves the reliability of the communication between the servers, and prevents the occurrence of network partition.

Note

Network partition refers to a condition in which a network is split because of a problem in all communication paths between the servers in a cluster. A cluster system that cannot handle a network partition cannot distinguish a problem in a communication path from a problem in a server. As a result, multiple servers may access the same resource and corrupt the data in the cluster system.

3.3.3. What is application monitoring?

Application monitoring is a function that monitors applications and the factors that can prevent an application from running.

- Activation status of application monitoring: An error can be detected by starting up an application from an exec resource in EXPRESSCLUSTER and regularly checking whether its process is active by using the PID monitor resource. This is effective when the application stops because of abnormal termination.

Note

An error in a resident process cannot be detected in an application started up by EXPRESSCLUSTER. When the monitoring target application starts and stops a resident process, an internal application error (such as application stalling or an erroneous result) cannot be detected.

- Resource monitoring: An error can be detected by monitoring the cluster resources (such as disk partitions and IP addresses) and the public LAN using the monitor resources of EXPRESSCLUSTER. This is effective when the application stops because of an error in a resource that the application needs in order to operate.

3.3.4. What is internal monitoring?

Critical monitoring of EXPRESSCLUSTER process

3.3.5. Monitorable and non-monitorable errors

There are monitorable and non-monitorable errors in EXPRESSCLUSTER. It is important to know what can or cannot be monitored when building and operating a cluster system.

3.3.6. Detectable and non-detectable errors by server monitoring

Monitoring condition: A heartbeat from a server with an error is stopped

Example of errors that can be monitored:

Hardware failure (with which the OS cannot continue operating)

System panic

Example of error that cannot be monitored:

Partial failure on OS (for example, only a mouse or keyboard does not function)

3.3.7. Detectable and non-detectable errors by application monitoring

Monitoring conditions: Termination of applications with errors, continuous resource errors, and disconnection of a path to the network devices.

Example of errors that can be monitored:

Abnormal termination of an application

Failure to access the shared disk (such as HBA [1] failure)

Public LAN NIC problem

Example of errors that cannot be monitored:

Application stalling or producing erroneous results. EXPRESSCLUSTER cannot monitor application stalling and error results. However, it is possible to perform failover by creating a program that monitors the application and terminates itself when an error is detected, starting the program using the exec resource, and monitoring the program using the PID monitor resource.

[1] HBA is an abbreviation for host bus adapter. This adapter is not for the shared disk, but for the server.
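The self-terminating monitoring program mentioned above for stalled applications might be sketched as follows. The function `watch`, its parameters, and the rule of three consecutive failures are assumptions for illustration. The health check itself is left to the caller, because what counts as "stalled" depends on the application.

```python
import time


def watch(check, interval_sec=5.0, max_failures=3, sleep=time.sleep):
    """Poll check() until it fails max_failures times in a row.

    Returns 1 when the limit is reached, so that the caller can exit with
    a non-zero status and let process monitoring notice the termination.
    """
    failures = 0
    while True:
        failures = 0 if check() else failures + 1
        if failures >= max_failures:
            return 1
        sleep(interval_sec)


# Demonstration with scripted check results and no real waiting.
results = iter([True, False, True, False, False, False])
exit_code = watch(lambda: next(results), max_failures=3, sleep=lambda s: None)
print(exit_code)
```

In a real program the last line would be something like `sys.exit(watch(my_check))`, where `my_check` is a hypothetical function that, for example, sends a test request to the application and verifies the reply.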

3.4. Fencing Function

EXPRESSCLUSTER's fencing function consists of network partition resolution and forced stopping.

3.4.1. Network partition resolution

ping method

http method

See also

For the details on the network partition resolution method, see "Details on network partition resolution resources" of the Reference Guide.
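As a rough illustration of the idea behind a ping-style method, the sketch below decides what a server should do when it loses the heartbeat from its peer. It assumes, without confirmation from this guide, that a server which can still reach an agreed ping target treats the peer as failed, and a server which cannot reach it treats itself as isolated. The function name and return values are hypothetical; see the Reference Guide for the actual behavior.

```python
def resolve_network_partition(heartbeat_lost, ping_target_reachable):
    """Decide the action for one server (illustrative decision table)."""
    if not heartbeat_lost:
        return "continue"  # the peer is alive; nothing to resolve
    if ping_target_reachable:
        return "failover"  # the peer is presumed down
    return "shutdown"      # this server is presumed isolated


# Both servers lose the heartbeat, but only server1 still reaches the target.
print(resolve_network_partition(True, True))   # server1
print(resolve_network_partition(True, False))  # server2
```

In this scenario only one server proceeds with failover, so the two servers never mount the same file system at once. The sketch does not cover the case where both servers can still reach the target.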

3.4.2. Forced stop

When a server failure is detected, a healthy server can send a stop request to the failed server. Making the failed server stop eliminates the possibility of simultaneously starting business applications on two or more servers. The forced stop is made before a failover is started.

See also

For the details on the forced stop function, see "Forced stop resource details" in the "Reference Guide".

3.5. Failover mechanism

Upon detecting that a heartbeat from a server is interrupted, EXPRESSCLUSTER determines whether the cause of this interruption is an error in a server or a network partition before starting a failover. Then a failover is performed by activating various resources and starting up applications on a properly working server.

The group of resources which fail over at the same time is called a "failover group." From a user's point of view, a failover group appears as a virtual computer.

Note

In a cluster system, a failover is performed by restarting the application from a properly working node. Therefore, what is saved in an application memory cannot be failed over.

It takes a few minutes from the occurrence of an error to the completion of failover. See "Figure 3.6 Failover time chart" below:

Fig. 3.6 Failover time chart

Heartbeat timeout

The time for a standby server to detect an error after that error occurred on the active server.

The setting values of the cluster properties should be adjusted depending on the application load. (The default value is 90 seconds.)

Fencing

The time for network partition resolution and forced stopping.

- For network partition resolution, EXPRESSCLUSTER checks whether the stop of heartbeat (heartbeat timeout) detected from the other server is due to a network partition or an error in the other server. Confirmation completes immediately.

- For forced stopping, a stop request is sent to the server that is recognized to be the failure source. How long this takes varies depending on the cluster's operating environment, such as a physical one, a virtual one, or the cloud.

Activating various resources

The time to activate the resources necessary for operating an application.

The file system recovery, transfer of data in disks, and transfer of IP addresses are performed.

The resources can be activated in a few seconds in ordinary settings, but the required time changes depending on the type and the number of resources registered to the failover group. For more information, refer to the "Installation and Configuration Guide".

Start script execution time

The data recovery time for a roll-back or roll-forward of the database and the startup time of the application to be used in operation.

The time for roll-back or roll-forward can be predicted by adjusting the check point interval. For more information, refer to the document that comes with each software product.
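The four phases in the time chart add up to the total failover time, which a short calculation makes concrete. Only the 90-second heartbeat timeout comes from this guide; the function name and the other three durations are made-up values for illustration and will differ in any real environment.

```python
def failover_time_sec(heartbeat_timeout=90, fencing=0,
                      resource_activation=0, start_script=0):
    """Sum the phases of the failover time chart, in seconds."""
    return heartbeat_timeout + fencing + resource_activation + start_script


# Illustrative values only: 5 s fencing, 10 s resource activation,
# 120 s for database recovery and application startup.
total = failover_time_sec(fencing=5, resource_activation=10, start_script=120)
print(total)  # 225
```

With these numbers the heartbeat timeout and the start script dominate, which is why the timeout setting and the checkpoint interval are the values worth tuning first.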

3.5.1. Failover resources

EXPRESSCLUSTER can fail over the following resources:

Switchable partition

Resources such as disk resource, mirror disk resource and hybrid disk resource.

A disk partition to store the data that the application takes over.

Floating IP Address

When a client connects to an application through the floating IP address, it does not need to be aware that servers are switched by failover processing.

This is achieved by dynamically allocating the IP address to the public LAN adapter and sending an ARP packet. Connection by floating IP address is possible from most network devices.

Script (exec resource)

In EXPRESSCLUSTER, applications are started up from the scripts.

A file on the shared disk that is failed over may be incomplete as data even if the file system itself is working properly. In the scripts, write the application-specific recovery processing to run at failover, in addition to the startup of the application.

Note

In a cluster system, failover is performed by restarting the application from a properly working node. Therefore, what is saved in an application memory cannot be failed over.

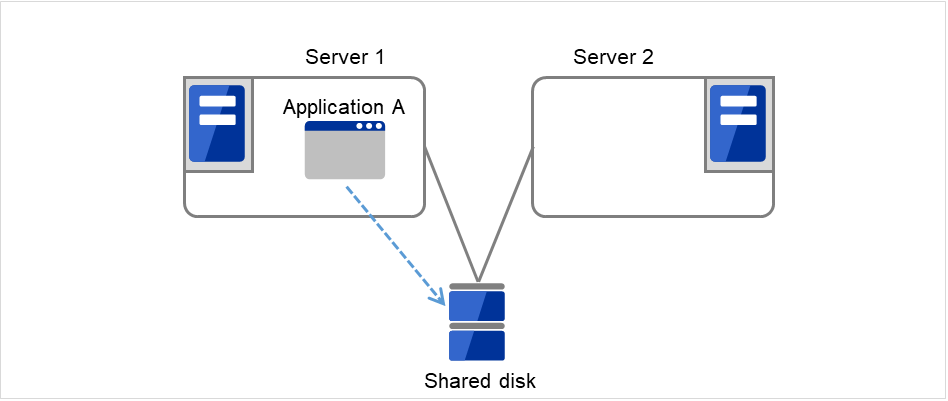

3.5.2. System configuration of the failover-type cluster¶

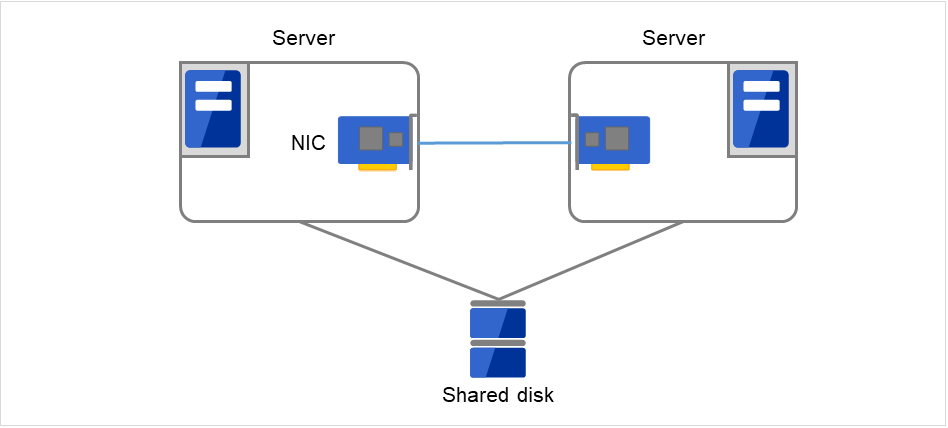

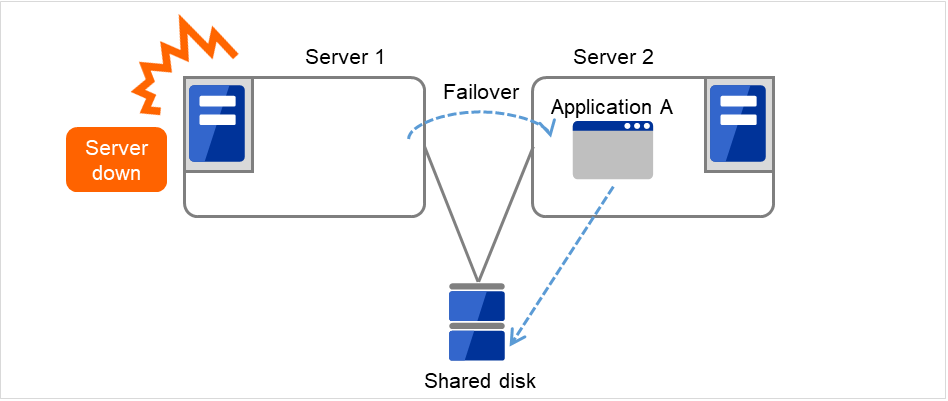

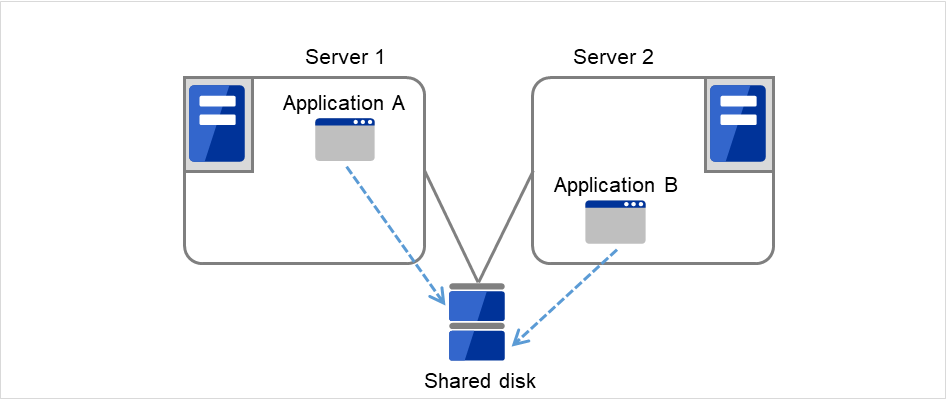

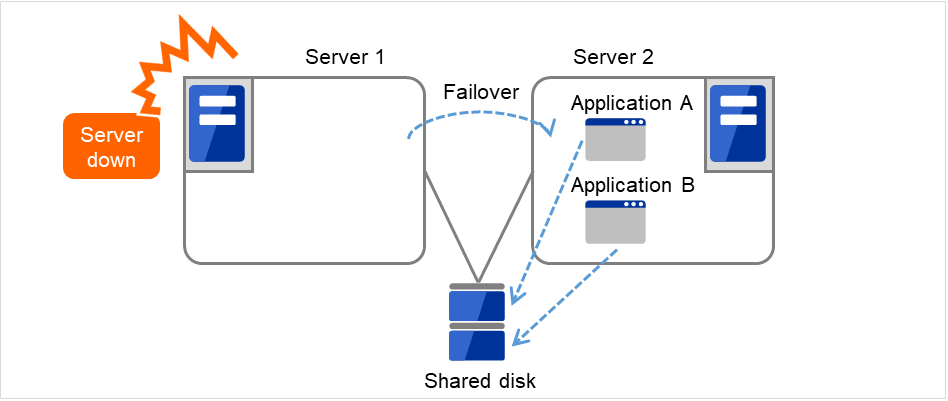

In a failover-type cluster, a disk array device is shared between the servers in a cluster. When an error occurs on a server, the standby server takes over the applications using the data on the shared disk.

Fig. 3.7 System configuration of failover-type cluster¶

A failover-type cluster can be divided into the following categories depending on the cluster topologies:

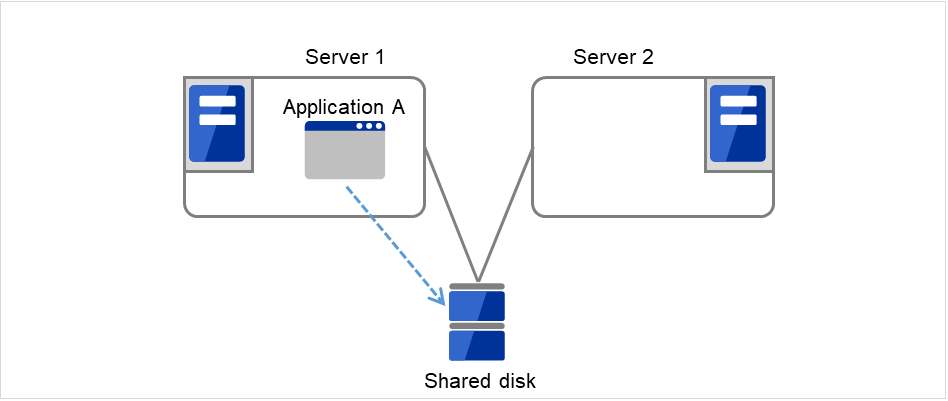

Uni-Directional Standby Cluster System

In the uni-directional standby cluster system, the active server runs applications while the other server, the standby server, does not. This is the simplest cluster topology and you can build a high-availability system without performance degradation after failing over.

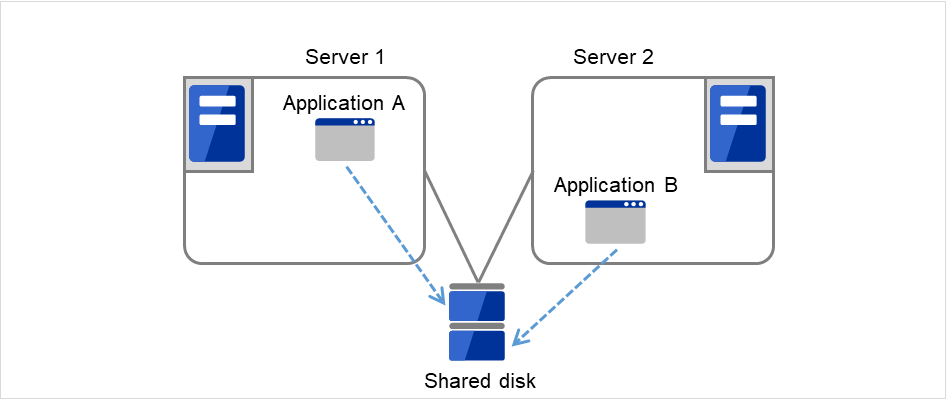

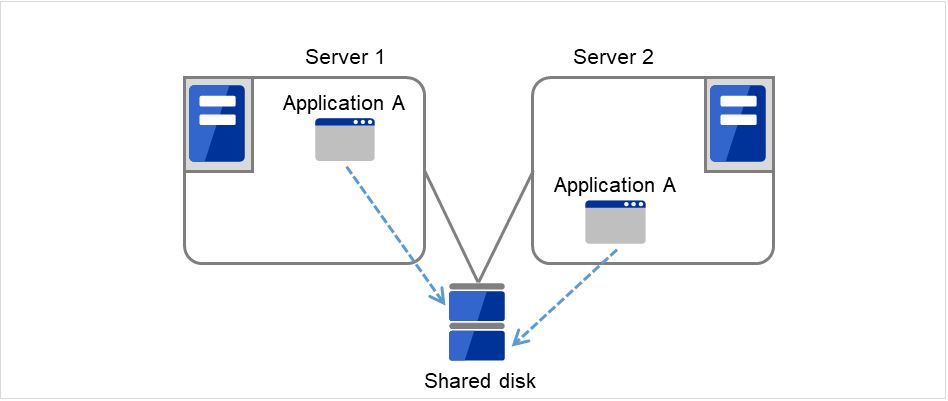

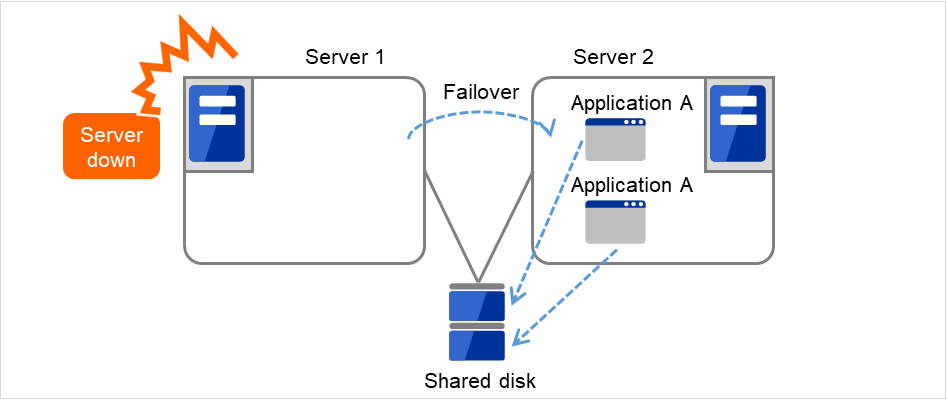

Multi-directional standby cluster system with the same application

In a multi-directional standby cluster system with the same application, the same application is activated on multiple servers, and these servers also operate as standby servers. The application must support multi-directional standby operation. When the application data can be split into multiple parts, you can build a load distribution system on a per-partition basis by changing the server that a client connects to depending on the data to be accessed.

Multi-directional standby cluster system with different applications

In a multi-directional standby cluster system with different applications, different applications are activated on multiple servers, and these servers also operate as standby servers. The applications do not have to support multi-directional standby operation. A load distribution system can be built on a per-application basis.

Application A and Application B are different applications.

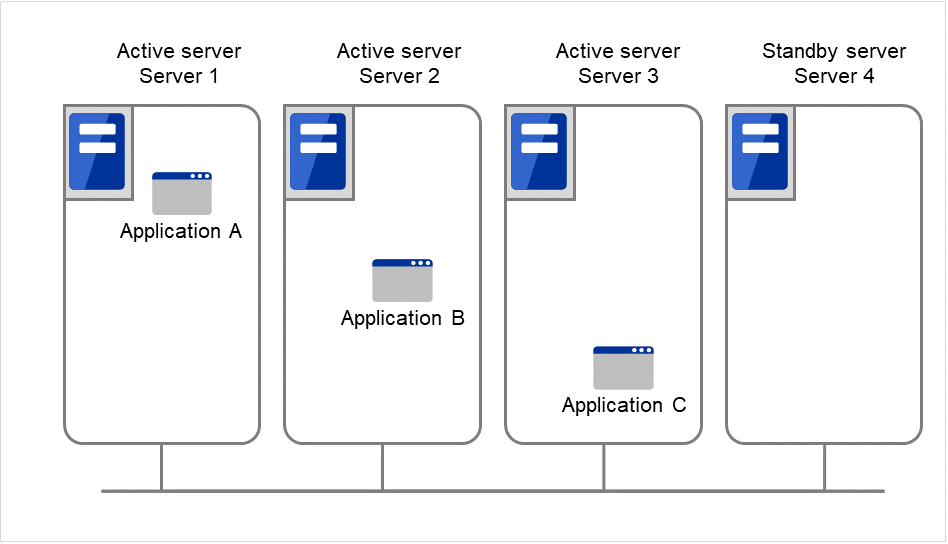

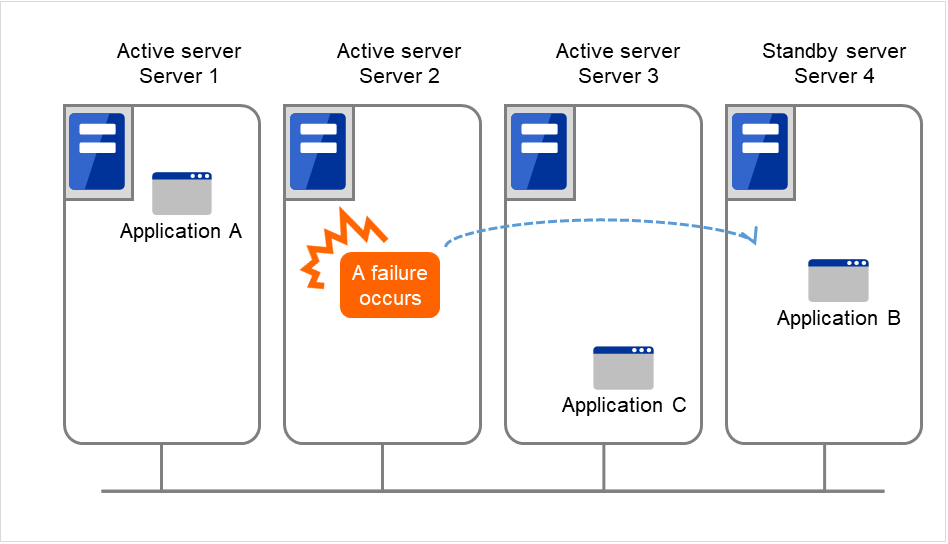

Node to Node Configuration

The configuration can be expanded with more nodes by applying the configurations introduced thus far. In the node to node configuration described below, three different applications run on three servers, and one standby server takes over an application if any problem occurs. In a uni-directional standby cluster system, one of the two servers functions as a standby server. In a node to node configuration, however, only one of the four servers functions as a standby server, and no performance deterioration is anticipated as long as an error occurs on only one server.

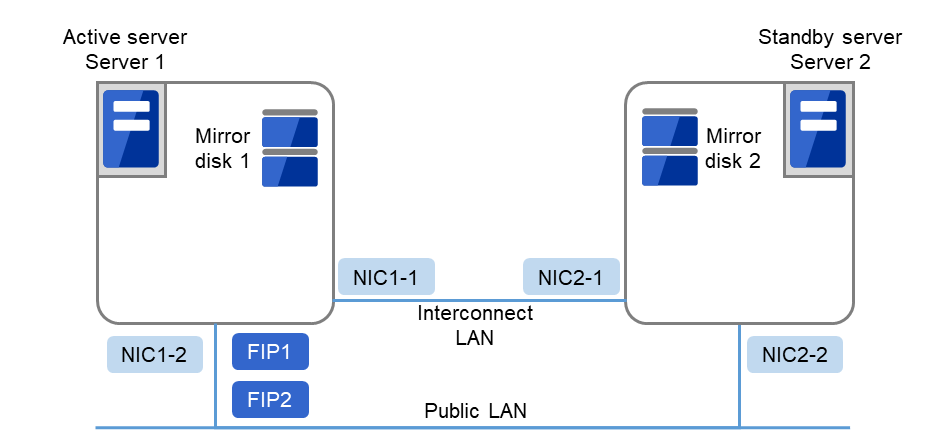

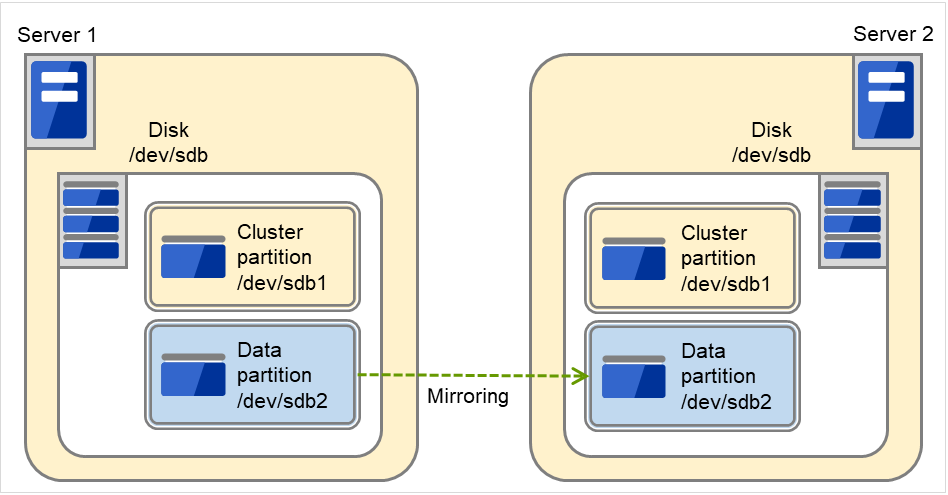

3.5.4. Hardware configuration of the mirror disk type cluster¶

The hardware configuration of the mirror disk in EXPRESSCLUSTER is described below.

Unlike the shared disk type, a network to copy the mirror disk data is necessary. In general, the NIC for internal communication in EXPRESSCLUSTER is used for this purpose.

Mirror disks need to be separated from the operating system; however, they do not depend on a connection interface (IDE or SCSI).

Sample cluster environment with mirror disks used (When cluster partitions and data partitions are allocated to OS-installed disks)

In the following configuration, free partitions of the OS-installed disks are used as cluster partitions and data partitions.

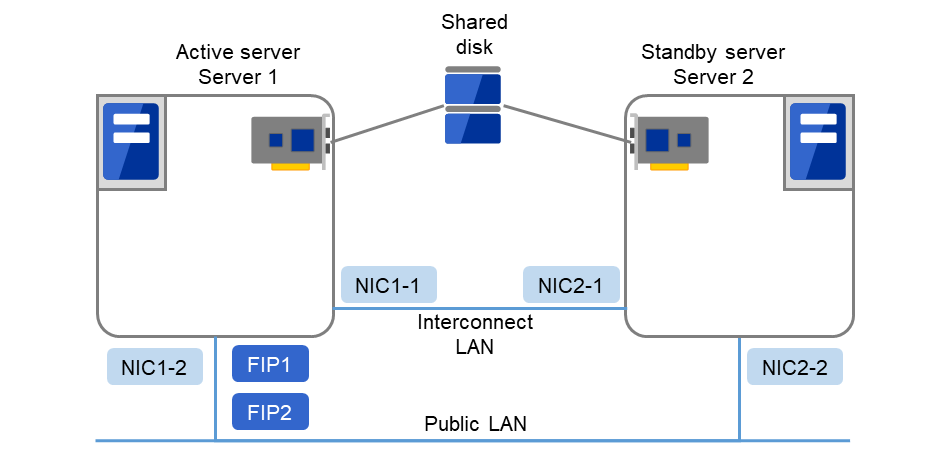

Fig. 3.17 Example of cluster configuration (1) (Mirror disk type)¶

| Parameter | Value |
|---|---|
| FIP1 | 10.0.0.11 (Access destination from the Cluster WebUI client) |
| FIP2 | 10.0.0.12 (Access destination from the operation client) |
| NIC1-1 | 192.168.0.1 |
| NIC1-2 | 10.0.0.1 |
| NIC2-1 | 192.168.0.2 |
| NIC2-2 | 10.0.0.2 |
| RS-232C device | /dev/ttyS0 |
| /boot device for OS | /dev/sda1 |
| Swap device for OS | /dev/sda2 |
| /(root) device for OS | /dev/sda3 |
| Device for cluster partitions | /dev/sda5 |
| Device for data partitions | /dev/sda6 |
| Mount point | /mnt/sda6 |
| File system | ext3 |

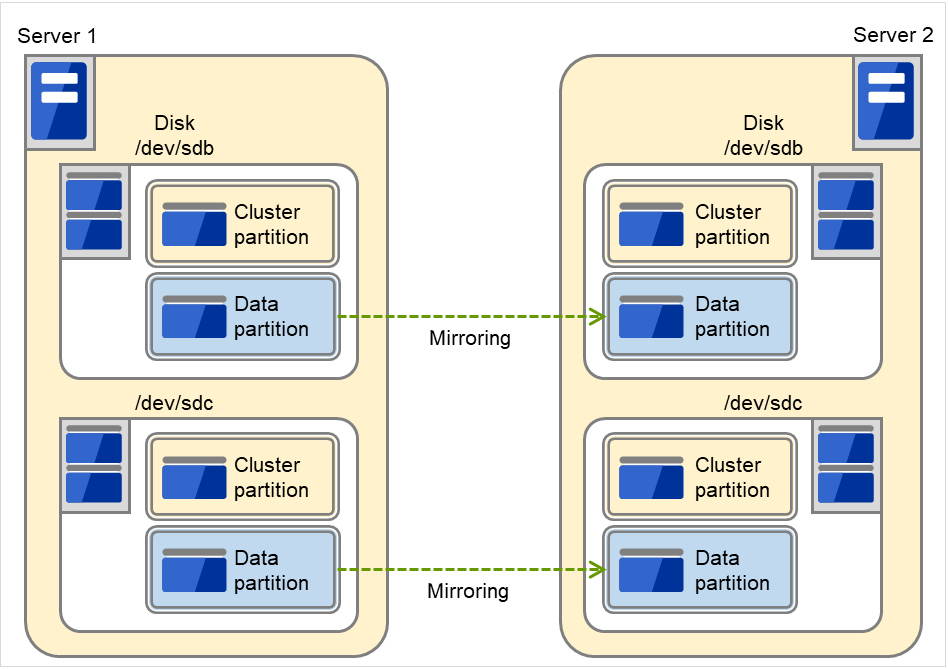

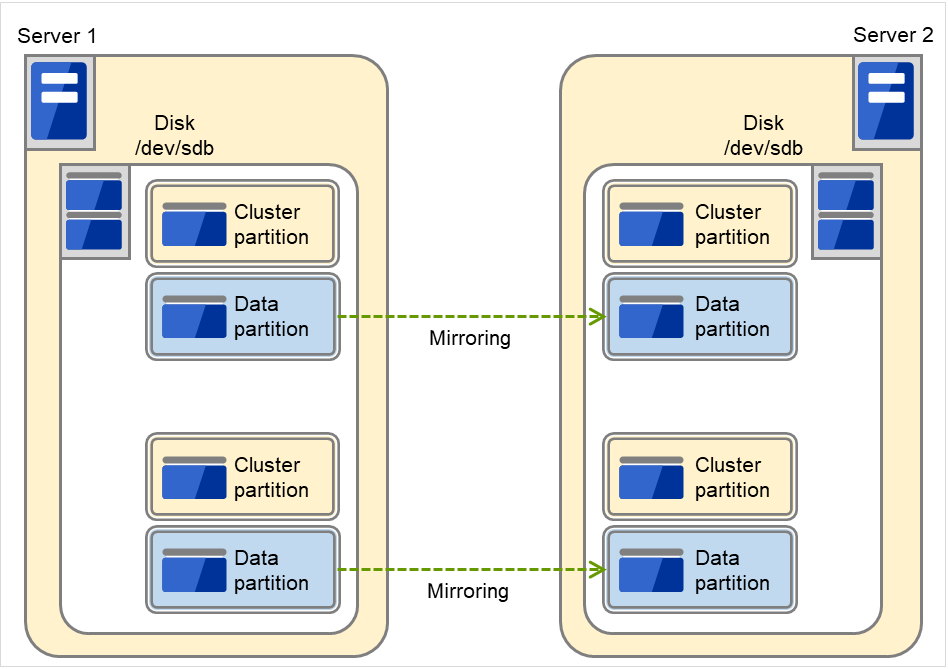

Sample cluster environment with mirror disks used (When disks are prepared for cluster partitions and data partitions)

In the following configuration, disks are prepared to be used for cluster partitions and data partitions, and connected to the servers.

Fig. 3.18 Example of cluster configuration (2) (Mirror disk type)¶

| Parameter | Value |
|---|---|
| FIP1 | 10.0.0.11 (Access destination from the Cluster WebUI client) |
| FIP2 | 10.0.0.12 (Access destination from the operation client) |
| NIC1-1 | 192.168.0.1 |
| NIC1-2 | 10.0.0.1 |
| NIC2-1 | 192.168.0.2 |
| NIC2-2 | 10.0.0.2 |
| RS-232C device | /dev/ttyS0 |
| /boot device for OS | /dev/sda1 |
| Swap device for OS | /dev/sda2 |
| /(root) device for OS | /dev/sda3 |
| Device for cluster partitions | /dev/sdb1 |
| Mirror resource disk device | /dev/sdb2 |
| Mount point | /mnt/sdb2 |
| File system | ext3 |

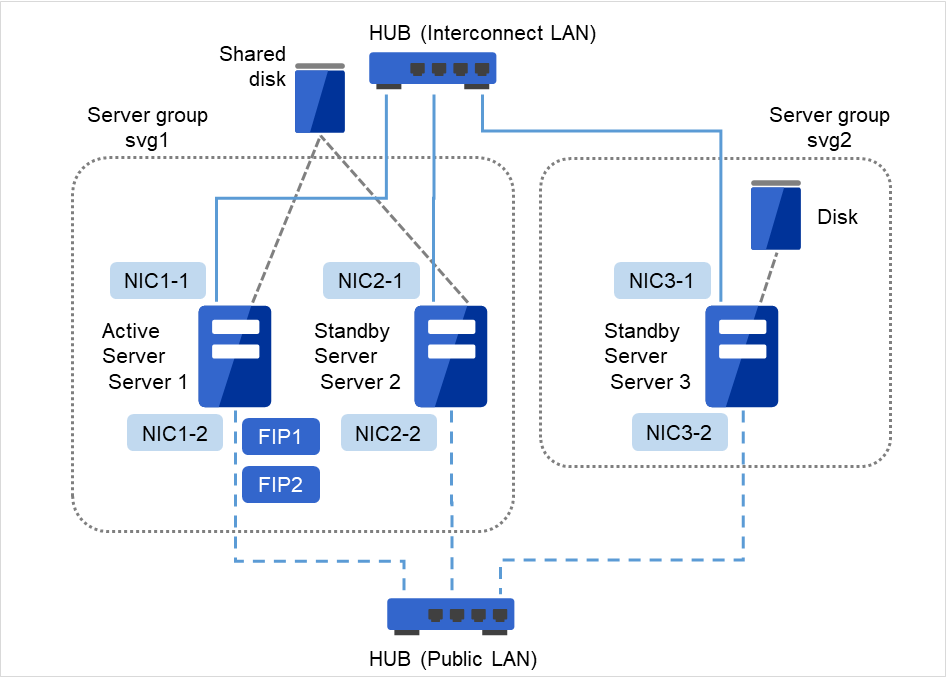

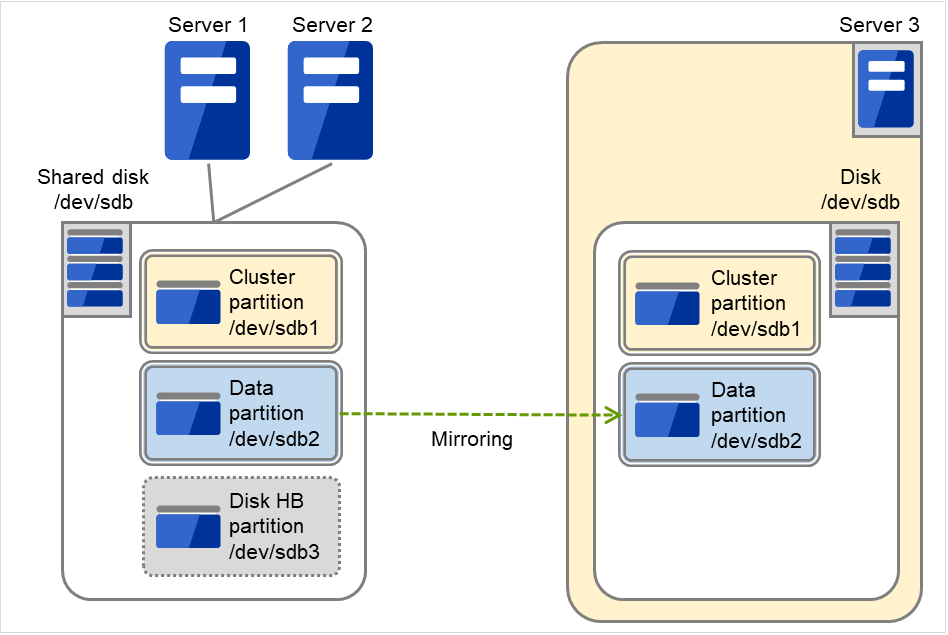

3.5.5. Hardware configuration of the hybrid disk type cluster¶

The hardware configuration of the hybrid disk in EXPRESSCLUSTER is described below.

Unlike the shared disk type, a network to copy the data is necessary. In general, NIC for internal communication in EXPRESSCLUSTER is used to meet this purpose.

Disks do not depend on a connection interface (IDE or SCSI).

Sample cluster environment with the hybrid disk used (When a shared disk is used by two servers and the data is mirrored to the normal disk of the third server)

Fig. 3.19 Example of cluster configuration (Hybrid disk type)¶

| Parameter | Value |
|---|---|
| FIP1 | 10.0.0.11 (Access destination from the Cluster WebUI client) |
| FIP2 | 10.0.0.12 (Access destination from the operation client) |
| NIC1-1 | 192.168.0.1 |
| NIC1-2 | 10.0.0.1 |
| NIC2-1 | 192.168.0.2 |
| NIC2-2 | 10.0.0.2 |
| NIC3-1 | 192.168.0.3 |
| NIC3-2 | 10.0.0.3 |

Shared disk

| Parameter | Value |
|---|---|
| Hybrid device | /dev/NMP1 |
| Mount point | /mnt/hd1 |
| File system | ext3 |
| Device for cluster partitions | /dev/sdb1 |
| Hybrid resource disk device | /dev/sdb2 |
| Disk heartbeat device name | /dev/sdb3 |
| Raw device name | /dev/raw/raw1 |

Disk for hybrid resource

| Parameter | Value |
|---|---|
| Hybrid device | /dev/NMP1 |
| Mount point | /mnt/hd1 |
| File system | ext3 |
| Device for cluster partitions | /dev/sdb1 |
| Hybrid resource disk device | /dev/sdb2 |

3.5.6. What is cluster object?¶

In EXPRESSCLUSTER, the various resources are managed as the following groups:

- Cluster object: Configuration unit of a cluster.

- Server object: Indicates the physical server and belongs to the cluster object.

- Server group object: Groups the servers and belongs to the cluster object.

- Heartbeat resource object: Indicates the network part of the physical server and belongs to the server object.

- Network partition resolution resource object: Indicates the network partition resolution mechanism and belongs to the server object.

- Group object: Indicates a virtual server and belongs to the cluster object.

- Group resource object: Indicates resources (network, disk) of the virtual server and belongs to the group object.

- Monitor resource object: Indicates monitoring mechanism and belongs to the cluster object.
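The ownership relations in the list above can be pictured as a small data model. The following is an illustrative sketch only: the class names, field names, and all object names such as `server1` or `failover1` are hypothetical and do not reflect how EXPRESSCLUSTER stores its configuration.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Server:
    """Server object: a physical server that belongs to the cluster."""
    name: str
    heartbeat_resources: List[str] = field(default_factory=list)
    np_resolution_resources: List[str] = field(default_factory=list)


@dataclass
class Group:
    """Group object: a virtual server that belongs to the cluster."""
    name: str
    group_resources: List[str] = field(default_factory=list)


@dataclass
class Cluster:
    """Cluster object: the configuration unit that owns the other objects."""
    name: str
    servers: List[Server] = field(default_factory=list)
    # Server group name -> names of the member servers
    server_groups: Dict[str, List[str]] = field(default_factory=dict)
    groups: List[Group] = field(default_factory=list)
    monitor_resources: List[str] = field(default_factory=list)


# Hypothetical two-node cluster with one failover group.
cluster = Cluster(
    name="cluster",
    servers=[
        Server("server1", ["lankhb1"], ["pingnp1"]),
        Server("server2", ["lankhb1"], ["pingnp1"]),
    ],
    server_groups={"svg1": ["server1", "server2"]},
    groups=[Group("failover1", ["fip1", "md1", "exec1"])],
    monitor_resources=["fipw1", "mdw1", "userw"],
)

for group in cluster.groups:
    print(group.name, "->", ", ".join(group.group_resources))
```

Heartbeat and network partition resolution resources hang off each server, group resources hang off a group, and monitor resources hang directly off the cluster, which mirrors the "belongs to" wording in the list.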

3.6. What is a resource?¶

In EXPRESSCLUSTER, a group used for monitoring a target is called a "resource." There are four types of resources, and each type is managed separately. Having resources makes it clearer what is monitoring and what is being monitored. It also makes it easier to build a cluster and to handle errors. Resources are divided into heartbeat resources, network partition resolution resources, group resources, and monitor resources.

3.6.1. Heartbeat resources¶

Heartbeat resources are used between servers to verify whether the other server is working properly. The following heartbeat resources are currently supported:

- LAN heartbeat resource: Uses Ethernet for communication.

- Kernel mode LAN heartbeat resource: Uses Ethernet for communication.

- Disk heartbeat resource: Uses a specific partition (cluster partition for disk heartbeat) on the shared disk for communication. It can be used only on a shared disk configuration.

- Witness heartbeat resource: Uses the external server running the Witness server service to show the status (of communication with each server) obtained from the external server.
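The general idea behind a heartbeat, independent of the transport listed above, is that each server records when it last heard from its peers and treats a peer as failed once a timeout has elapsed. The following is a generic sketch of that idea, not the behavior of any specific EXPRESSCLUSTER heartbeat resource: the class name, the method names, and the 90-second timeout are assumptions for illustration.

```python
import time
from typing import Dict, Optional


class HeartbeatTracker:
    """Tracks the last heartbeat time per peer server (hypothetical helper)."""

    def __init__(self, timeout_sec: float) -> None:
        self.timeout_sec = timeout_sec
        self.last_seen: Dict[str, float] = {}

    def record(self, server: str, now: Optional[float] = None) -> None:
        """Record that a heartbeat arrived from `server`."""
        self.last_seen[server] = time.monotonic() if now is None else now

    def is_alive(self, server: str, now: Optional[float] = None) -> bool:
        """Return True if `server` sent a heartbeat within the timeout."""
        now = time.monotonic() if now is None else now
        seen = self.last_seen.get(server)
        return seen is not None and (now - seen) <= self.timeout_sec


# Assumed timeout value; explicit timestamps keep the example deterministic.
tracker = HeartbeatTracker(timeout_sec=90)
tracker.record("server2", now=0.0)
print(tracker.is_alive("server2", now=30.0))   # within the timeout
print(tracker.is_alive("server2", now=100.0))  # timeout exceeded
```

Using more than one heartbeat path (for example LAN plus disk) reduces the chance that a single broken path is mistaken for a server failure.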

3.6.2. Network partition resolution resources¶

The following resources are used to resolve a network partition:

- PING network partition resolution resource: This is a network partition resolution resource by the PING method.

- HTTP network partition resolution resource: This is a network partition resolution resource by the HTTP method.

3.6.3. Group resources¶

A group resource constitutes a unit when a failover occurs. The following group resources are currently supported:

- Floating IP resource (fip): Provides a virtual IP address. A client can access the virtual IP address in the same way as a regular IP address.

- EXEC resource (exec): Provides a mechanism for starting and stopping applications such as DB and httpd.

- Disk resource (disk): Provides a specified partition on the shared disk. It can be used only on a shared disk configuration.

- Mirror disk resource (md): Provides a specified partition on the mirror disk. It can be used only on a mirror disk configuration.

- Hybrid disk resource (hd): Provides a specified partition on a shared disk or a disk. It can be used only for hybrid configuration.

- Volume manager resource (volmgr): Handles multiple storage devices and disks as a single logical disk.

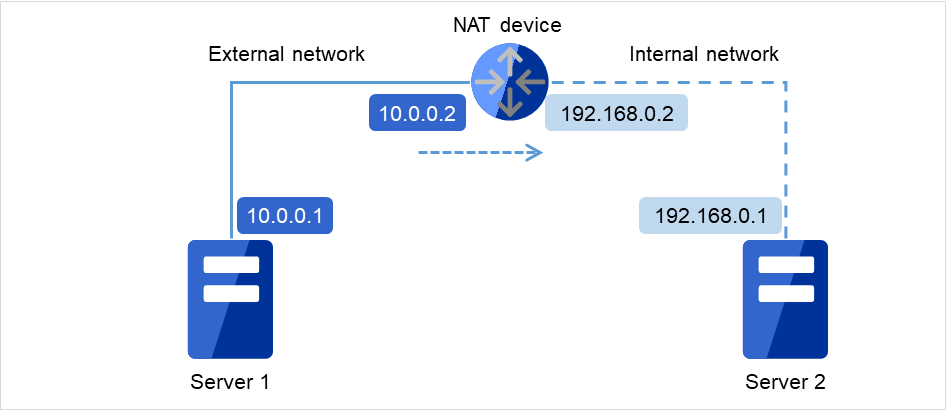

- Virtual IP resource (vip): Provides a virtual IP address. This can be accessed from a client in the same way as a general IP address. This can be used in the remote cluster configuration among different network addresses.

- Dynamic DNS resource (ddns): Registers the virtual host name and the IP address of the active server to the dynamic DNS server.

- AWS elastic ip resource (awseip): Provides a system for giving an elastic IP (referred to as EIP) when EXPRESSCLUSTER is used on AWS.

- AWS virtual ip resource (awsvip): Provides a system for giving a virtual IP (referred to as VIP) when EXPRESSCLUSTER is used on AWS.

- AWS secondary ip resource (awssip): Provides a system for giving a secondary IP when EXPRESSCLUSTER is used on AWS.

- AWS DNS resource (awsdns): Registers the virtual host name and the IP address of the active server to Amazon Route 53 when EXPRESSCLUSTER is used on AWS.

- Azure probe port resource (azurepp): Provides a system for opening a specific port on a node on which the operation is performed when EXPRESSCLUSTER is used on Microsoft Azure.

- Azure DNS resource (azuredns): Registers the virtual host name and the IP address of the active server to Azure DNS when EXPRESSCLUSTER is used on Microsoft Azure.

- Google Cloud virtual IP resource (gcvip): Provides a system for opening a specific port on a node on which the operation is performed when EXPRESSCLUSTER is used on Google Cloud Platform.

- Google Cloud DNS resource (gcdns): Registers the virtual host name and the IP address of the active server to Cloud DNS when EXPRESSCLUSTER is used on Google Cloud Platform.

- Oracle Cloud virtual IP resource (ocvip): Provides a system for opening a specific port on a node on which the operation is performed when EXPRESSCLUSTER is used on Oracle Cloud Infrastructure.

3.6.4. Monitor resources¶

A monitor resource monitors a cluster system. The following monitor resources are currently supported:

- Floating IP monitor resource (fipw): Provides a monitoring mechanism of an IP address started up by a floating IP resource.

- IP monitor resource (ipw): Provides a monitoring mechanism of an external IP address.

- Disk monitor resource (diskw): Provides a monitoring mechanism of the disk. It also monitors the shared disk.

- Mirror disk monitor resource (mdw): Provides a monitoring mechanism of the mirroring disks.

- Mirror disk connect monitor resource (mdnw): Provides a monitoring mechanism of the mirror disk connect.

- Hybrid disk monitor resource (hdw): Provides a monitoring mechanism of the hybrid disk.

- Hybrid disk connect monitor resource (hdnw): Provides a monitoring mechanism of the hybrid disk connect.

- PID monitor resource (pidw): Provides a monitoring mechanism to check whether a process started up by exec resource is active or not.

- User mode monitor resource (userw): Provides a monitoring mechanism for a stalling problem in the user space.

- NIC Link Up/Down monitor resource (miiw): Provides a monitoring mechanism for link status of LAN cable.

- Volume manager monitor resource (volmgrw): Provides a monitoring mechanism for multiple storage devices and disks.

- Multi target monitor resource (mtw): Provides a status with multiple monitor resources.

- Virtual IP monitor resource (vipw): Provides a mechanism for sending RIP packets of a virtual IP resource.

- ARP monitor resource (arpw): Provides a mechanism for sending ARP packets of a floating IP resource or a virtual IP resource.

- Custom monitor resource (genw): Provides a monitoring mechanism to monitor the system by the operation result of commands or scripts which perform monitoring, if any.

- Message receive monitor resource (mrw): Specifies the action to take when an error message is received and how the message is displayed on the Cluster WebUI.

- Dynamic DNS monitor resource (ddnsw): Periodically registers the virtual host name and the IP address of the active server to the dynamic DNS server.

- Process name monitor resource (psw): Provides a monitoring mechanism for checking whether a process specified by a process name is active.

- DB2 monitor resource (db2w): Provides a monitoring mechanism for IBM DB2 database.

- FTP monitor resource (ftpw): Provides a monitoring mechanism for FTP server.

- HTTP monitor resource (httpw): Provides a monitoring mechanism for HTTP server.

- IMAP4 monitor resource (imap4w): Provides a monitoring mechanism for IMAP4 server.

- MySQL monitor resource (mysqlw): Provides a monitoring mechanism for MySQL database.

- NFS monitor resource (nfsw): Provides a monitoring mechanism for NFS file server.

- Oracle monitor resource (oraclew): Provides a monitoring mechanism for Oracle database.

- Oracle Clusterware Synchronization Management monitor resource (osmw): Provides a monitoring mechanism for Oracle Clusterware process linked with EXPRESSCLUSTER.

- POP3 monitor resource (pop3w): Provides a monitoring mechanism for POP3 server.

- PostgreSQL monitor resource (psqlw): Provides a monitoring mechanism for PostgreSQL database.

- Samba monitor resource (sambaw): Provides a monitoring mechanism for Samba file server.

- SMTP monitor resource (smtpw): Provides a monitoring mechanism for SMTP server.

- Tuxedo monitor resource (tuxw): Provides a monitoring mechanism for Tuxedo application server.

- WebSphere monitor resource (wasw): Provides a monitoring mechanism for WebSphere application server.

- WebLogic monitor resource (wlsw): Provides a monitoring mechanism for WebLogic application server.

- WebOTX monitor resource (otxsw): Provides a monitoring mechanism for WebOTX application server.

- JVM monitor resource (jraw): Provides a monitoring mechanism for Java VM.

- System monitor resource (sraw): Provides a monitoring mechanism for the resources of the whole system.

- Process resource monitor resource (psrw): Provides a monitoring mechanism for running processes on the server.

- AWS Elastic IP monitor resource (awseipw): Provides a monitoring mechanism for the elastic ip given by the AWS elastic ip (referred to as EIP) resource.

- AWS Virtual IP monitor resource (awsvipw): Provides a monitoring mechanism for the virtual ip given by the AWS virtual ip (referred to as VIP) resource.

- AWS Secondary IP monitor resource (awssipw): Provides a monitoring mechanism for the secondary ip given by the AWS secondary ip resource.

- AWS AZ monitor resource (awsazw): Provides a monitoring mechanism for an Availability Zone (referred to as AZ).

- AWS DNS monitor resource (awsdnsw): Provides a monitoring mechanism for the virtual host name and IP address provided by the AWS DNS resource.

- Azure probe port monitor resource (azureppw): Provides a monitoring mechanism for probe port for the node where an Azure probe port resource has been activated.

- Azure load balance monitor resource (azurelbw): Provides a mechanism for monitoring whether the port number that is same as the probe port is open for the node where an Azure probe port resource has not been activated.

- Azure DNS monitor resource (azurednsw): Provides a monitoring mechanism for the virtual host name and IP address provided by the Azure DNS resource.

- Google Cloud virtual IP monitor resource (gcvipw): Provides a mechanism for monitoring the alive-monitoring port for the node where a Google Cloud virtual IP resource has been activated.

- Google Cloud load balance monitor resource (gclbw): Provides a mechanism for monitoring whether the same port number as the health-check port number has already been used, for the node where a Google Cloud virtual IP resource has not been activated.

- Google Cloud DNS monitor resource (gcdnsw): Provides a monitoring mechanism for the virtual host name and IP address provided by the Google Cloud DNS resource.

- Oracle Cloud virtual IP monitor resource (ocvipw): Provides a mechanism for monitoring the alive-monitoring port for the node where an Oracle Cloud virtual IP resource has been activated.

- Oracle Cloud load balance monitor resource (oclbw): Provides a mechanism for monitoring whether the same port number as the health-check port number has already been used, for the node where an Oracle Cloud virtual IP resource has not been activated.
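The custom monitor resource (genw) in the list above judges the system by the result of a command or script. The following is a minimal sketch of that style of check, under the assumption that exit code 0 means "normal" and anything else (including a timeout or a missing command) means "error"; the function name and the 30-second default are hypothetical, and the actual judgment rules are described in the "Reference Guide".

```python
import subprocess
import sys
from typing import Sequence


def run_check(command: Sequence[str], timeout_sec: float = 30) -> bool:
    """Run a monitoring command and report whether it finished normally.

    Assumption for this sketch: exit code 0 is normal, everything else is an error.
    """
    try:
        result = subprocess.run(
            list(command),
            stdout=subprocess.DEVNULL,
            stderr=subprocess.DEVNULL,
            timeout=timeout_sec,
        )
    except (subprocess.TimeoutExpired, OSError):
        # A hung command or a command that cannot be started counts as an error.
        return False
    return result.returncode == 0


# Stand-in monitoring commands: the Python interpreter exiting with 0 and with 1.
healthy = run_check([sys.executable, "-c", "raise SystemExit(0)"])
failing = run_check([sys.executable, "-c", "raise SystemExit(1)"])
print(healthy, failing)
```

Keeping the check itself in an external command lets the same monitoring mechanism cover applications that have no dedicated monitor resource.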

3.7. Getting started with EXPRESSCLUSTER¶

Refer to the following guides when building a cluster system with EXPRESSCLUSTER:

3.7.1. Latest information¶

Refer to "4. Installation requirements for EXPRESSCLUSTER", "5. Latest version information", "6. Notes and Restrictions", and "7. Upgrading EXPRESSCLUSTER" in this guide.

3.7.2. Designing a cluster system¶

Refer to "Determining a system configuration" and "Configuring a cluster system" in the "Installation and Configuration Guide"; "Group resource details", "Monitor resource details", "Heartbeat resources details", "Network partition resolution resources details", and "Information on other settings" in the "Reference Guide"; and the "Hardware Feature Guide".

3.7.3. Configuring a cluster system¶

Refer to the "Installation and Configuration Guide".

3.7.4. Troubleshooting the problem¶

Refer to "The system maintenance information" in the "Maintenance Guide", and "Troubleshooting" and "Error messages" in the "Reference Guide".

4. Installation requirements for EXPRESSCLUSTER¶

This chapter provides information on system requirements for EXPRESSCLUSTER.


4.1. Hardware¶

EXPRESSCLUSTER operates on the following server architectures:

x86_64

IBM POWER (Replicator, Replicator DR, Agents except Database Agent are not supported)

IBM POWER LE (Replicator, Replicator DR and Agents are not supported)

4.1.1. General server requirements¶

Required specifications for EXPRESSCLUSTER Server are the following:

RS-232C port: 1 port (not necessary when configuring a cluster with 3 or more nodes)

Ethernet port: 2 or more ports

Shared disk

Mirror disk or empty partition for mirror

DVD-ROM drive

4.2. Software¶

4.2.1. System requirements for EXPRESSCLUSTER Server¶

4.2.2. Supported distributions and kernel versions¶

The environment where EXPRESSCLUSTER Server can operate depends on kernel module versions because there are kernel modules unique to EXPRESSCLUSTER.

There are the following driver modules unique to EXPRESSCLUSTER.

| Driver module unique to EXPRESSCLUSTER | Description |
|---|---|
| Kernel mode LAN heartbeat driver | Used with kernel mode LAN heartbeat resources. |
| Keepalive driver | Used if keepalive is selected as the monitoring method for user-mode monitor resources. Used if keepalive is selected as the monitoring method for shutdown monitoring. |
| Mirror driver | Used with mirror disk resources. |

Kernel versions that have been verified are listed below.

For the latest information, see the following website:

Note

For the kernel versions of CentOS supported by EXPRESSCLUSTER, see the supported kernel versions of Red Hat Enterprise Linux.

4.2.3. Applications supported by monitoring options¶

Version information of the applications to be monitored by monitor resources is described below.

x86_64

| Monitor resource | Monitored application | EXPRESSCLUSTER version | Remarks |
|---|---|---|---|
| Oracle monitor | Oracle Database 19c (19.3) | 5.0.0-1 or later | |
| DB2 monitor | DB2 V11.5 | 5.0.0-1 or later | |
| PostgreSQL monitor | PostgreSQL 14.1 | 5.0.0-1 or later | |
| | PowerGres on Linux 13.5 | 5.0.0-1 or later | |
| MySQL monitor | MySQL 8.0 | 5.0.0-1 or later | |
| | MariaDB 10.5 | 5.0.0-1 or later | |
| SQL Server monitor | SQL Server 2019 | 5.0.0-1 or later | |
| Samba monitor | Samba 3.3 | 4.0.0-1 or later | |
| | Samba 3.6 | 4.0.0-1 or later | |
| | Samba 4.0 | 4.0.0-1 or later | |
| | Samba 4.1 | 4.0.0-1 or later | |
| | Samba 4.2 | 4.0.0-1 or later | |
| | Samba 4.4 | 4.0.0-1 or later | |
| | Samba 4.6 | 4.0.0-1 or later | |
| | Samba 4.7 | 4.1.0-1 or later | |
| | Samba 4.8 | 4.1.0-1 or later | |
| | Samba 4.13 | 4.3.0-1 or later | |
| NFS monitor | nfsd 2 (udp) | 4.0.0-1 or later | |
| | nfsd 3 (udp) | 4.0.0-1 or later | |
| | nfsd 4 (tcp) | 4.0.0-1 or later | |
| | mountd 1 (tcp) | 4.0.0-1 or later | |
| | mountd 2 (tcp) | 4.0.0-1 or later | |
| | mountd 3 (tcp) | 4.0.0-1 or later | |
| HTTP monitor | No specified version | 4.0.0-1 or later | |
| SMTP monitor | No specified version | 4.0.0-1 or later | |
| POP3 monitor | No specified version | 4.0.0-1 or later | |
| imap4 monitor | No specified version | 4.0.0-1 or later | |
| ftp monitor | No specified version | 4.0.0-1 or later | |
| Tuxedo monitor | Tuxedo 12c Release 2 (12.1.3) | 4.0.0-1 or later | |
| WebLogic monitor | WebLogic Server 11g R1 | 4.0.0-1 or later | |
| | WebLogic Server 11g R2 | 4.0.0-1 or later | |
| | WebLogic Server 12c R2 (12.2.1) | 4.0.0-1 or later | |
| | WebLogic Server 14c (14.1.1) | 4.2.0-1 or later | |
| WebSphere monitor | WebSphere Application Server 8.5 | 4.0.0-1 or later | |
| | WebSphere Application Server 8.5.5 | 4.0.0-1 or later | |
| | WebSphere Application Server 9.0 | 4.0.0-1 or later | |
| WebOTX monitor | WebOTX Application Server V9.1 | 4.0.0-1 or later | |
| | WebOTX Application Server V9.2 | 4.0.0-1 or later | |
| | WebOTX Application Server V9.3 | 4.0.0-1 or later | |
| | WebOTX Application Server V9.4 | 4.0.0-1 or later | |
| | WebOTX Application Server V10.1 | 4.0.0-1 or later | |
| | WebOTX Application Server V10.3 | 4.3.0-1 or later | |
| JVM monitor | WebLogic Server 11g R1 | 4.0.0-1 or later | |
| | WebLogic Server 11g R2 | 4.0.0-1 or later | |
| | WebLogic Server 12c | 4.0.0-1 or later | |
| | WebLogic Server 12c R2 (12.2.1) | 4.0.0-1 or later | |
| | WebLogic Server 14c (14.1.1) | 4.2.0-1 or later | |
| | WebOTX Application Server V9.1 | 4.0.0-1 or later | |
| | WebOTX Application Server V9.2 | 4.0.0-1 or later | WebOTX update is required to monitor process groups |
| | WebOTX Application Server V9.3 | 4.0.0-1 or later | |
| | WebOTX Application Server V9.4 | 4.0.0-1 or later | |
| | WebOTX Application Server V10.1 | 4.0.0-1 or later | |
| | WebOTX Application Server V10.3 | 4.3.0-1 or later | |
| | WebOTX Enterprise Service Bus V8.4 | 4.0.0-1 or later | |
| | WebOTX Enterprise Service Bus V8.5 | 4.0.0-1 or later | |
| | WebOTX Enterprise Service Bus V10.3 | 4.3.0-1 or later | |
| | JBoss Enterprise Application Platform 7.0 | 4.0.0-1 or later | |
| | JBoss Enterprise Application Platform 7.3 | 4.3.2-1 or later | |
| | Apache Tomcat 8.0 | 4.0.0-1 or later | |
| | Apache Tomcat 8.5 | 4.0.0-1 or later | |
| | Apache Tomcat 9.0 | 4.0.0-1 or later | |
| | WebSAM SVF for PDF 9.0 | 4.0.0-1 or later | |
| | WebSAM SVF for PDF 9.1 | 4.0.0-1 or later | |
| | WebSAM SVF for PDF 9.2 | 4.0.0-1 or later | |
| | WebSAM Report Director Enterprise 9.0 | 4.0.0-1 or later | |
| | WebSAM Report Director Enterprise 9.1 | 4.0.0-1 or later | |
| | WebSAM Report Director Enterprise 9.2 | 4.0.0-1 or later | |
| | WebSAM Universal Connect/X 9.0 | 4.0.0-1 or later | |
| | WebSAM Universal Connect/X 9.1 | 4.0.0-1 or later | |
| | WebSAM Universal Connect/X 9.2 | 4.0.0-1 or later | |
| System monitor | No specified version | 4.0.0-1 or later | |
| Process resource monitor | No specified version | 4.1.0-1 or later | |

Note

To use monitoring options in x86_64 environments, the applications to be monitored must be the x86_64 versions.

IBM POWER

| Monitor resource | Monitored application | EXPRESSCLUSTER version | Remarks |
|---|---|---|---|
| DB2 monitor | DB2 V10.5 | 4.0.0-1 or later | |
| PostgreSQL monitor | PostgreSQL 9.3 | 4.0.0-1 or later | |
| | PostgreSQL 9.4 | 4.0.0-1 or later | |
| | PostgreSQL 9.5 | 4.0.0-1 or later | |
| | PostgreSQL 9.6 | 4.0.0-1 or later | |
| | PostgreSQL 10 | 4.0.0-1 or later | |
| | PostgreSQL 11 | 4.1.0-1 or later | |

Note

To use monitoring options in IBM POWER environments, the applications to be monitored must be the IBM POWER versions.

4.2.4. Operation environment for JVM monitor¶

The use of the JVM monitor requires a Java runtime environment. Also, monitoring a domain mode of JBoss Enterprise Application Platform requires Java(TM) SE Development Kit.

| Software | Version |
|---|---|
| Java(TM) Runtime Environment | Version 7.0 Update 6 (1.7.0_6) or later |
| Java(TM) SE Development Kit | Version 7.0 Update 1 (1.7.0_1) or later |
| Java(TM) Runtime Environment | Version 8.0 Update 11 (1.8.0_11) or later |
| Java(TM) SE Development Kit | Version 8.0 Update 11 (1.8.0_11) or later |
| Java(TM) Runtime Environment | Version 9.0 (9.0.1) or later |
| Java(TM) SE Development Kit | Version 9.0 (9.0.1) or later |
| Java(TM) SE Development Kit | Version 11.0 (11.0.5) or later |
| Open JDK | Version 7.0 Update 45 (1.7.0_45) or later, Version 8.0 (1.8.0) or later, Version 9.0 (9.0.1) or later |

4.2.5. Operation environment for AWS elastic ip resource, AWS virtual ip resource, AWS Elastic IP monitor resource, AWS virtual IP monitor resource, AWS AZ monitor resource¶

Using the AWS elastic ip resource, AWS virtual ip resource, AWS Elastic IP monitor resource, AWS virtual IP monitor resource, or AWS AZ monitor resource requires the following software.

| Software | Version | Remarks |
|---|---|---|
| AWS CLI | 1.6.0 or later, 2.0.0 or later | |
| Python | 2.6.5 or later, 2.7.5 or later, 3.5.2 or later, 3.6.8 or later, 3.8.1 or later, 3.8.3 or later | Python accompanying the AWS CLI is not allowed. |
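Whether an installed AWS CLI satisfies the minimum listed above (1.6.0 or later) can be checked by parsing the version banner that `aws --version` prints. The following is a sketch under stated assumptions: the helper names are hypothetical, and the banner is a hard-coded sample string rather than the output of a real installation.

```python
import re
from typing import Tuple


def parse_aws_cli_version(banner: str) -> Tuple[int, int, int]:
    """Extract (major, minor, patch) from a banner such as 'aws-cli/2.15.30 ...'."""
    match = re.match(r"aws-cli/(\d+)\.(\d+)\.(\d+)", banner)
    if match is None:
        raise ValueError(f"unrecognized AWS CLI banner: {banner!r}")
    major, minor, patch = (int(part) for part in match.groups())
    return major, minor, patch


def meets_minimum(version: Tuple[int, int, int],
                  minimum: Tuple[int, int, int] = (1, 6, 0)) -> bool:
    """Compare version tuples element by element (tuple ordering does this)."""
    return version >= minimum


# Sample banner for illustration; in practice it would come from `aws --version`.
sample = "aws-cli/2.15.30 Python/3.11.8 Linux/5.14.0 exe/x86_64.rhel.9"
version = parse_aws_cli_version(sample)
print(version, meets_minimum(version))
```

Comparing integer tuples avoids the pitfall of comparing version strings, where "1.10.0" would sort before "1.6.0".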

4.2.6. Operation environment for AWS secondary ip resource, AWS secondary IP monitor resource¶

Using the AWS secondary ip resource or AWS secondary IP monitor resource requires the following software.

| Software | Version | Remarks |
|---|---|---|
| AWS CLI | 2.0.0 or later | |

4.2.7. Operation environment for AWS DNS resource, AWS DNS monitor resource¶

Using the AWS DNS resource or AWS DNS monitor resource requires the following software.

| Software | Version | Remarks |
|---|---|---|
| AWS CLI | 1.11.0 or later | |
| Python (When the OS is Red Hat Enterprise Linux 6, CentOS 6, SUSE Linux Enterprise Server 11, or Oracle Linux 6) | 2.6.6 or later, 3.6.5 or later, 3.8.1 or later | Python accompanying the AWS CLI is not allowed. |
| Python (When the OS is other than Red Hat Enterprise Linux 6, CentOS 6, SUSE Linux Enterprise Server 11, or Oracle Linux 6) | 2.7.5 or later, 3.5.2 or later, 3.6.8 or later, 3.8.1 or later, 3.8.3 or later | Python accompanying the AWS CLI is not allowed. |

4.2.8. Operation environment for AWS forced stop resource¶

The use of the AWS forced stop resource requires the following software.

| Software | Version | Remarks |
|---|---|---|
| AWS CLI | 2.0.0 or later | |

4.2.9. Operation environment for Azure probe port resource, Azure probe port monitor resource, Azure load balance monitor resource¶

The following are the Microsoft Azure deployment models with which the operation of the Azure probe port resource is verified. For details on how to set up a Load Balancer, refer to the documents from Microsoft (https://azure.microsoft.com/en-us/documentation/articles/load-balancer-arm/).

x86_64

| Deployment model | EXPRESSCLUSTER Version | Remark |
|---|---|---|
| Resource Manager | 4.0.0-1 or later | Load balancer is required |

4.2.10. Operation environment for Azure DNS resource, Azure DNS monitor resource¶

Using the Azure DNS resource or Azure DNS monitor resource requires the following software.

| Software | Version | Remarks |
|---|---|---|
| Azure CLI (When the OS is Red Hat Enterprise Linux 6, CentOS 6, Asianux Server 4, SUSE Linux Enterprise Server 11, or Oracle Linux 6) | 1.0 or later | Python is not required. |
| Azure CLI (When the OS is other than Red Hat Enterprise Linux 6, CentOS 6, Asianux Server 4, SUSE Linux Enterprise Server 11, or Oracle Linux 6) | 2.0 or later | |

The following are the Microsoft Azure deployment models with which the operation of the Azure DNS resource and the Azure DNS monitor resource is verified. For how to set up Azure DNS, refer to the "EXPRESSCLUSTER X HA Cluster Configuration Guide for Microsoft Azure (Linux)".

x86_64

| Deployment model | EXPRESSCLUSTER Version | Remark |
|---|---|---|
| Resource Manager | 4.0.0-1 or later | Azure DNS is required |

4.2.11. Operation environments for Google Cloud virtual IP resource, Google Cloud virtual IP monitor resource, and Google Cloud load balance monitor resource¶

4.2.12. Operation environments for Google Cloud DNS resource, Google Cloud DNS monitor resource¶

Using the Google Cloud DNS resource or Google Cloud DNS monitor resource requires the following software.

| Software | Version | Remarks |
|---|---|---|
| Google Cloud SDK | 295.0.0 or later | |

4.2.13. Operation environments for Oracle Cloud virtual IP resource, Oracle Cloud virtual IP monitor resource, and Oracle Cloud load balance monitor resource¶

4.2.14. Operation environment for OCI forced stop resource¶

The use of the OCI forced stop resource requires the following software.

| Software | Version | Remarks |
|---|---|---|
| OCI CLI | 3.5.3 or later | |

4.2.15. Operation environment for clpcfadm.py command¶

Using the clpcfadm.py command requires the following software:

| Software | Version | Remarks |
|---|---|---|
| Python | 3.6.8 or later | |

4.2.16. Required memory and disk size¶

Required memory size (User mode): 200MB [2]

Required memory size (Kernel mode):

- When the synchronization mode is used: 1MB + (number of request queues x I/O size) + (2MB + Difference Bitmap Size) x number of mirror disk resources and hybrid disk resources

- When the asynchronous mode is used: 1MB + (number of request queues x I/O size) + (3MB + (number of asynchronous queues x I/O size) + (I/O size / 4KB x 8B + 0.5KB) x (max size of history file / I/O size + number of asynchronous queues) + (Difference Bitmap Size)) x number of mirror disk resources and hybrid disk resources

- When the kernel mode LAN heartbeat driver is used: 8MB

- When the keepalive driver is used: 8MB

Required disk size (Right after installation): 300MB

Required disk size (During operation): 5.0GB + 1.0GB [3]

[2] Excluding optional products.

[3] Disk capacity required to use mirror disk resources and hybrid disk resources.

Note

Estimated I/O size is as follows:

2MB (RHEL8)

1MB (Ubuntu16)

124KB (Ubuntu14, RHEL7)

4KB (RHEL6)

For the setting values of the number of request queues and the number of asynchronous queues, see "Understanding Mirror disk resources" of "Group resource details" in the "Reference Guide".

For the required size of a partition for a disk heartbeat resource, see "Shared disk".

For the required size of a cluster partition, see "Mirror disk" and "Hybrid disk".
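The kernel-mode memory formulas in this section can be evaluated with a short script. The following is a sketch under stated assumptions: the function and parameter names are hypothetical, the synchronous formula is read as (2MB + Difference Bitmap Size) multiplied by the number of resources by analogy with the asynchronous formula, 1MB is taken as 1024 x 1024 bytes, and the queue counts and bitmap size in the example are placeholder values rather than product defaults.

```python
KB = 1024
MB = 1024 * KB


def kernel_memory_sync(request_queues, io_size, bitmap_size, resources):
    """Kernel-mode memory in bytes when the synchronization mode is used."""
    return 1 * MB + request_queues * io_size + (2 * MB + bitmap_size) * resources


def kernel_memory_async(request_queues, io_size, async_queues,
                        history_max, bitmap_size, resources):
    """Kernel-mode memory in bytes when the asynchronous mode is used."""
    per_resource = (
        3 * MB
        + async_queues * io_size
        # (I/O size / 4KB x 8B + 0.5KB) x (max history size / I/O size + async queues)
        + (io_size / (4 * KB) * 8 + 0.5 * KB) * (history_max / io_size + async_queues)
        + bitmap_size
    )
    return 1 * MB + request_queues * io_size + per_resource * resources


# Placeholder inputs: 124KB I/O size (the RHEL7 estimate), one mirror disk resource.
sync_bytes = kernel_memory_sync(
    request_queues=2048, io_size=124 * KB, bitmap_size=1 * MB, resources=1
)
async_bytes = kernel_memory_async(
    request_queues=2048, io_size=124 * KB, async_queues=2048,
    history_max=100 * MB, bitmap_size=1 * MB, resources=1,
)
print(f"sync:  {sync_bytes / MB:.1f} MB")
print(f"async: {async_bytes / MB:.1f} MB")
```

Because the I/O size multiplies the queue counts, the estimate grows quickly on distributions with larger I/O sizes, so it is worth recomputing when the OS version changes.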

4.3. System requirements for the Cluster WebUI¶

4.3.1. Supported operating systems and browsers¶

Refer to the website, http://www.nec.com/global/prod/expresscluster/, for the latest information. Currently the following operating systems and browsers are supported:

| Browser | Language |
|---|---|
| Internet Explorer 11 | English/Japanese/Chinese |
| Internet Explorer 10 | English/Japanese/Chinese |
| Firefox | English/Japanese/Chinese |
| Google Chrome | English/Japanese/Chinese |
| Microsoft Edge (Chromium) | English/Japanese/Chinese |

Note

When using an IP address to connect to Cluster WebUI, register the IP address in the Sites list of the Local intranet zone in advance.

Note

When Cluster WebUI is accessed with Internet Explorer 11, Internet Explorer may stop with an error. To avoid this, apply KB4052978 or later to Internet Explorer. To apply KB4052978 or later to Windows 8.1/Windows Server 2012R2, apply KB2919355 in advance. For details, see the information released by Microsoft.

Note

No mobile devices, such as tablets and smartphones, are supported.

4.3.2. Required memory and disk size

Required memory size: 500 MB or more

Required disk size: 200 MB or more

5. Latest version information

This chapter provides the latest information on EXPRESSCLUSTER.


5.1. Correspondence list of EXPRESSCLUSTER and a manual

The descriptions in this manual assume the following version of EXPRESSCLUSTER. Check how the EXPRESSCLUSTER versions correspond to the editions of the manuals.

| EXPRESSCLUSTER Internal Version | Manual | Edition | Remarks |
|---|---|---|---|
| 5.0.1-1 | Getting Started Guide | 3rd Edition | |
| 5.0.1-1 | Installation and Configuration Guide | 1st Edition | |
| 5.0.1-1 | Reference Guide | 3rd Edition | |
| 5.0.1-1 | Maintenance Guide | 1st Edition | |
| 5.0.1-1 | Hardware Feature Guide | 1st Edition | |

5.2. New features and improvements

The following features and improvements have been released.

| No. | Internal Version | Contents |
|---|---|---|
| 1 | 5.0.0-1 | The newly released kernel is now supported. |
| 2 | 5.0.0-1 | Ubuntu 20.04.3 LTS is now supported. |
| 3 | 5.0.0-1 | SUSE LINUX Enterprise Server 12 SP3 is now supported. |
| 4 | 5.0.0-1 | Along with the major upgrade, some functions have been removed. For details, refer to the list of removed functions. |
| 5 | 5.0.0-1 | Added a function to collectively suppress, across the whole cluster, automatic failover triggered by a server crash. |
| 6 | 5.0.0-1 | Added a function to write a notice to the alert log that the server restart count was reset as the final action against a detected activation or deactivation error of a group resource, or against a detected error of a monitor resource. |
| 7 | 5.0.0-1 | Added a function to exclude a server (on which a specified monitor resource has detected an error) from the failover destinations for automatic failover other than dynamic failover. |
| 8 | 5.0.0-1 | Added the clpfwctrl command for adding a firewall rule. |
| 9 | 5.0.0-1 | Added AWS secondary IP resources and AWS secondary IP monitor resources. |
| 10 | 5.0.0-1 | The forced stop function using BMC has been redesigned as a BMC forced-stop resource. |
| 11 | 5.0.0-1 | Redesigned the function for forcibly stopping virtual machines as a vCenter forced-stop resource. |
| 12 | 5.0.0-1 | The forced stop function in the AWS environment has been added to forced stop resources. |
| 13 | 5.0.0-1 | The forced stop function in the OCI environment has been added to forced stop resources. |
| 14 | 5.0.0-1 | Redesigned the forced stop script as a custom forced-stop resource. |
| 15 | 5.0.0-1 | Added a function to collectively change actions that involve an OS shutdown (such as a recovery action following an error detected by a monitor resource) into OS reboots. |
| 16 | 5.0.0-1 | Improved the alert message regarding the wait process for start/stop between groups. |
| 17 | 5.0.0-1 | The clpstat option for displaying configuration information now shows the setting value of the resource start attribute. |
| 18 | 5.0.0-1 | The clpcl and clpstdn commands now accept the -h option even when the local server belongs to a stopped cluster. |
| 19 | 5.0.0-1 | A warning message is now displayed when Cluster WebUI is connected via a non-actual IP address and is switched to config mode. |
| 20 | 5.0.0-1 | In the config mode of Cluster WebUI, a group can now be deleted while group resources are still registered in it. |
| 21 | 5.0.0-1 | Changed the content of the error message displayed when a communication timeout occurs in Cluster WebUI. |
| 22 | 5.0.0-1 | Changed the content of the error message displayed when a full copy fails on the mirror disk screen in Cluster WebUI. |
| 23 | 5.0.0-1 | Added a function to copy a group, group resource, or monitor resource registered in the config mode of Cluster WebUI. |
| 24 | 5.0.0-1 | Added a function to move a group resource registered in the config mode of Cluster WebUI to another group. |
| 25 | 5.0.0-1 | The settings can now be changed at the group resource list of [Group Properties] in the config mode of Cluster WebUI. |
| 26 | 5.0.0-1 | The settings can now be changed at the monitor resource list of [Monitor Common Properties] in the config mode of Cluster WebUI. |
| 27 | 5.0.0-1 | The dependency during group resource deactivation is now displayed in the config mode of Cluster WebUI. |
| 28 | 5.0.0-1 | Added a function to display a dependency diagram at the time of group resource activation/deactivation in the config mode of Cluster WebUI. |
| 29 | 5.0.0-1 | Added a function to filter the display by type or resource name of a group resource or monitor resource on the status screen of Cluster WebUI. |
| 30 | 5.0.0-1 | User mode monitor resources and dynamic DNS monitor resources now support the function for collecting cluster statistics information. |
| 31 | 5.0.0-1 | An intermediate certificate can now be used as a certificate file when HTTPS is used for communication in the WebManager service. |
| 32 | 5.0.0-1 | Added the clpcfconv.sh command, which converts the cluster configuration data file from the old version to the current one. |
| 33 | 5.0.0-1 | Added a function to delay the start of the cluster service at OS startup. |
| 34 | 5.0.0-1 | Increased the number of cluster configuration data items that are checked. |
| 35 | 5.0.0-1 | Cluster WebUI can now display details, such as corrective measures, for errors found when checking cluster configuration data. |
| 36 | 5.0.0-1 | The OS type can now be specified with the create option of the clpcfset command. |
| 37 | 5.0.0-1 | Added a function to delete a resource or parameter from cluster configuration data, which is enabled by adding the del option to the clpcfset command. |
| 38 | 5.0.0-1 | Added the clpcfadm.py command, which enhances the interface for the clpcfset command. |
| 39 | 5.0.0-1 | An AWS DNS resource now completes its start only after confirming that the record set has been propagated to AWS Route 53. |
| 40 | 5.0.0-1 | Changed the default value for [Wait Time to Start Monitoring] of AWS DNS monitor resources to 300 seconds. |
| 41 | 5.0.0-1 | Made monitor resources less susceptible to disk I/O delays: when a timeout occurs because the monitor process is in disk wait (D state), the status is treated as a warning instead of an error. |
| 42 | 5.0.0-1 | Multiple instances of the clpstat command can now run at the same time. |
| 43 | 5.0.0-1 | Added the Node Manager service. |
| 44 | 5.0.0-1 | Added a function for statistical information on heartbeat. |
| 45 | 5.0.0-1 | A proxy server can now be used for an HTTP NP resolution resource even when a Witness heartbeat resource is not used. |
| 46 | 5.0.0-1 | SELinux enforcing mode is now supported. |
| 47 | 5.0.0-1 | HTTP monitor resources now support digest authentication. |
| 48 | 5.0.0-1 | FTP monitor resources can now monitor FTP servers that use FTPS. |
| 49 | 5.0.0-1 | JVM monitor resources can now monitor JBoss EAP domain mode on Java 9 or later. |

5.3. Corrected information

Corrections have been made in the following minor versions.

Critical level:

- L: Operation may stop. Data destruction or mirror inconsistency may occur. Setup may not be executable.
- M: Operation stop should be planned for recovery. The system may stop if duplicated with another fault.
- S: A matter of displaying messages. Recovery can be made without stopping the system.
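Each entry in this section gives the version in which a problem was solved and the range of versions in which it occurred, for example 4.1.0-1 to 4.3.2-1. As a minimal sketch, the check for whether an installed internal version falls within such a range could look like the following. The function names are illustrative, and comparing the version fields as integers from left to right is an assumption about how internal versions are ordered.

```python
def parse_internal_version(text):
    """Convert an internal version such as '4.3.2-1' into (4, 3, 2, 1)."""
    main, _, build = text.strip().partition("-")
    fields = tuple(int(part) for part in main.split("."))
    # A missing build suffix is treated as 0 (assumption for illustration).
    return fields + (int(build) if build else 0,)


def is_affected(installed, first, last):
    """Return True if `installed` lies within the range `first` to `last`."""
    low = parse_internal_version(first)
    high = parse_internal_version(last)
    return low <= parse_internal_version(installed) <= high


if __name__ == "__main__":
    # Example: a problem listed as occurring in 4.1.0-1 to 4.3.2-1.
    print(is_affected("4.2.0-1", "4.1.0-1", "4.3.2-1"))
```

A version at or above the one in which the problem was solved, such as 5.0.0-1, lies outside the range and is reported as not affected.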

No.

|

Version in which the problem has been solved

/ Version in which the problem occurred

|

Phenomenon

|

Level

|

Occurrence condition/

Occurrence frequency

|

|---|---|---|---|---|

1 |

5.0.0-1

/ 1.0.0-1 to 4.3.2-1

|

When a single group resource in a group is successfully activated, the restoration of another group resource in that group may be executed. |

S |

This problem occurs when a single group resource is activated in a group in which another group resource has failed to activate. |

2 |

5.0.0-1

/ 4.1.0-1 to 4.3.2-1

|

In the config mode of Cluster WebUI, modifying a comment on a group resource may not be applied. |

S |

This problem occurs in the following case: A comment on a group resource is modified, the [Apply] button is clicked, the change is undone, and then the [OK] button is clicked. |

3 |

5.0.0-1

/ 4.1.0-1 to 4.3.2-1

|

In the config mode of Cluster WebUI, modifying a comment on a monitor resource may not be applied. |

S |

This problem occurs in the following case: A comment on a monitor resource is modified, the [Apply] button is clicked, the change is undone, and then the [OK] button is clicked. |

4 |

5.0.0-1

/ 4.0.0-1 to 4.3.2-1

|

In the status screen of Cluster WebUI, a communication timeout during the operation of a cluster causes a request to be repeatedly issued. |

M |

This problem always occurs when a communication timeout occurs between Cluster WebUI and a cluster server. |

5 |

5.0.0-1

/ 4.1.0-1 to 4.3.2-1

|

Cluster WebUI may freeze when a dependency is set in the config mode of Cluster WebUI. |

S |

This problem occurs when two group resources are made dependent on each other. |

6 |

5.0.0-1

/ 4.2.0-1 to 4.3.2-1

|

The response of the clpstat command may be delayed. |

S |

This problem may occur when communication with other servers is cut off. |

7 |

5.0.0-1

/ 3.1.0-1 to 4.3.2-1

|

A cluster service may not stop. |

S |

This problem occurs very rarely when an attempt is made to stop a cluster service. |

8 |

5.0.0-1

/ 4.0.0-1 to 4.3.2-1

|