1. Preface¶

1.1. Who Should Use This Guide¶

The Installation and Configuration Guide is intended for system engineers and administrators who want to build, operate, and maintain a cluster system. Instructions for designing, installing, and configuring a cluster system with EXPRESSCLUSTER are covered in this guide.

1.2. How This Guide is Organized¶

2. Determining a system configuration: Provides instructions for how to verify system requirements and determine the system configuration.

3. Configuring a cluster system: Helps you understand how to configure a cluster system.

4. Installing EXPRESSCLUSTER: Provides instructions for how to install EXPRESSCLUSTER.

5. Registering the license: Provides instructions for how to register the license.

6. Creating the cluster configuration data: Provides instructions for how to create the cluster configuration data with the Cluster WebUI.

7. Verifying a cluster system: Verify if the cluster system that you have configured operates successfully.

8. Modifying the cluster configuration data: Provides instructions for how to modify the cluster configuration data.

9. Verifying operation: Run the pseudo-failure test and adjust the parameters.

10. Preparing to operate a cluster system: Provides information on what you need to consider before actually start operating EXPRESSCLUSTER.

11. Uninstalling and reinstalling EXPRESSCLUSTER: Provides instructions for how to uninstall and reinstall EXPRESSCLUSTER.

12. Troubleshooting: Describes problems you might experience when installing or setting up EXPRESSCLUSTER X SingleServerSafe and how to resolve them.

1.3. EXPRESSCLUSTER X Documentation Set¶

The EXPRESSCLUSTER X manuals consist of the following six guides. The title and purpose of each guide is described below:

EXPRESSCLUSTER X Getting Started Guide

This guide is intended for all users. The guide covers topics such as product overview, system requirements, and known problems.

EXPRESSCLUSTER X Installation and Configuration Guide

This guide is intended for system engineers and administrators who want to build, operate, and maintain a cluster system. Instructions for designing, installing, and configuring a cluster system with EXPRESSCLUSTER are covered in this guide.

EXPRESSCLUSTER X Reference Guide

This guide is intended for system administrators. The guide covers topics such as how to operate EXPRESSCLUSTER, function of each moduleand troubleshooting. The guide is supplement to the Installation and Configuration Guide.

EXPRESSCLUSTER X Maintenance Guide

This guide is intended for administrators and for system administrators who want to build, operate, and maintain EXPRESSCLUSTER-based cluster systems. The guide describes maintenance-related topics for EXPRESSCLUSTER.

EXPRESSCLUSTER X Hardware Feature Guide

This guide is intended for administrators and for system engineers who want to build EXPRESSCLUSTER-based cluster systems. The guide describes features to work with specific hardware, serving as a supplement to the Installation and Configuration Guide.

EXPRESSCLUSTER X Legacy Feature Guide

This guide is intended for administrators and for system engineers who want to build EXPRESSCLUSTER-based cluster systems. The guide describes EXPRESSCLUSTER X 4.0 WebManager and Builder.

1.4. Conventions¶

In this guide, Note, Important, See also are used as follows:

Note

Used when the information given is important, but not related to the data loss and damage to the system and machine.

Important

Used when the information given is necessary to avoid the data loss and damage to the system and machine.

See also

Used to describe the location of the information given at the reference destination.

The following conventions are used in this guide.

Convention |

Usage |

Example |

|---|---|---|

Bold

|

Indicates graphical objects, such as fields, list boxes, menu selections, buttons, labels, icons, etc.

|

In User Name, type your name.

On the File menu, click Open Database.

|

Angled bracket within the command line |

Indicates that the value specified inside of the angled bracket can be omitted. |

clpstat -s[-h host_name] |

# |

Prompt to indicate that a Linux user has logged on as root user. |

# clpcl -s -a |

Monospace |

Indicates path names, commands, system output (message, prompt, etc.), directory, file names, functions and parameters. |

|

bold

|

Indicates the value that a user actually enters from a command line.

|

Enter the following:

# clpcl -s -a

|

italic |

Indicates that users should replace italicized part with values that they are actually working with.

|

rpm -i expresscls-<version_number> -<release_number>.x86_64.rpm |

In the figures of this guide, this icon represents EXPRESSCLUSTER.

In the figures of this guide, this icon represents EXPRESSCLUSTER.

1.5. Contacting NEC¶

For the latest product information, visit our website below:

2. Determining a system configuration¶

This chapter provides instructions for determining the cluster system configuration that uses EXPRESSCLUSTER.

This chapter covers:

2.1. Steps from configuring a cluster system to installing EXPRESSCLUSTER

2.4. Checking system requirements for each EXPRESSCLUSTER module

2.5. Example of EXPRESSCLUSTER (main module) hardware configuration

2.1. Steps from configuring a cluster system to installing EXPRESSCLUSTER¶

Before you set up a cluster system that uses EXPRESSCLUSTER, you should carefully plan the cluster system with due consideration for factors such as hardware requirements, software to be used, and the way the system is used. When you have built the cluster, check to see if the cluster system is successfully set up before you start its operation.

This guide explains how to create a cluster system with EXPRESSCLUSTER through step-by-step instructions. Read each chapter by actually executing the procedures to install the cluster system. Following is the steps to take from designing the cluster system to operating EXPRESSCLUSTER:

The following is the procedure for configuring a cluster system to run an operation test:

Prepare for installing EXPRESSCLUSTER. Determine the hardware configuration and the setting information of a cluster system to be constructed.

Step 1 "2. Determining a system configuration"

Step 2 "3. Configuring a cluster system"

Install EXPRESSCLUSTER to server machines, create a configuration data file on the Cluster WebUI by using the setting information created in Steps 1 and 2 , and construct a cluster. After that, verify that a cluster system operates normally.

Step 3 "4. Installing EXPRESSCLUSTER"

Step 4 "5. Registering the license"

Step 5 "6. Creating the cluster configuration data"

Step 6 "7. Verifying a cluster system"

Step 7 "8. Modifying the cluster configuration data"

Perform an evaluation required before starting the EXPRESSCLUSTER operation. Test the operations of a constructed cluster system, and then check what should be checked before starting the EXPRESSCLUSTER operation. On the last part of this section, how to uninstall and reinstall EXPRESSCLUSTER is described.

Step 8 "9. Verifying operation"

Step 9 "10. Preparing to operate a cluster system"

Step 10 "11. Uninstalling and reinstalling EXPRESSCLUSTER"

See also

Refer to the Reference Guide as you need when operating EXPRESSCLUSTER by following the procedures introduced in this guide. See the Getting Started Guide for installation requirements.

2.2. What is EXPRESSCLUSTER?¶

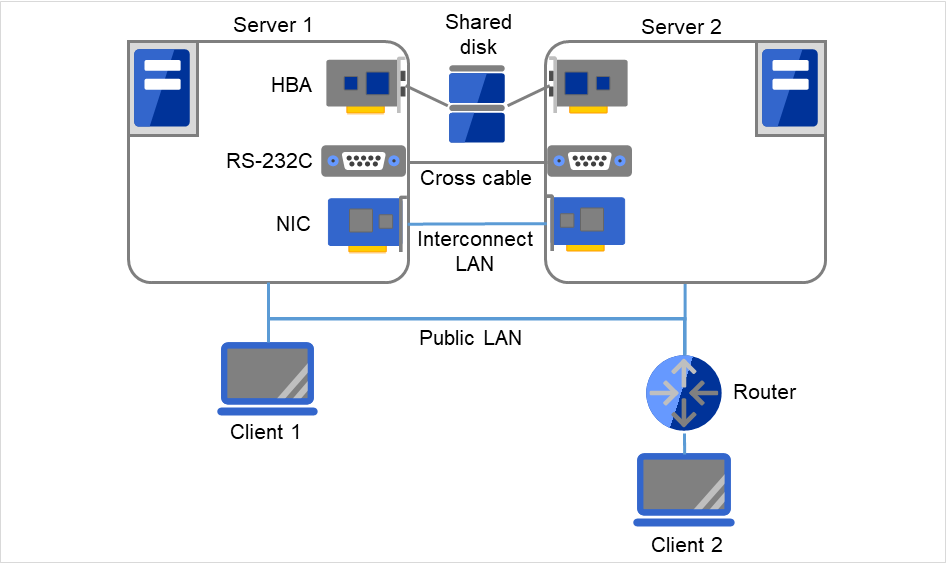

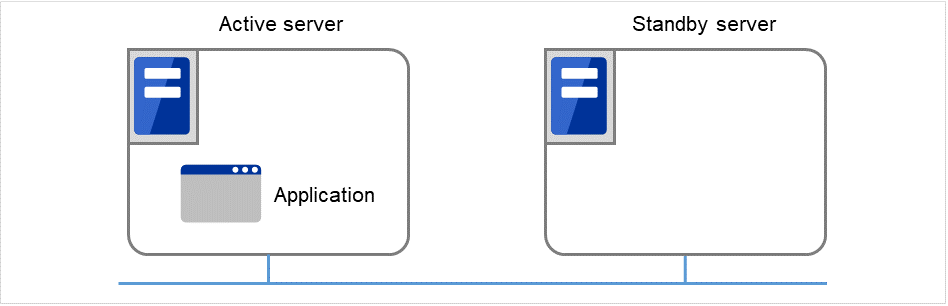

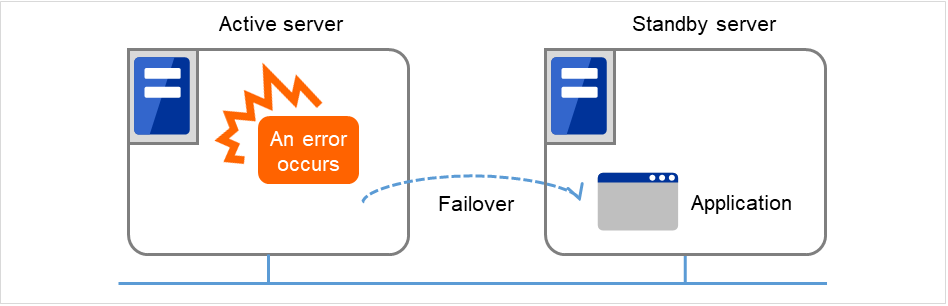

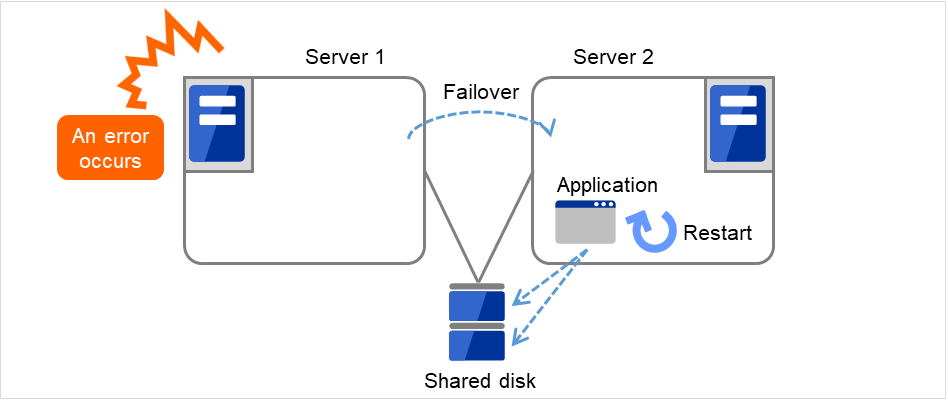

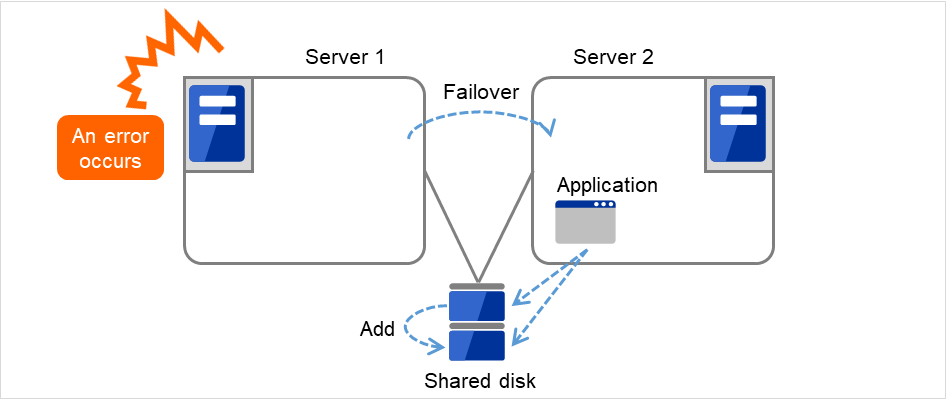

EXPRESSCLUSTER is software that enhances availability and expandability of systems by a redundant (clustered) system configuration. The application services running on the active server are automatically taken over to the standby server when an error occurs on the active server.

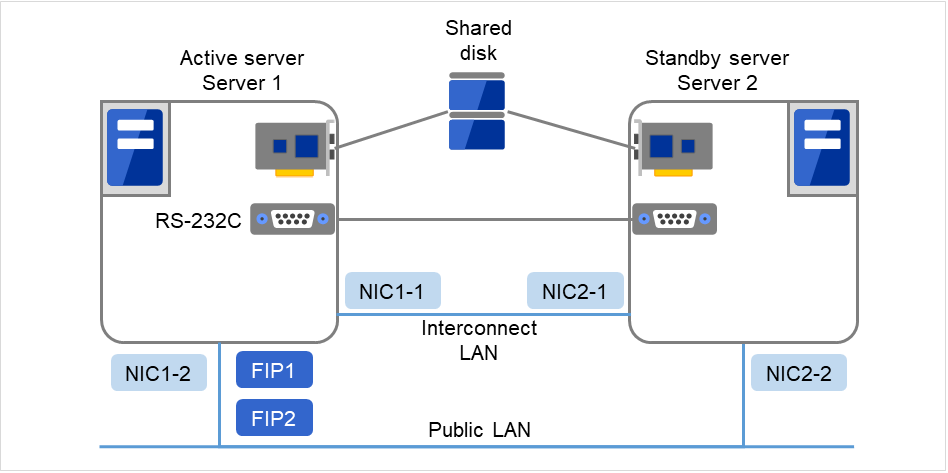

Fig. 2.1 Cluster system (in normal operation)¶

Fig. 2.2 Cluster system (when an error occurs)¶

The following can be achieved by installing a cluster system that uses EXPRESSCLUSTER.

- High availabilityThe down time is minimized by automatically failing over the applications and services to a "healthy" server when one of the servers which configure a cluster stops.

- High expandabilityAn expandable database platform can be provided by supporting a parallel database up to 32 servers.

See also

For details on EXPRESSCLUSTER, refer to "What is a cluster system?" and "Using EXPRESSCLUSTER" in the Getting Started Guide.

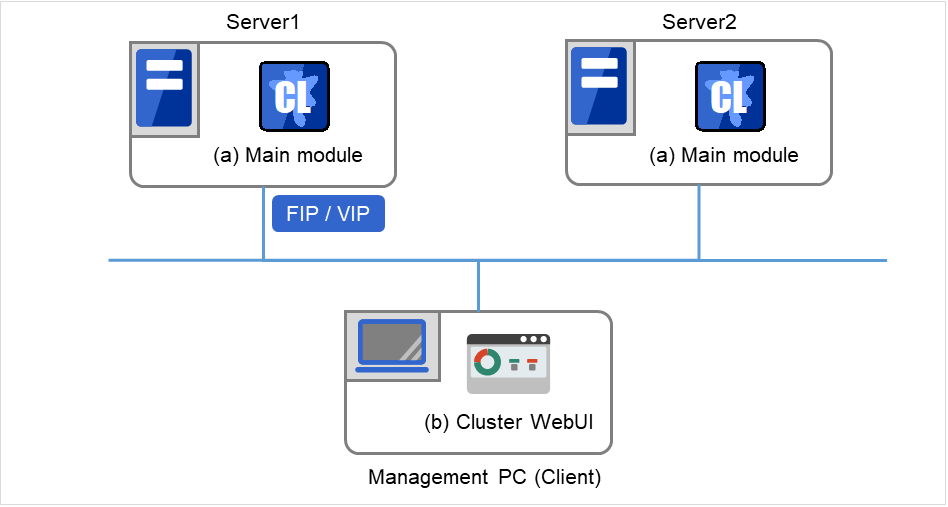

2.2.1. EXPRESSCLUSTER modules¶

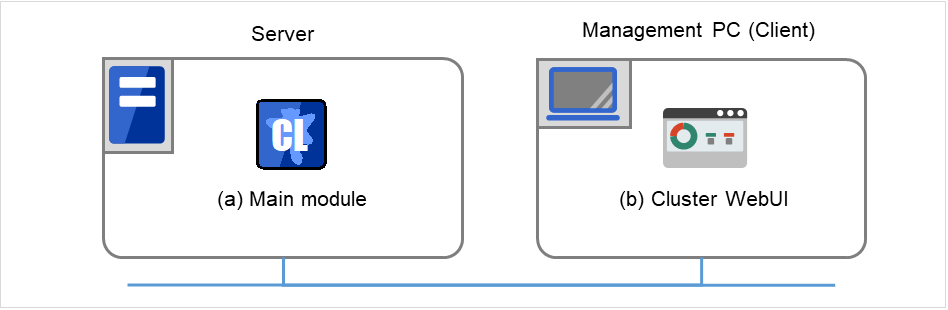

EXPRESSCLUSTER consists of following two modules:

- EXPRESSCLUSTER Server (Main module)The main module of EXPRESSCLUSTER and has all high availability functions of the server. Install this module on each server constituting the cluster.

- Cluster WebUIThis is a tool to create the configuration data of EXPRESSCLUSTER and to manage EXPRESSCLUSTER operations. It is distinguished from the EXPRESSCLUSTER Server because the Cluster WebUI is operated through a Web browser on the management PC.

Fig. 2.3 Modules constituting EXPRESSCLUSTER¶

2.3. Planning system configuration¶

You need to determine an appropriate hardware configuration to install a cluster system that uses EXPRESSCLUSTER. The configuration examples of EXPRESSCLUSTER are shown below.

See also

For latest information on system requirements, refer to the Getting Started Guide.

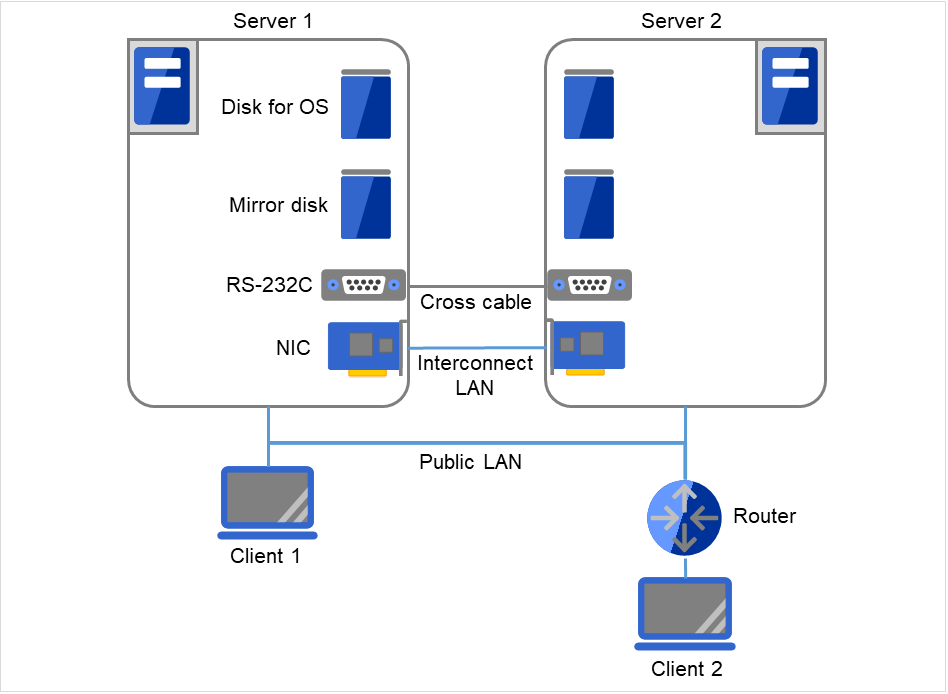

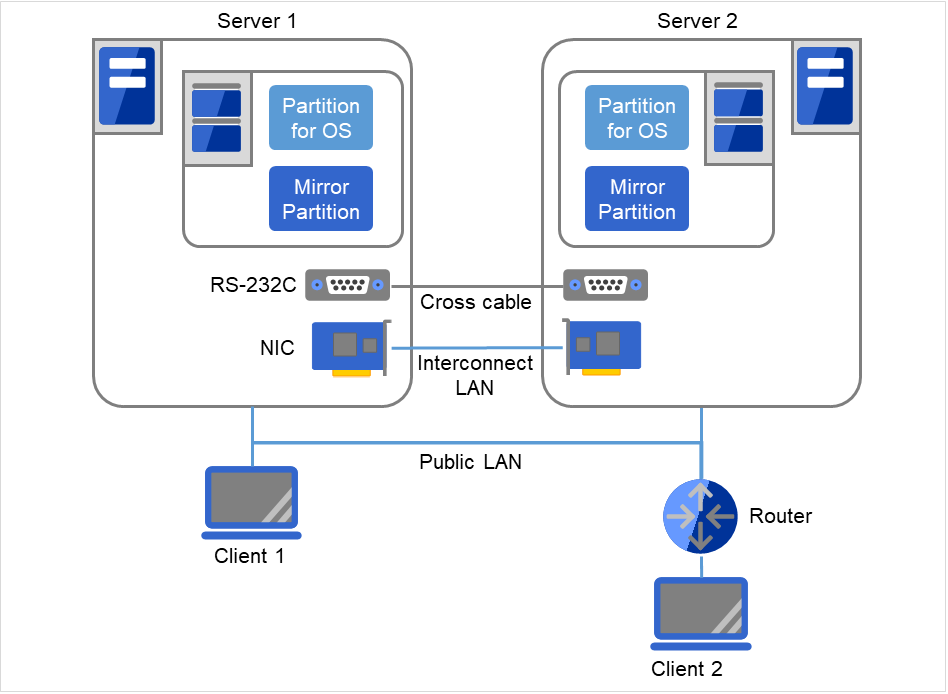

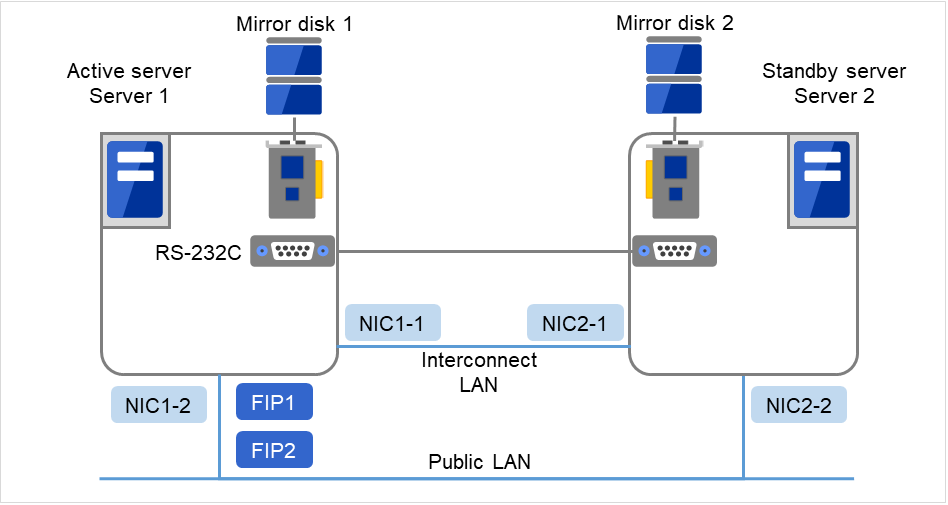

2.3.3. Example 2: configuration using mirror disks with two nodes¶

Different models can be used for servers. However, servers should have the same architecture.

Use LAN cables for interconnection. Use cross cables for the interconnection between the mirror disks (mirror disk connect). Do not connect a HUB.

Connect COM (RS-232C) ports using a cross cable.

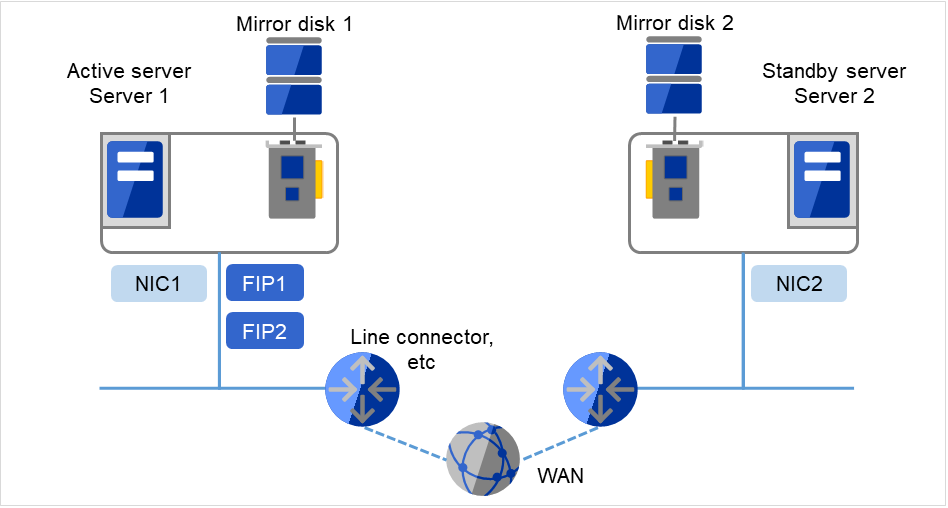

Fig. 2.5 Example of a configuration using mirror disks with two nodes¶

Client 1, which exists on the same LAN as that of the cluster servers, can access them through a floating IP address. Client 2, which exists on a remote LAN, can also access the cluster servers through a floating IP address. Using floating IP addresses does not require the router to be configured for them.

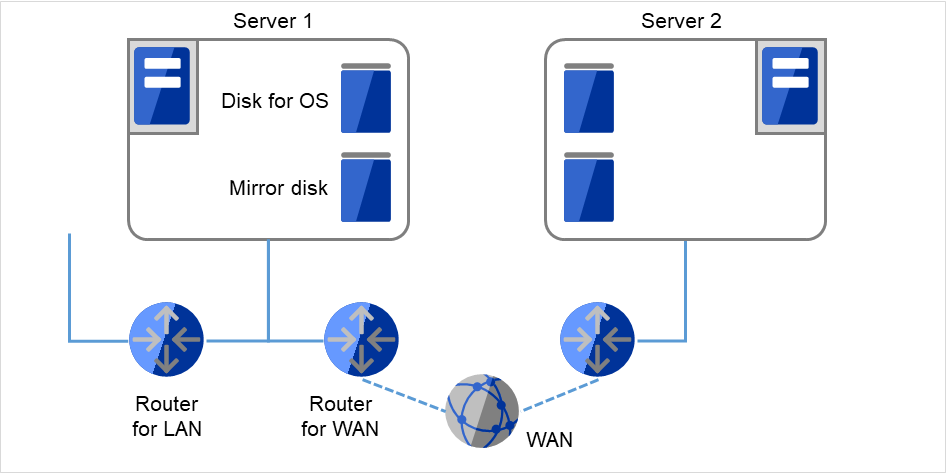

2.3.4. Example 3: configuration using mirror disks with two nodes and one LAN¶

Different models can be used for servers, but the servers must have the same architecture.

Fig. 2.6 Example of a configuration using mirror disks with two nodes and one LAN¶

WAN and LAN routers are to be monitored for the Ping NP resolution method. The WAN throughput must be enough to update data in an operation. EXPRESSCLUSTER does not support boosting line speed.

2.3.5. Example 4: configuration using mirror partitions on the disks for OS with two nodes¶

As shown below, a mirroring partition can be created on the disk used for the OS.

Fig. 2.7 Example of a configuration using mirror partitions on the disks for OSs with two nodes¶

Client 1, which exists on the same LAN as that of the cluster servers, can access them through a floating IP address. Client 2, which exists on a remote LAN, can also access the cluster servers through a floating IP address. Using floating IP addresses does not require the router to be configured for them.

See also

For mirror partition settings, refer to "Group resource details" in the Reference Guide.

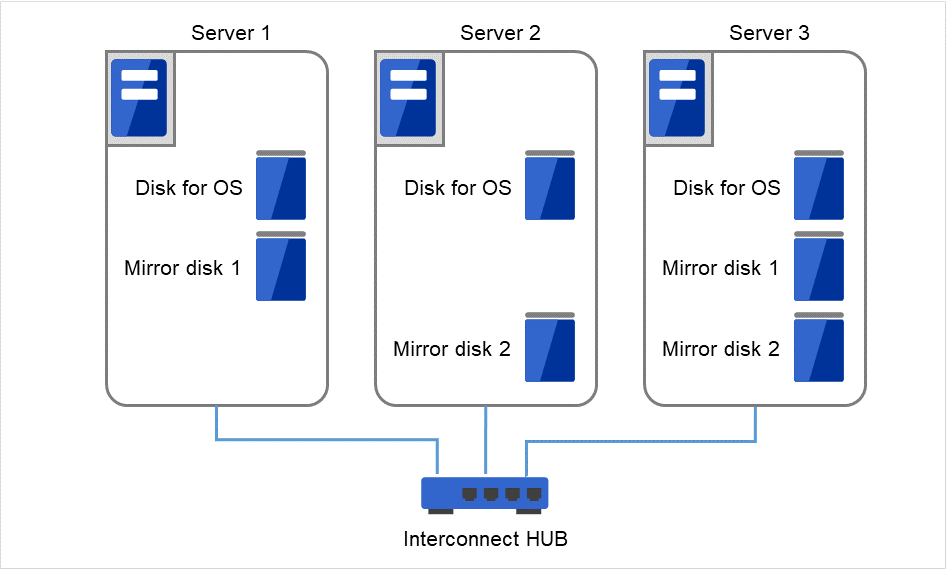

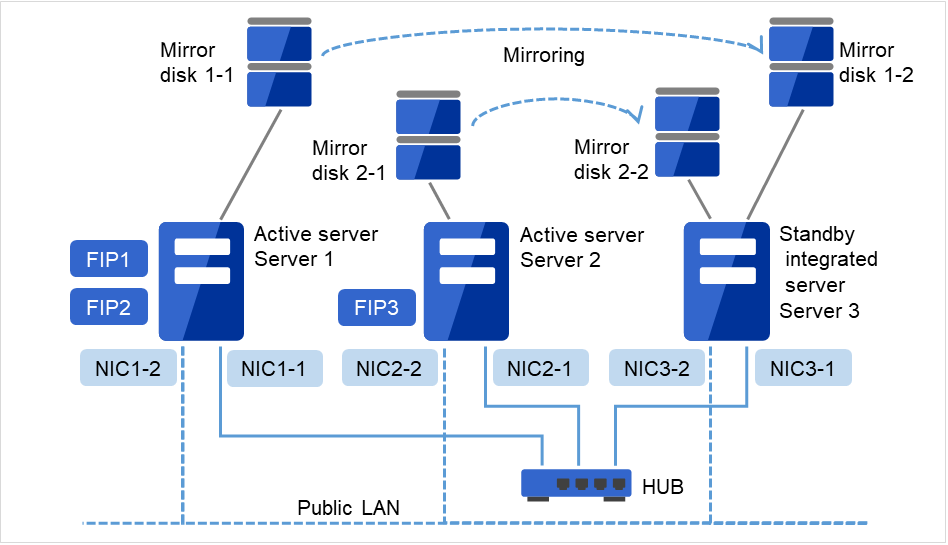

2.3.6. Example 5: configuration with three nodes¶

For three nodes configuration, prepare two mirror disks on a standby server where mirror resources are integrated (in the figure below, server3).

Install a dedicated HUB for LAN used for interconnect and mirror disk connection.

For the HUB, use the high-speed HUB.

It is not necessary to establish connectivity between servers using the connect COM (RS-232C).

Fig. 2.8 Example of a configuration with three nodes¶

Interconnect LAN cables are connected to the interconnect hub, which is not connected to any other server or client.

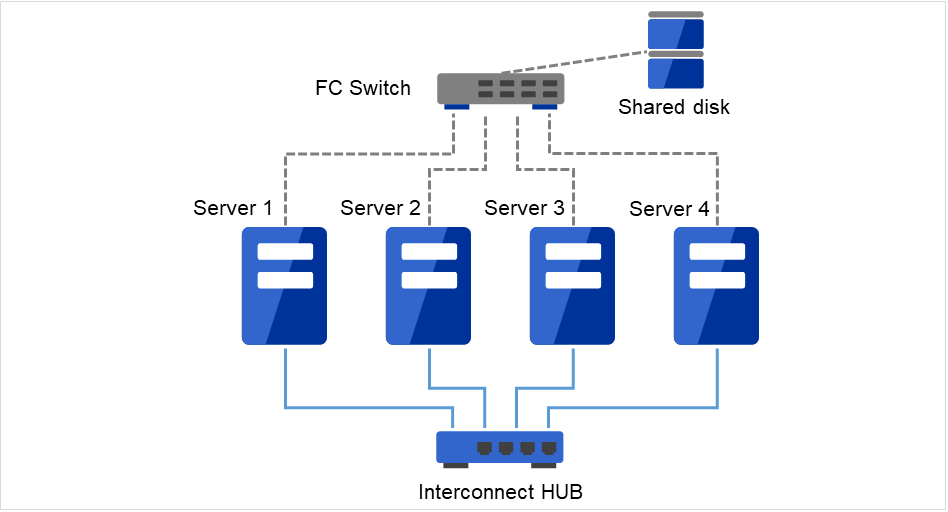

2.3.7. Example 6: configuration with four nodes¶

As is the case with two nodes, connect a shared disk.

Install a dedicated HUB for interconnect.

It is not necessary to establish connectivity between servers using the connect COM (RS-232C).

Fig. 2.9 Example of a configuration with four nodes¶

Interconnect LAN cables are connected to the interconnect hub, which is not connected to any other server or client.

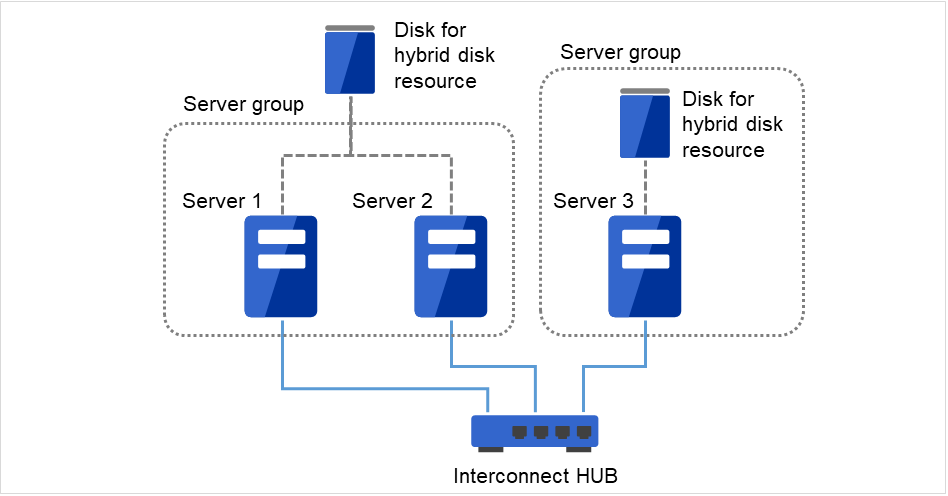

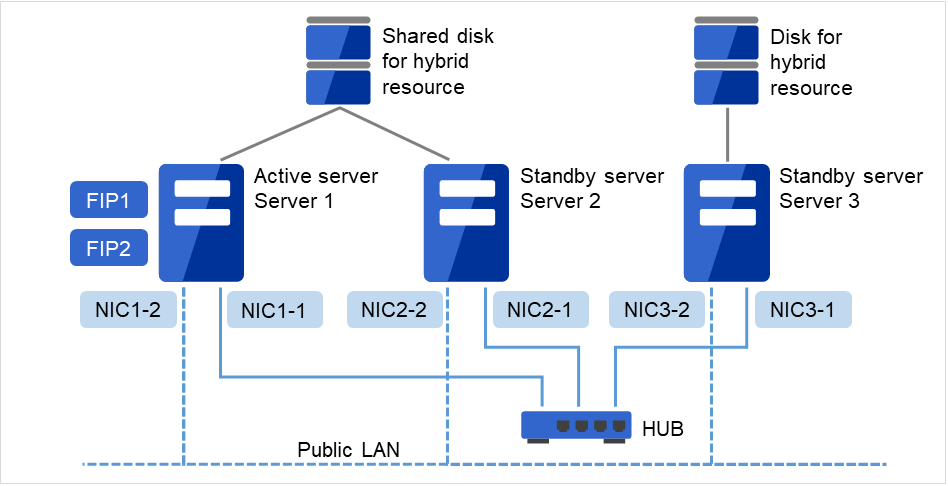

2.3.8. Example 7: Configuration of hybrid type with three nodes¶

This is a configuration with three nodes, which consists of two nodes connected to the shared disk and one node with the disk to be mirrored.

Different models can be used for servers but the servers must be configured in the same architecture.

Install a dedicated HUB for interconnection and LAN of mirror disk connect.

For the HUB, use a fast HUB.

Fig. 2.10 Example of a configuration of the hybrid type with three nodes¶

Interconnect LAN cables are connected to the interconnect hub, which is not connected to any other server or client.

2.4. Checking system requirements for each EXPRESSCLUSTER module¶

EXPRESSCLUSTER X consists of two modules: EXPRESSCLUSTER Server (main module) and Cluster WebUI. Check configuration and operation requirements of each machine where these modules will be used. For details about the operating environments, see "Installation requirements for EXPRESSCLUSTER" in the Getting Started Guide.

2.5. Example of EXPRESSCLUSTER (main module) hardware configuration¶

The EXPRESSCLUSTER Server is a core component of EXPRESSCLUSTER. Install it on each server that constitutes a cluster. Cluster WebUI is included in the EXPRESSCLUSTER Server and it is automatically installed once the EXPRESSCLUSTER Server is installed.

2.5.1. General requirements¶

Following is the recommended specification for the EXPRESSCLUSTER Server:

RS-232C port: 1 port (not necessary when configuring a cluster with more than 3 nodes)

Ethernet port: 2 or more ports

Shared disk (For disk resource and/or hybrid disk resource)

Disk for mirroring or free partition (For mirror disk resource or hybrid disk resource)

CD-ROM drive

See also

For information on system requirements for supported hardware and OS, refer to the Getting Started Guide.

2.6. Verifying system requirements for the Cluster WebUI¶

To monitor a cluster system that uses EXPRESSCLUSTER, use Cluster WebUI, which accesses from a management PC via a Web browser. Therefore, a management PC should be able to make access to the cluster via network. The management PC can be Linux or Windows.

For information of the latest system requirements of the Cluster WebUI (supported operating systems and browsers, required memory and disk size) see the Getting Started Guide.

2.7. Determining a hardware configuration¶

Determine a hardware configuration considering an application to be duplicated on a cluster system and how a cluster system is configured. Read "3. Configuring a cluster system" before you determine a hardware configuration.

2.8. Settings after configuring hardware¶

After you have determined the hardware configuration and installed the hardware, do the following:

2.8.1. Shared disk settings for disk resource (Required for disk resource)

2.8.2. Shared disk settings for Hybrid disk resource (Required for Replicator DR)

2.8.3. Partition settings for Hybrid disk resource (Required for the Replicator DR)

2.8.4. Partition settings for Mirror disk resource (when using Replicator)

2.8.5. Adjustment of the operating system startup time (Required)

2.8.3. Partition settings for Hybrid disk resource (Required for the Replicator DR)¶

Follow the steps below to configure the partitions when a non-shared disk (such as internal disk of the server or a non-shared external disk) is used as a hybrid disk resource.

For settings in a general mirror configuration (when Replicator is used), see "2.8.4. Partition settings for Mirror disk resource (when using Replicator)".

When a shared disk is used as a hybrid disk resource, refer to "2.8.2. Shared disk settings for Hybrid disk resource (Required for Replicator DR)".

Note

When you continue using an existing partition (in the cases such as clustering a single server) or reinstalling server, do not allocate a partition for a hybrid disk resource or create a file system. The data on the partition gets deleted if you allocate a partition for hybrid disk resources or create a file system.

- Allocate a cluster partition for hybrid disk resource.Allocate a partition to be used by the mirror driver. The mirror driver and mirror agent use this partition to monitor the status of hybrid disk resource. Create a partition in every server in the cluster that uses hybrid disk resource. Use the fdisk command to set 83 (Linux) for the partition ID.

Note

A cluster partition for hybrid disk resource should be 1024MB (1024*1024*1024 bytes) or larger. (The size will be actually larger than 1024MB even if you specify exactly 1024MB because of the disk geometry difference. This will cause no problem.) You do not need to create a file system on this partition.

Initialize the cluster partition. (Required only when you continue using a disk that is used as disk that was used as EXPRESSCLUSTER mirror disk or hybrid disk.)

Initialization is required because the old data on partitions survive even if allocation of partitions is performed.

If you continue to use a disk that was once used as an EXPRESSCLUSTER mirror disk or hybrid disk, make sure to initialize it.

Run the following command:

dd if=/dev/zero of=<Partition device name to be used as cluster partition>

Note

Running the dd command initializes the specified partition. Before you run the dd command make sure to check the partition device name.

Note

The following message is displayed when you run the dd command. This is not an error.

dd: writing to <Partition_device_name_used_as_a_cluster_partition>: No space left on device

- Allocate a partition for hybrid disk resource.Create a partition to be used for hybrid disk resource. Create the partition in every server in the cluster that use hybrid resource. Run the fdisk command to set 83 (Linux) for the partition ID.

- Create a file system.It is necessary to create a file system on the partition for hybrid disk resource.

The hybrid disk resource basically does not depend on file systems, problems may occur depending on the specification of the fsck of the file system.

It is recommended to use file systems which have journal function for fault tolerance improvement of the system.

Following is the currently supported file systems:

ext2

ext3

ext4

xfs

reiserfs

jfs

It is also possible to directly access the partition without creating a file system.

Note

The EXPRESSCLUSTER controls the file systems on hybrid disk resource. Do not enter the hybrid disk resource or partition for hybrid disk resource into /etc/fstab in the operating system. (Do not enter them into /etc/fstab, even if the ignore option is specified.)

Note

Distributions and kernels where vxfs can be used depend on the support status of vxfs.

Note

When problems occur because of forgetting creating file system, execute the following steps:

- Stop cluster when the cluster is running.For the Cluster WebUI, select Service -> Stop Cluster.For the commands, use clpcl -t -a command.

- Stop mirror agent when mirror agent is running.For the Cluster WebUI, select Service -> Stop Mirror Agent.For the commands, run service clusterpro_md stop on each server.

- Lift access control to the hybrid disk partition.use the clproset command.(Example: cloproset -w -d <partition_device>)

- Create file systemsuse the mkfs or other commands.(Example: mkfs -t ext3 <partition_device>)

- Reboot the system.

- Create a mount point.Create a directory to mount the partition for hybrid disk resource.

If a file system is not used for the partition for hybrid disk resources, a mount point does not need to be created.

2.8.4. Partition settings for Mirror disk resource (when using Replicator)¶

Set up partitions for mirror disk resource by following the steps below. For using hybrid disk resource (for Replicator DR), refer to "2.8.3. Partition settings for Hybrid disk resource (Required for the Replicator DR)".

Note

When you continue using an existing partition (in the cases such as clustering a single server) or reinstalling server, do not allocate a partition for mirror resources. If you create the partition for mirror resources, data on the existing partition will be deleted.

- Allocate a cluster partition.Allocate a partition to be used by the mirror driver. The mirror driver and mirror agent use this partition to monitor the status of mirror disk resource. Create a partition in every server in the cluster that uses mirror disk resource. Use the fdisk command to set 83 (Linux) for the partition ID.

Note

A cluster partition should be 1024MB (1024*1024*1024 bytes) or larger. (The size will be actually larger than 1024MB even if you specify exactly 1024MB because of the disk geometry difference. This will cause no problem.) You do not need to create a file system on this partition.

- Initialize the cluster partition. (Required only when you continue using a disk that is used as disk that was used as EXPRESSCLUSTER mirror disk or hybrid disk.)

Initialization is required because the old data on the cluster partition remains even if allocation of partitions is performed.

If you continue to use a disk that was once used as an EXPRESSCLUSTER mirror disk or hybrid disk, make sure to initialize it.

Run the following command:

dd if=/dev/zero of=<The name of the partition device to be used as cluster partition>

Note

Running the dd command initializes the specified partition. Before you run the dd command make sure to check the partition device name.

Note

The following message is displayed when you run the dd command. This is not an error.

dd: writing to <Partition_device_name_used_as_a_cluster_partition>: No space left on device

- Allocate a partition for mirror disk resourceCreate a partition to be used for mirror disk resource. Create a partition in every server in the cluster that use mirror resource. Run the fdisk command to set 83 (Linux) for the partition ID.

- Create a file system.Creation of a file system for the partition used for mirror resource depends on the Execute initial mkfs setting.

- If Execute initial mkfs is selected when creating the cluster configuration data using the Cluster WebUI, EXPRESSCLUSTER will automatically create a file system.Note that the existing data on the partition will be lost.

- If Execute initial mkfs is not selected when creating the cluster configuration data using the Cluster WebUI, EXPRESSCLUSTER will not create a file system.Because this option causes the existing file system on the partition to be used, it is necessary to create a file system in advance.

In addition, note the following about the partition for mirror resource:

The mirror resource basically does not depend on file systems, problems may occur depending on the specification of the fsck of the file system.

It is recommended to use a file system capable of journaling to avoid system failures.

The file systems currently supported are:

ext2

ext3

ext4

xfs

reiserfs

jfs

It is also possible to directly access the partition without creating a file system.

Note

Do not select Execute initial mkfs when you use the data has been saved on the partition.If you select it, the data will be removed.Note

The EXPRESSCLUSTER controls the file systems on the mirror resource. Do not enter the mirror resource or a partition for the mirror resource into the operating system /etc/fstab directory. (Do not enter them into /etc/fstab, even if the ignore option is specified.)

Note

Distributions and kernels where vxfs can be used depend on the vxfs support status.

- Create a mount point.Create a directory to mount the partition for mirror resource.Create this directory on all servers in the cluster that use mirror resource.

2.8.5. Adjustment of the operating system startup time (Required)¶

It is necessary to configure the time from power-on of each node in the cluster to the server operating system startup to be longer than the following:

The time from power-on of the shared disk to the point they become available.

Heartbeat timeout time (90 seconds by default in the Cluster WebUI.)

Adjustment of the startup time is necessary due to the following reasons:

Activating disk resources fails if the cluster system is started by powering on the shared disk and servers.

A failover fails if a server, with data you want to fail over by rebooting the server, reboots within the heartbeat timeout. This is because a remote server assumes heartbeat is continued.

Consider the times durations above and adjust the operating system startup time by following the procedure below.

Note

How you configure the time is determined by what is used as an operating system loader, LILO or GRUB.

When GRUB is used for the operating system loader

- Edit /boot/grub/menu.lst.Specify the timeout <startup_time> (in seconds) option. In the following example, change only the bolded part.

Example: Startup time: 90 seconds

default 0 timeout 90 title linux kernel (hd0,1)/boot/vmlinuz root=/dev/sda2 vga=785 initrd (hd0,1)/boot/initrd

When LILO is used for the operating system loader

- Edit /etc/lilo.conf.Specify the prompt option and timeout=<startup_time> (in 1/10 seconds) option, or specify the delay=<startup_time> (in 1/10 seconds) option without specifying the prompt option. In the following example, change only the bolded part.

Example 1: Output prompt. Startup time: 90 seconds

boot=/dev/sda map=/boot/map install=/boot/boot.b prompt linear timeout=900 image=/boot/vmlinuz label=linux root=/dev/sda1 initrd=/boot/initrd.img read-onlyExample 2: Not output prompt. Startup time: 90 seconds

boot=/dev/sda map=/boot/map install=/boot/boot.b #prompt linear delay=900 image=/boot/vmlinuz label=linux root=/dev/sda1 initrd=/boot/initrd.img read-only

Run the /sbin/lilo command to make the changes of the setting effective.

Note

When you are using an operating system loader other than LILO or GRUB is used, see the setup guide of the operating system loader.

When GRUB2 is used for the operating system loader

- Edit /etc/default/grub.Specify GRUB_TIMEOUT=<startup_time> (in seconds).

Example: Startup time: 90 seconds

GRUB_TIMEOUT=90

Run the command to make the changes of the setting effective.

For the BIOS base server

# grub2-mkconfig -o /boot/grub2/grub.cfg

For the UEFI base server

# grub2-mkconfig -o /boot/efi/EFI/redhat/grub.cfg

2.8.6. Verification of the network settings (Required)¶

On all servers in the cluster, verify the status of the following networks using the ifconfig or ping command. Verify if network devices (eth0, eth1, eth2, etc) are assigned to appropriate roles, such as public LAN and interconnect-dedicated LAN.

Public LAN (used for communication with all the other machines)

LAN dedicated to interconnect (used for communication between EXPRESSCLUSTER Servers)

Host name

Note

It is not necessary to specify the IP addresses of floating IP resources or virtual IP resources used in the cluster in the operating system.

2.8.7. Verification of the firewall settings (Required)¶

EXPRESSCLUSTER uses several port numbers for communication between the modules. For details about the port numbers to be used, see "Before 4. Installing EXPRESSCLUSTER" of "Notes and Restrictions" in the "Getting Started Guide".

2.8.8. Server clock synchronization (Required)¶

It is recommended to regularly synchronize the clocks of all the servers in the cluster. Make the settings that synchronize server clocks through protocol such as ntp on a daily basis.

Note

If the clock in each server is not synchronized, it may take time to analyze the problem when an error occurs.

3. Configuring a cluster system¶

This chapter provides information on applications to be duplicated, cluster topology, and explanation on cluster configuration data that are required to configure a cluster system.

This chapter covers:

3.1. Configuring a cluster system¶

This chapter provides information necessary to configure a cluster system, including the following topics:

Determining a cluster system topology

Determining applications to be duplicated

Creating the cluster configuration data

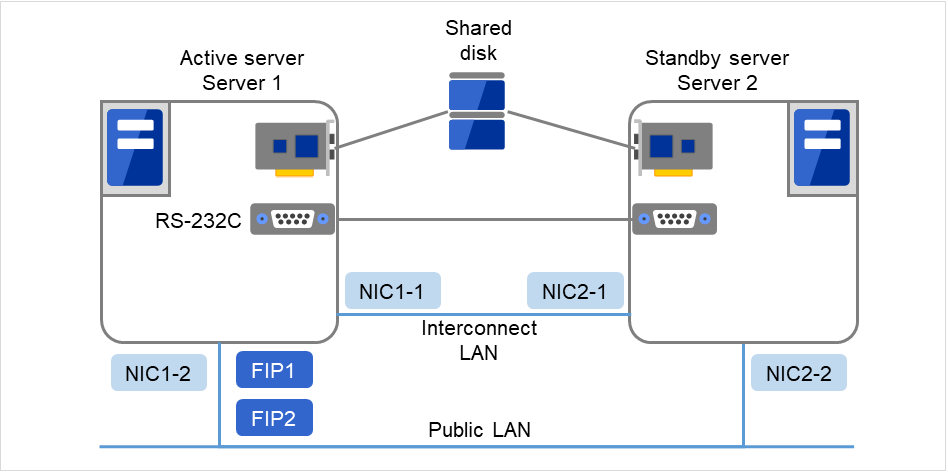

In this guide, explanations are given using a 2-node and uni-directional standby cluster environment as an example.

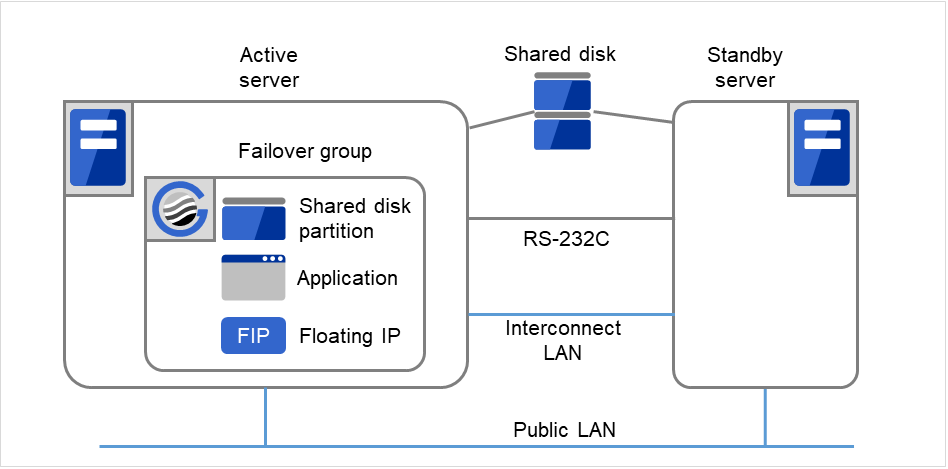

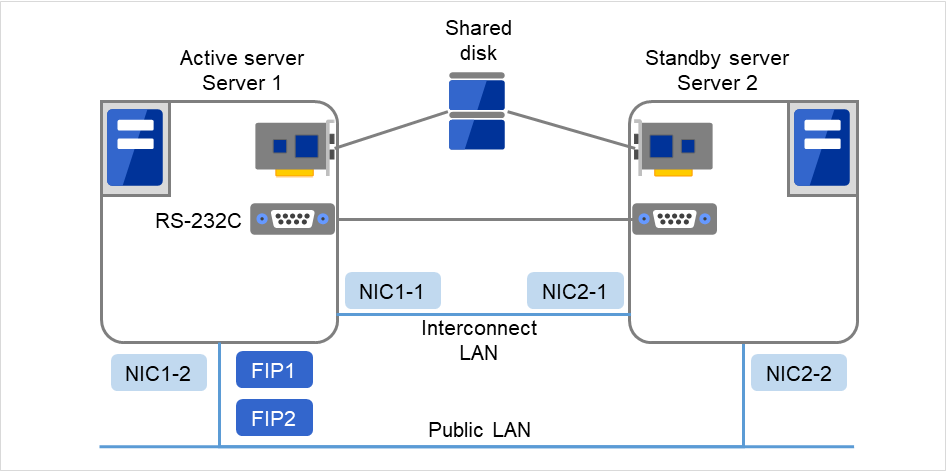

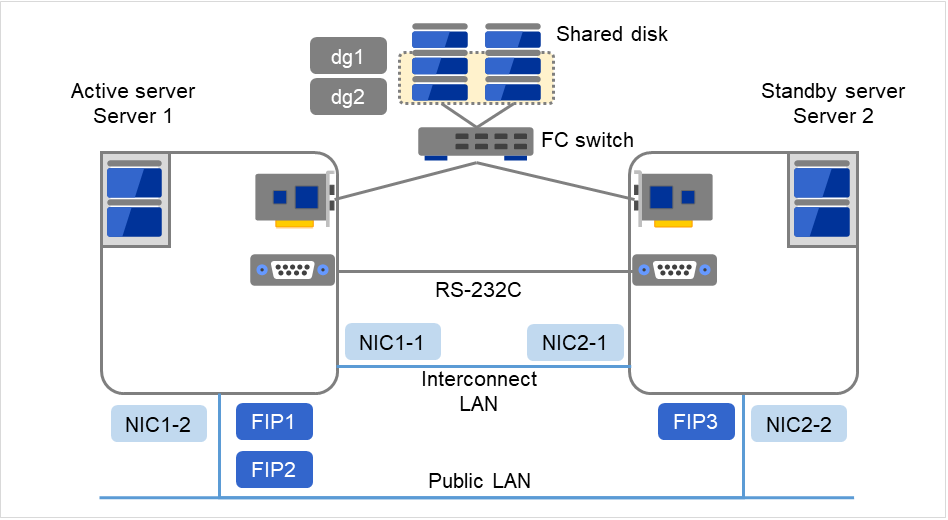

Fig. 3.1 Example of a 2-node and uni-directional standby cluster environment¶

FIP1 |

10.0.0.11

(to be accessed by Cluster WebUI clients)

|

FIP2 |

10.0.0.12

(to be accessed by operation clients)

|

NIC1-1 |

192.168.0.1 |

NIC1-2 |

10.0.0.1 |

NIC2-1 |

192.168.0.2 |

NIC2-2 |

10.0.0.2 |

RS-232C device |

/dev/ttyS0 |

Shared disk

Device name of the disk heartbeat

/dev/sdb1

Raw device name of the disk heartbeat

/dev/raw/raw1

Shared disk device

/dev/sdb2

Mount point

/mnt/sdb2

File system

ext3

Device name of the raw monitor

Not set

Name of the raw device to be monitored by raw monitor

/dev/raw/raw1

3.2. Determining a cluster topology¶

EXPRESSCLUSTER supports multiple cluster topologies. There are uni-directional standby cluster system that considers one server as an active server and other as standby server, and multi-directional standby cluster system in which both servers act as active and standby servers for different operations.

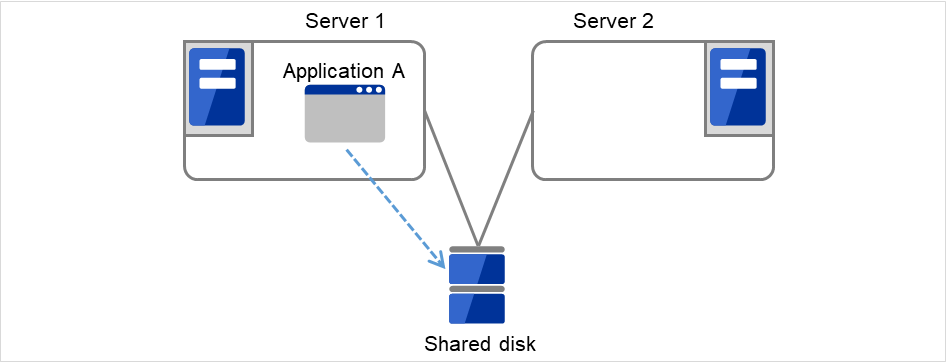

- Uni-directional standby cluster systemIn this operation, only one application runs on an entire cluster system. There is no performance deterioration even when a failover occurs. However, resources in a standby server will be wasted.

Fig. 3.2 Uni-directional standby cluster system¶

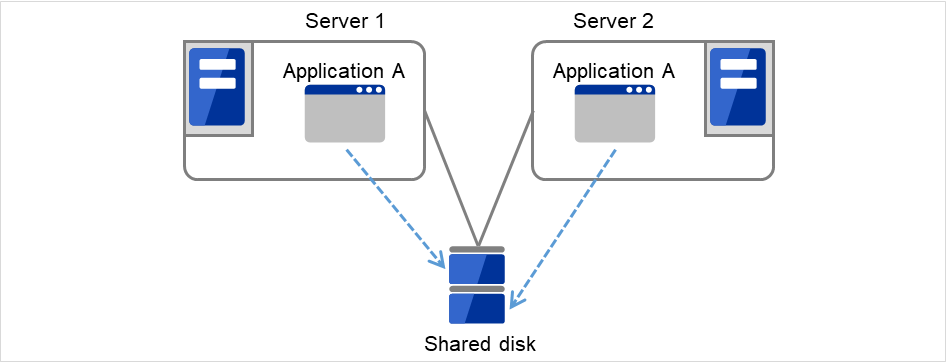

- Multi-directional standby cluster system with the same applicationIn this operation, the same applications run simultaneously on a cluster system. Applications used in this system must support multi-directional standby operations.

Fig. 3.3 Multi-directional standby cluster system with the same application¶

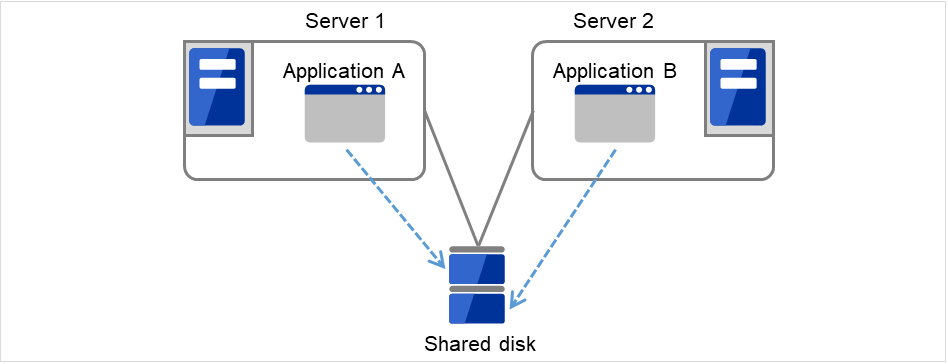

- Multi-directional standby cluster system with different applicationsIn this operation, different applications run on different servers and standby each other. Resources will not be wasted during normal operation; however, two applications run on one server after failing over and system performance deteriorates.

Fig. 3.4 Multi-directional standby cluster system with different applications¶

3.2.1. Failover in uni-directional standby cluster¶

On a uni-directional standby cluster system, the number of groups for an operation service is limited to one as described in the diagrams below:

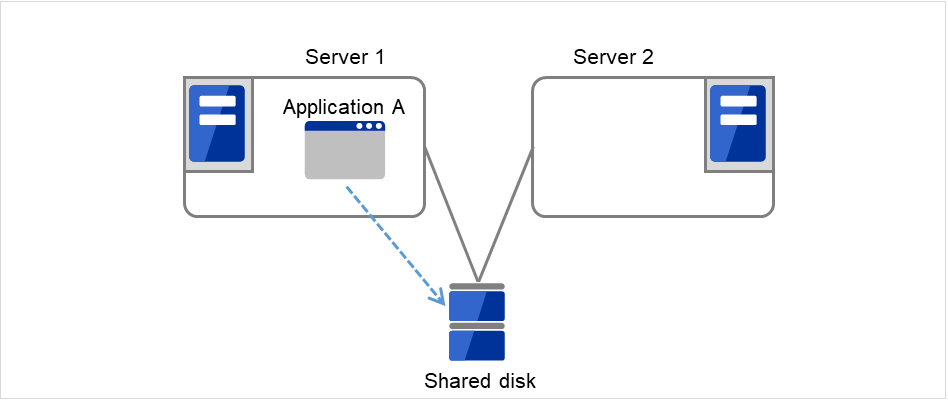

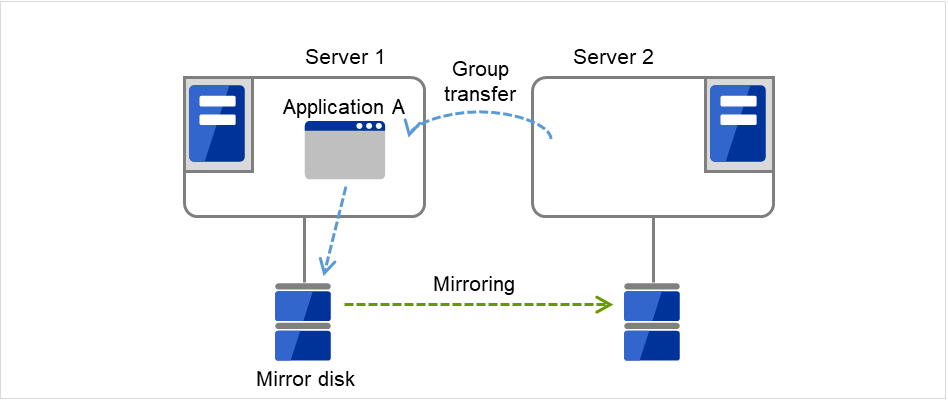

3.2.1.2. When mirror disks are used¶

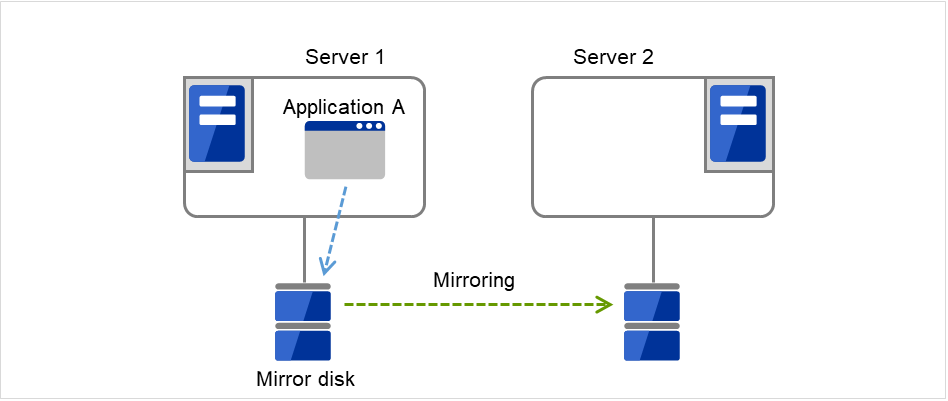

1. Server 1 runs Application A. Application A can be run on only one server in the same cluster.

Fig. 3.9 Uni-directional standby cluster with mirror disks (1): in normal operation¶

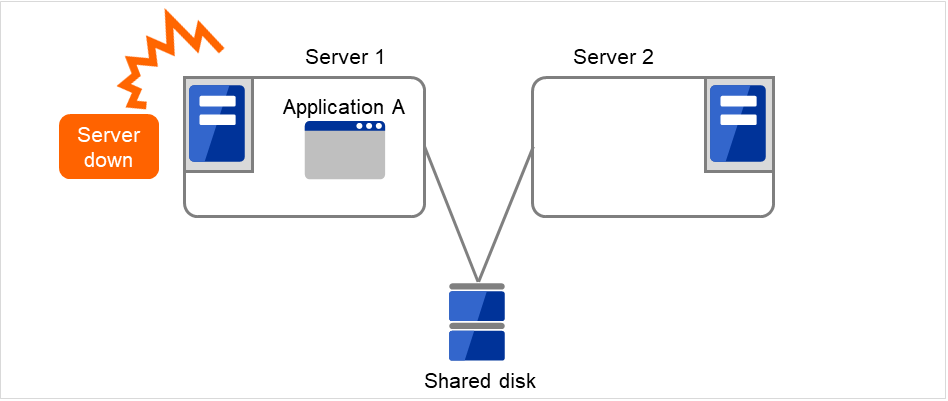

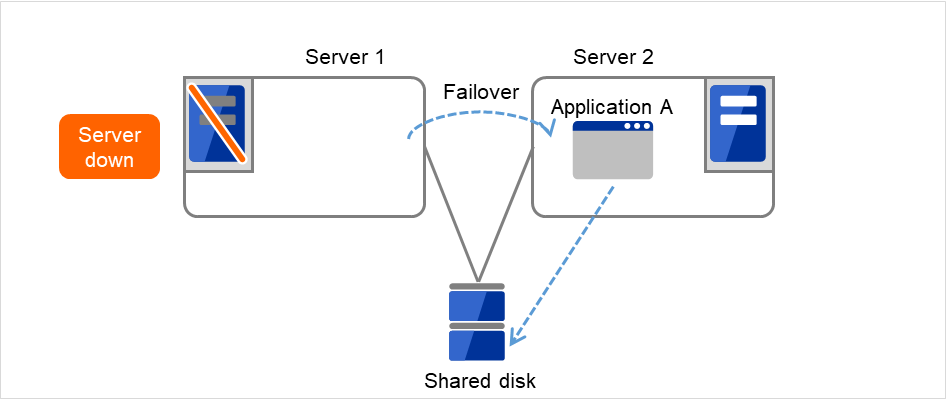

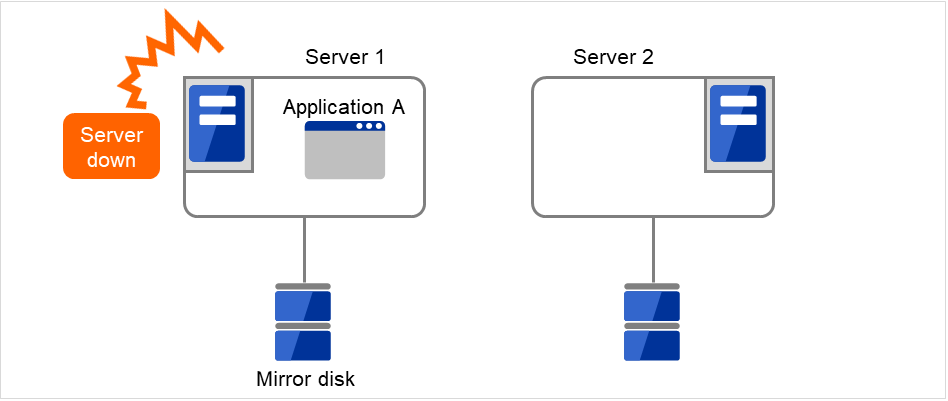

Server 1 crashes due to some error.

Fig. 3.10 Uni-directional standby cluster with mirror disks (2): when the server crashes¶

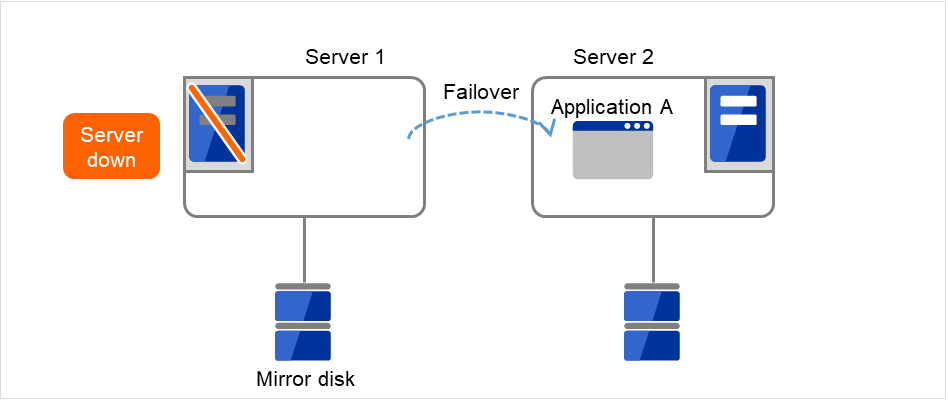

The application is failed over from Server 1 to Server 2.

Fig. 3.11 Uni-directional standby cluster with mirror disks (3): during a failover¶

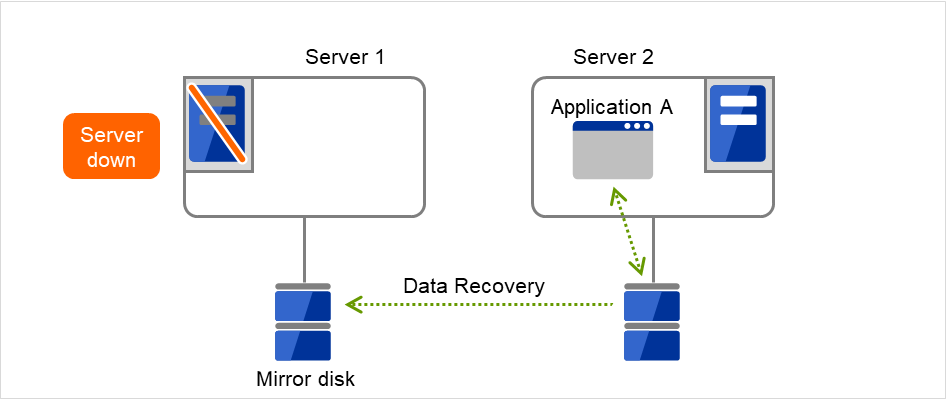

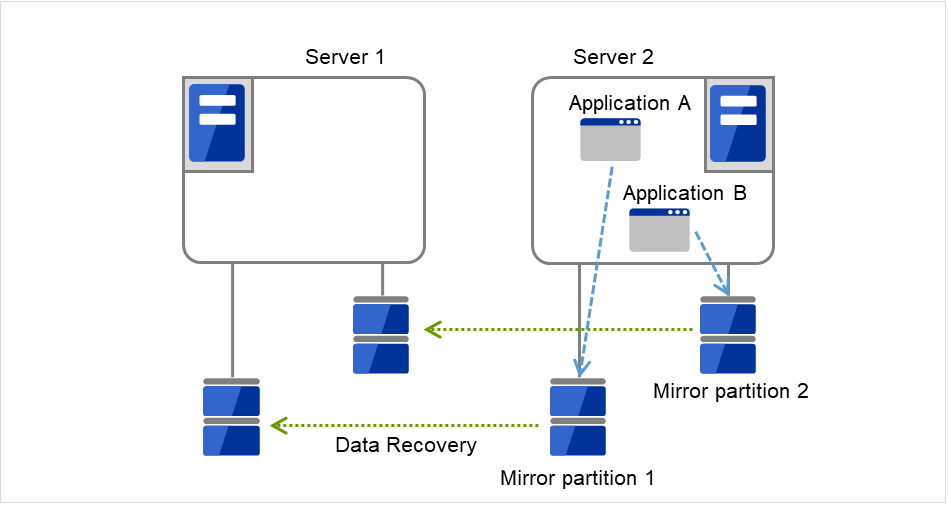

To resume the application, data is recovered from Server 2's mirror disk.

Fig. 3.12 Uni-directional standby cluster with mirror disks (4): during data recovery¶

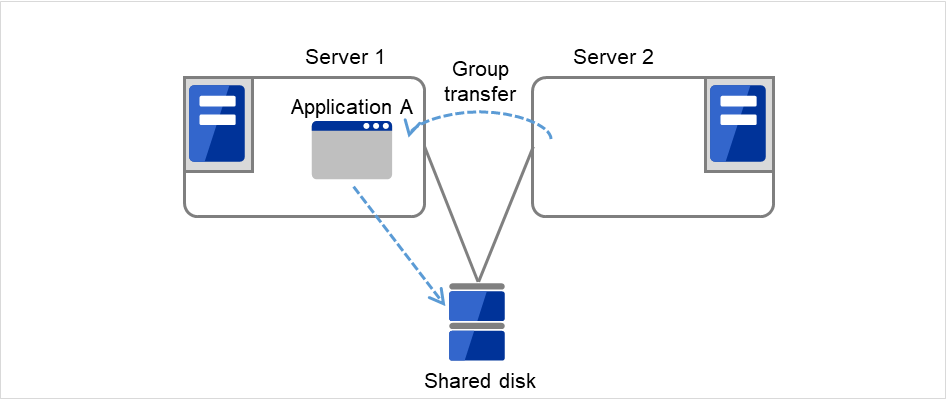

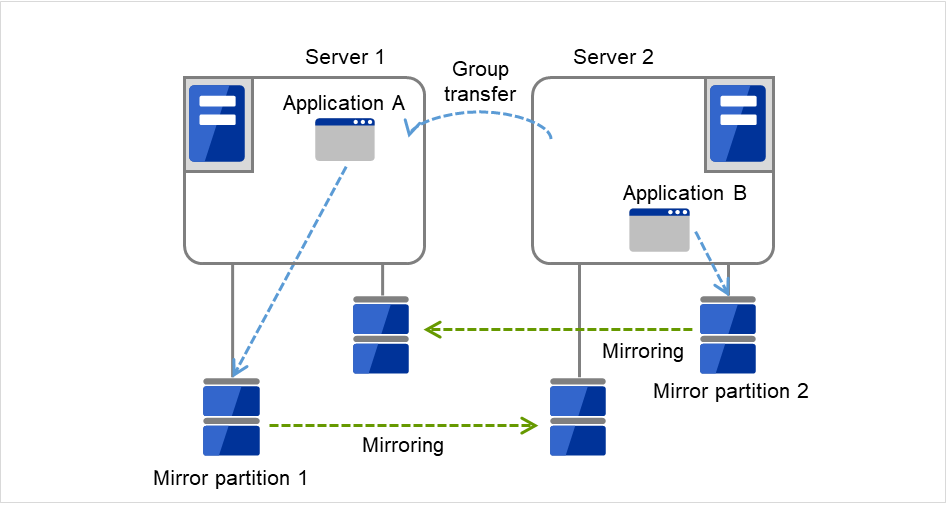

After Server 1 is restored, a group transfer can be made for Application A to be returned from Server 2 to Server 1.

Fig. 3.13 Uni-directional standby cluster with mirror disks (5): After the server is restored¶

3.2.2. Failover in multi-directional standby cluster¶

On a multi-directional standby cluster system, an application can simultaneously run on multiple servers. However, an active server gets heavily loaded when a failover occurs as described in the diagram below:

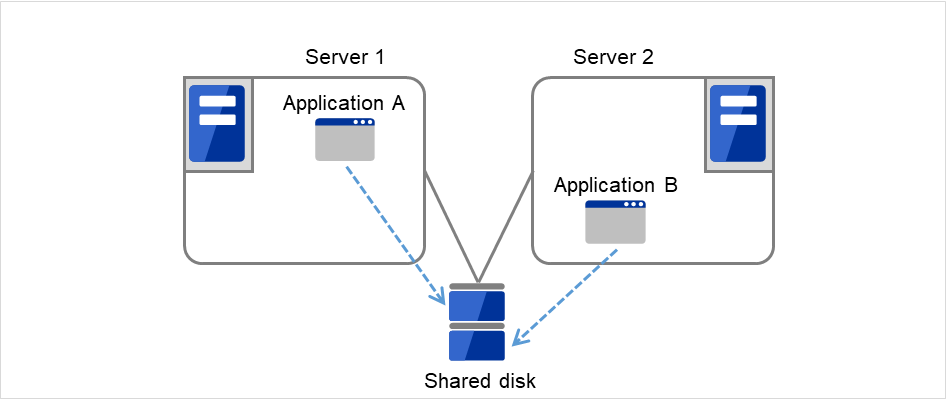

3.2.2.1. When a shared disk is used¶

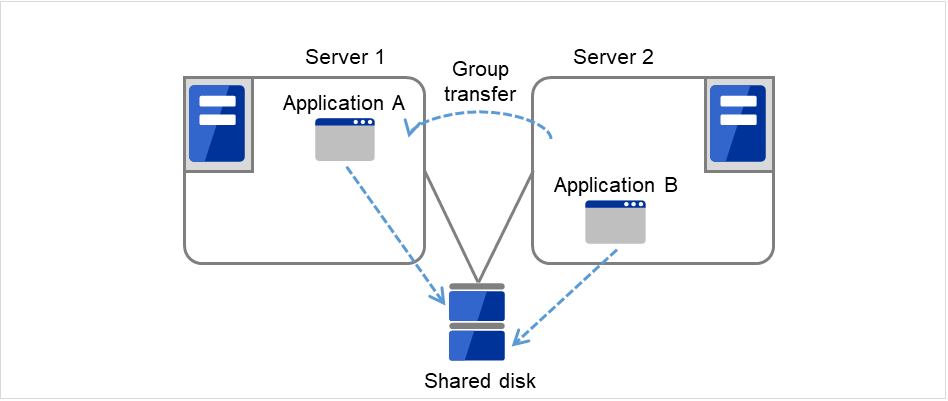

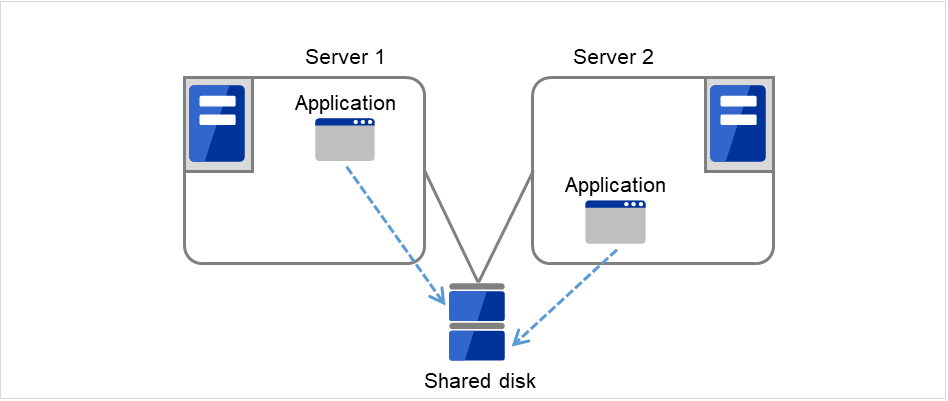

Server 1 runs Application A while Server 2 runs Application B.

Fig. 3.14 Multi-directional standby cluster with a shared disk (1): in normal operation¶

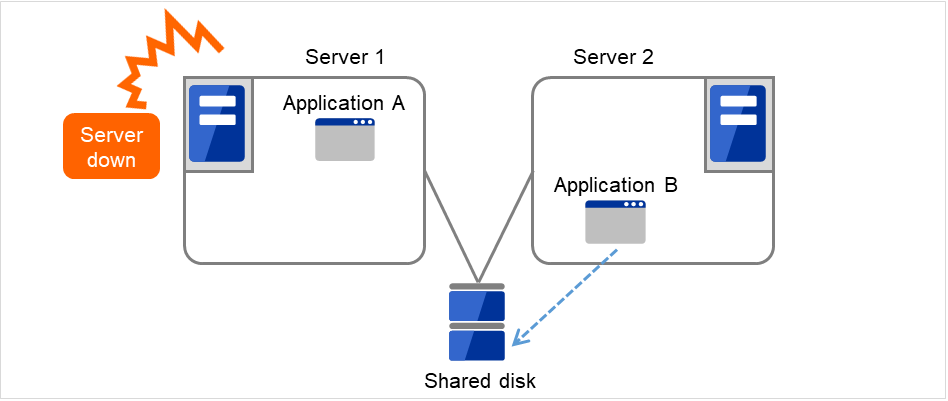

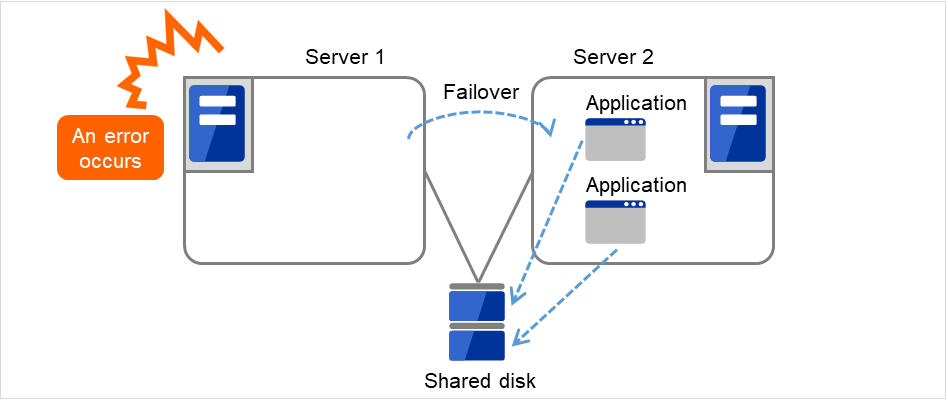

Server 1 crashes due to some error.

Fig. 3.15 Multi-directional standby cluster with a shared disk (2): when the server crashes¶

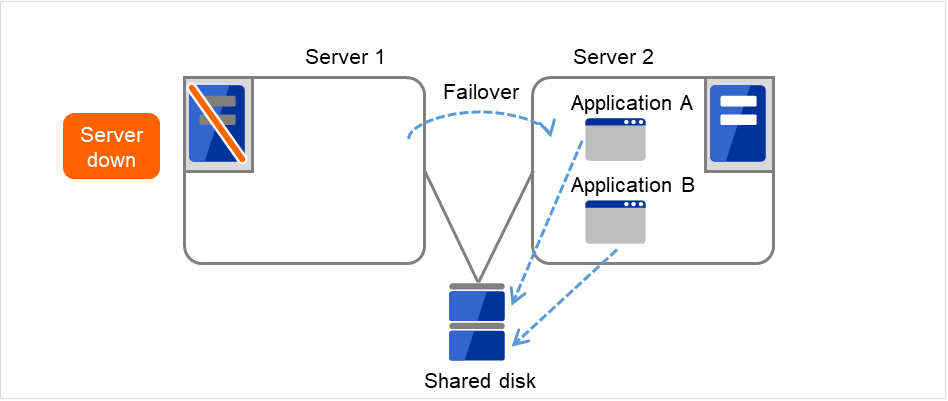

Application A is failed over from Server 1 to Server 2.

Fig. 3.16 Multi-directional standby cluster with a shared disk (3): during a failover¶

After Server 1 is restored, a group transfer can be made for Application A to be returned from Server 2 to Server 1.

Fig. 3.17 Multi-directional standby cluster with a shared disk (4): after the server is restored¶

3.2.2.2. When mirror disks are used¶

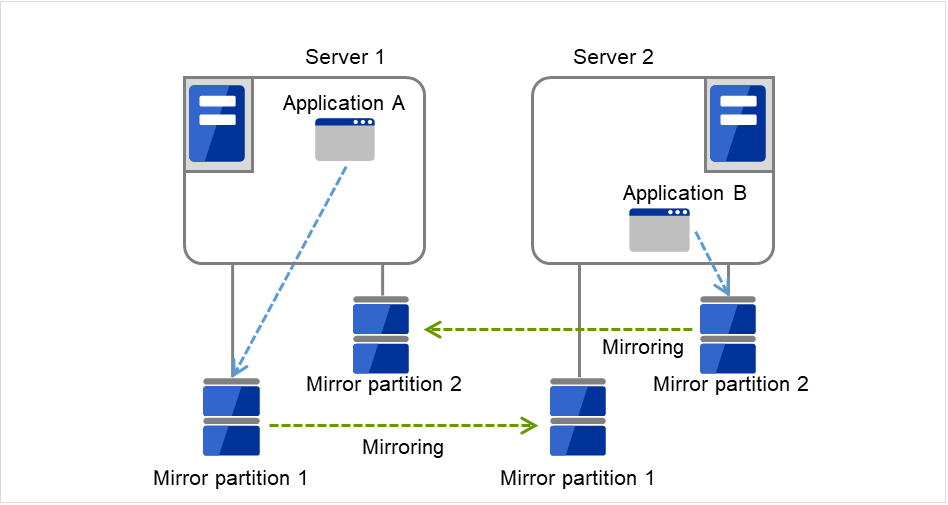

Server 1 runs Application A while Server 2 runs Application B.

Fig. 3.18 Multi-directional standby cluster with mirror disks (1): in normal operation¶

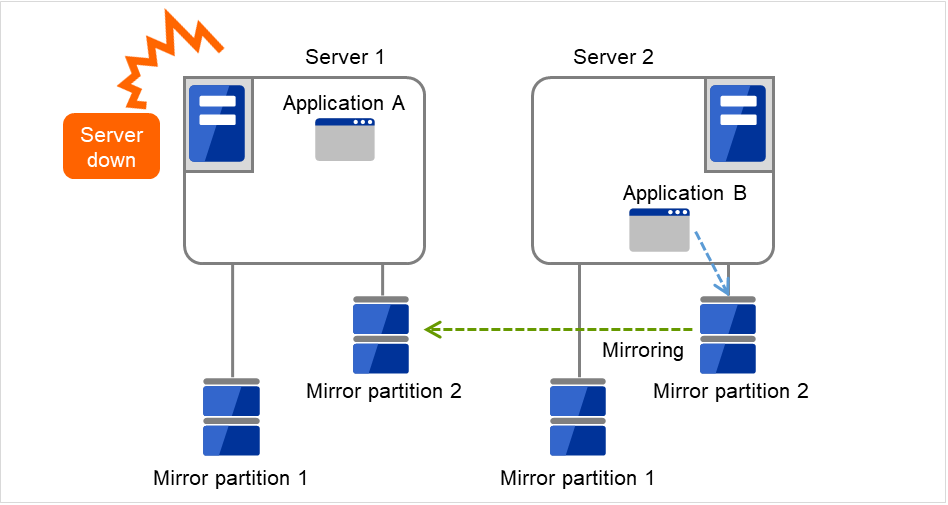

Server 1 crashes due to some error.

Fig. 3.19 Multi-directional standby cluster with mirror disks (2): when the server crashes¶

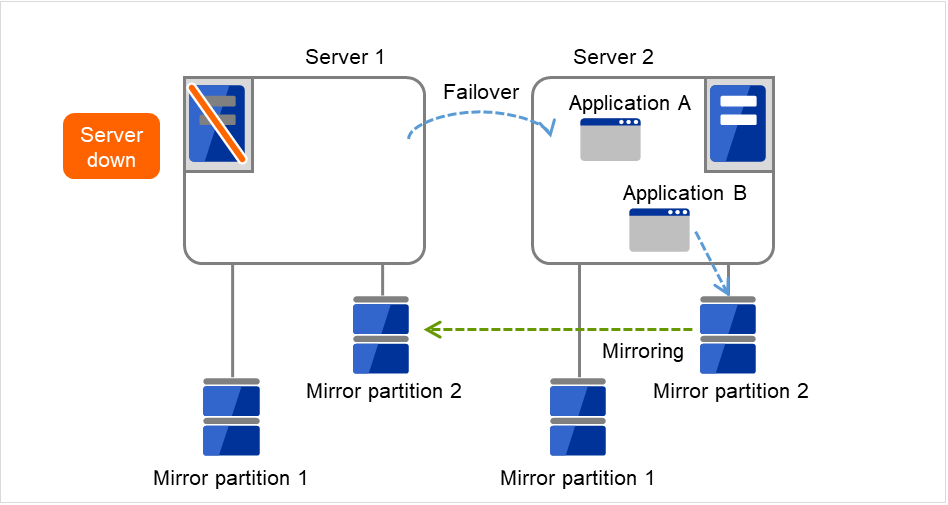

Application A is failed over from Server 1 to Server 2.

Fig. 3.20 Multi-directional standby cluster with mirror disks (3): during a failover¶

To resume Application A, data is recovered from Server 2's Mirror partition 1.

Fig. 3.21 Multi-directional standby cluster with mirror disks (4): during data recovery¶

After Server 1 is restored, a group transfer can be made for Application A to be returned from Server 2 to Server 1.

Fig. 3.22 Multi-directional standby cluster with mirror disks (5): after the server is restored¶

3.3. Determining applications to be duplicated¶

When you determine applications to be duplicated, study candidate applications considering the pointes described below to see whether they should be clustered in your EXPRESSCLUSTER cluster system.

3.3.1. Configuration relevant to the notes¶

What you need to consider differs depending on which standby cluster system is selected for an application. Following is the notes for each cluster system. The numbers correspond to the numbers of notes (1 through 5) described above:

Note for uni-directional standby [Active-Standby]: 1, 2, 3, and 5

Note for multi-directional standby [Active-Active]: 1, 2, 3, 4, and 5

- Note for co-existing behaviors: 5(Applications co-exist and run. The cluster system does not fail over the applications.)

3.3.2. Server applications¶

3.3.2.1. Note 1: Data recovery after an error¶

If an application was updating a file when an error has occurred, the file update may not be completed when the standby server accesses to that file after the failover.

The same problem can happen on a non-clustered server (single server) if it goes down and then is rebooted. In principle, applications should be ready to handle this kind of errors. A cluster system should allow recovery from this kind of errors without human interventions (from a script).

EXPRESSCLUSTER executes fsck if the file system on a shared disk or mirror disk requires fsck.

3.3.2.2. Note 2: Application termination¶

When EXPRESSCLUSTER stops or transfers (performs online failback of) a group for application, it unmounts the file system used by the application group. Therefore, you have to issue an exit command for applications so that they stop accessing files on a shared disk or mirror disk.

Typically, you give an exit command to applications in their stop scripts; however, you have to pay attention if an exit command completes asynchronously with termination of the application.

3.3.2.3. Note 3: Location to store the data¶

EXPRESSCLUSTER can pass the following types of data between severs:

Data type

Example

Where to store

Data to be shared among servers

User data, etc.

On shared disk or mirror disks

Data specific to a server

Programs, configuration data

On server's local disks

3.3.2.4. Note 4: Multiple application service groups¶

For multi-directional standby operation, you have to assume (in case of degeneration due to a failure) that multiple application groups are run by the same application on a server.Applications should have capabilities to take over the passed resources by one of the following methods described in the diagram below. A single server is responsible for running multiple application groups. The same is true for mirror disks:

Fig. 3.23 Application running normally on each server in a multi-directional standby cluster¶

- Starting up multiple instancesThis method invokes a new process. More than one application should co-exist and run.

Fig. 3.24 Starting up multiple instances¶

Fig. 3.25 Restarting the application¶

Fig. 3.26 Adding resources dynamically¶

3.3.2.5. Note 5: Mutual interference and compatibility with applications¶

Sometimes mutual interference between applications and EXPRESSCLUSTER functions or the operating system functions required to use EXPRESSCLUSTER functions prevents applications or EXPRESSCLUSTER from working properly.

Some applications like those responsible for system monitoring service periodically access all disk partitions. To use such applications in your cluster environment, they need a function that allows you to specify monitoring partitions.

3.3.3. Solution to the problems relevant to the notes¶

Problems |

Solution |

Note to refer |

|---|---|---|

When an error occurs while updating a data file, the application does not work properly on the standby server. |

Modify the program |

Note 1: Data recovery after an error |

The application keeps accessing a disk or file system for a certain period of time even after it is stopped. |

Execute the sleep command during stop script execution. |

Note 2: Application termination |

The same application cannot be started more than once on one server. |

In multi-directional operation, reboot the application at failover and pass the shared data. |

Note 3: Location to store the data |

3.3.4. How to determine a cluster topology¶

Carefully read this chapter and determine the cluster topology that suits your needs:

When to start which application

Actions that are required at startup and failover

Data to be placed in disk resources, mirror disk resources or hybrid disk resources.

3.4. Planning a cluster configuration¶

A group is a set of resources required to perform an independent operation service in a cluster system. Failover takes place by the unit of group. A group has its group name, group resources, and attributes.

Fig. 3.27 Failover group and group resources¶

Resources in each group are handled by the unit of the group. If a failover occurs in group1 that has Disk resource1 and Floating IP resource1, a failover of Disk resource1 and a failover of Floating IP resource1 are concurrent (failover of disk resource 1 never takes place without that of Floating IP resource1). Likewise, Disk resources1 is never contained in other groups, such as group2.

3.5. Understanding group resources¶

For a failover to occur in a cluster system, a group that works as a unit of failover must be created. A group consists of group resources. In order to create an optimal cluster, you must understand what group resources to be added to the group you create, and have a clear vision of your operation.

See also

For details on each resource, refer to the "Reference Guide".

Following is the currently supported group resources:

Group Resource Name |

Abbreviation |

|---|---|

EXEC resource |

exec |

Disk resource |

disk |

Floating IP resource |

fip |

Virtual IP resource |

vip |

Mirror disk resource |

md |

Hybrid disk resource |

hd |

NAS resource |

nas |

Volume manager resource |

volmgr |

VM resource |

vm |

Dynamic DNS resource |

ddns |

AWS Elastic IP resource |

awseip |

AWS Virtual IP resource |

awsvip |

AWS DNS resource |

awsdns |

Azure probe port resource |

azurepp |

Azure DNS resource |

azuredns |

Google Cloud Virtual IP resource |

gcvip |

Google Cloud DNS resource |

gcdns |

Oracle Cloud Virtual IP resource |

ocvip |

3.6. Understanding monitor resources¶

- Always monitors:

Monitoring is performed from when the cluster is started up until it is shut down.

- Monitors while activated:

Monitoring is performed from when a group is activated until it is deactivated.

Following is the currently supported monitor resource:

Monitor Resource Name |

Abbreviation |

Always monitors |

Monitors while activated |

|---|---|---|---|

Disk monitor resource |

diskw |

✓ |

✓ |

IP monitor resource |

ipw |

✓ |

✓ |

NIC Link Up/Down monitor resource |

miiw |

✓ |

✓ |

BMC monitor resource |

bmcw |

✓ |

|

Mirror disk connect monitor resource |

mdnw |

✓ |

|

Mirror disk monitor resource |

Mdw |

✓ |

|

Hybrid disk connect monitor resource |

Hdnw |

✓ |

|

Hybrid disk monitor resource |

hdw |

✓ |

|

PID monitor resource |

pidw |

✓ |

|

User mode monitor resource |

userw |

✓ |

|

Custom monitor resource |

genw |

✓ |

|

Volume manager monitor resource |

volmgrw |

✓ |

✓ |

Multi-target monitor resource |

mtw |

✓ |

|

Virtual IP monitor resource |

vipw |

✓ |

|

ARP monitor resource |

arpw |

✓ |

|

VM monitor resource |

vmw |

✓ |

|

Message receive monitor resource |

mrw |

✓ |

✓ |

Dynamic DNS monitor resource |

ddnsw |

✓ |

|

Process name monitor resource |

psw |

✓ |

✓ |

DB2 monitor resource |

db2w |

✓ |

|

Floating IP monitor resource |

fipw |

✓ |

|

FTP monitor resource |

ftpw |

✓ |

✓ |

HTTP monitor resource |

httpw |

✓ |

✓ |

IMAP4 monitor resource |

imap4 |

✓ |

✓ |

MySQL monitor resource |

mysqlw |

✓ |

|

NFS monitor resource |

nfsw |

✓ |

✓ |

Oracle monitor resource |

oraclew |

✓ |

|

Oracle Clusterware synchronization managemtnt monitor resource |

osmw |

✓ |

|

POP3 monitor resource |

pop3w |

✓ |

✓ |

PostgreSQL monitor resource |

psqlw |

✓ |

|

Samba monitor resource |

sambaw |

✓ |

✓ |

SMTP monitor resource |

smtpw |

✓ |

✓ |

Sybase monitor resource |

sybasew |

✓ |

|

Tuxedo monitor resource |

tuxw |

✓ |

✓ |

WebSphere monitor resource |

wasw |

✓ |

✓ |

WebLogic monitor resource |

wlsw |

✓ |

✓ |

WebOTX monitor resource |

otxw |

✓ |

✓ |

JVM monitor resource |

jraw |

✓ |

✓ |

System monitor resource |

sraw |

✓ |

|

Process resource monitor resource |

psrw |

✓ |

|

AWS Elastic IP monitor resource |

awseipw |

✓ |

|

AWS Virtual IP monitor resource |

awsvipw |

✓ |

|

AWS AZ monitor resource |

awsazw |

✓ |

|

AWS DNS monitor resource |

awsdnsw |

✓ |

|

Azure probe port monitor resource |

azureppw |

✓ |

|

Azure load balance monitor resource |

azurelbw |

✓ |

|

Azure DNS monitor resource |

azurednsw |

✓ |

|

Google Cloud Virtual IP monitor resource |

gcvipw |

✓ |

|

Google Cloud load balance monitor resource |

gclbw |

✓ |

|

Google Cloud DNS monitor resource |

gcdnsw |

✓ |

|

Oracle Cloud Virtual IP monitor resource |

ocvipw |

✓ |

|

Oracle Cloud load balance monitor resource |

oclbw |

✓ |

3.7. Understanding heartbeat resources¶

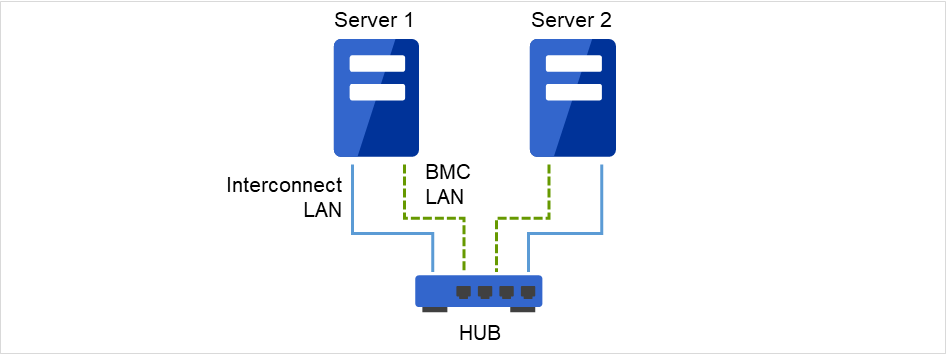

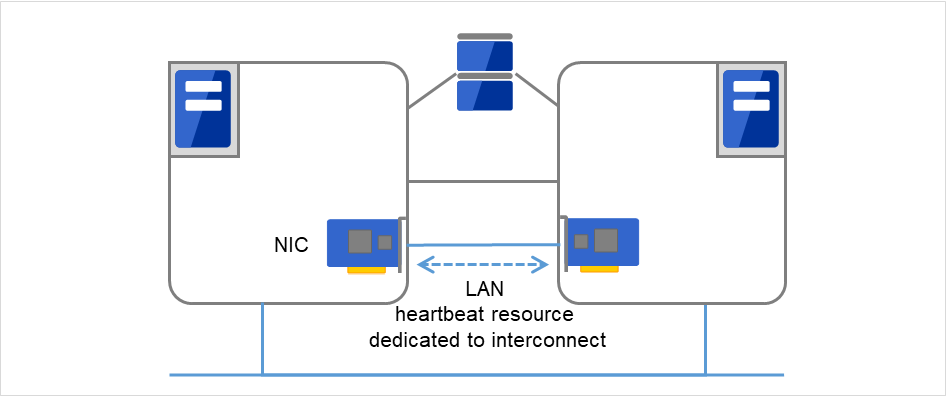

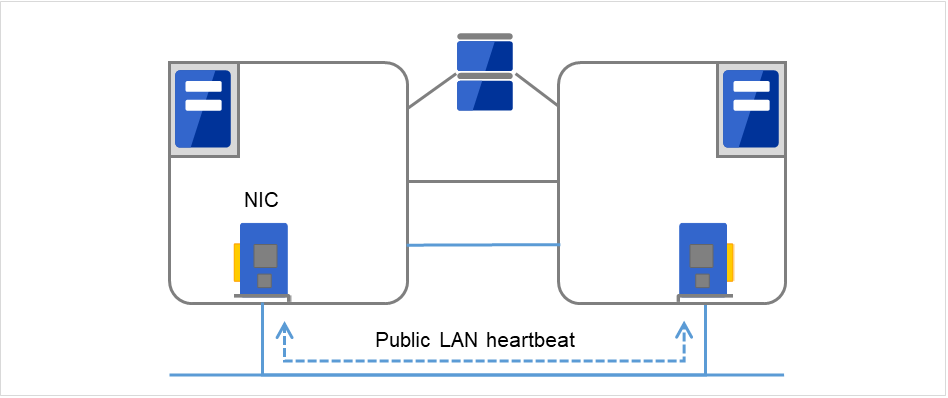

Servers in a cluster system monitor if other servers in the cluster are active. For this, heartbeat resources are used. Following is the heartbeat device types:

LAN heartbeat/kernel mode LAN heartbeat (primary interconnect)

Fig. 3.28 LAN heartbeat/kernel mode LAN heartbeat (primary interconnect)¶

LAN heartbeat/kernel mode LAN heartbeat (secondary interconnect)

Fig. 3.29 LAN heartbeat/kernel mode LAN heartbeat (secondary interconnect)¶

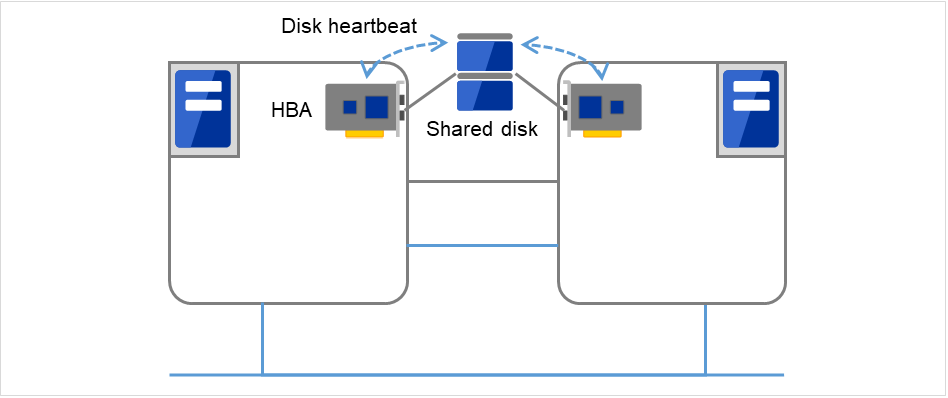

Disk heartbeat

Fig. 3.30 Disk heartbeat¶

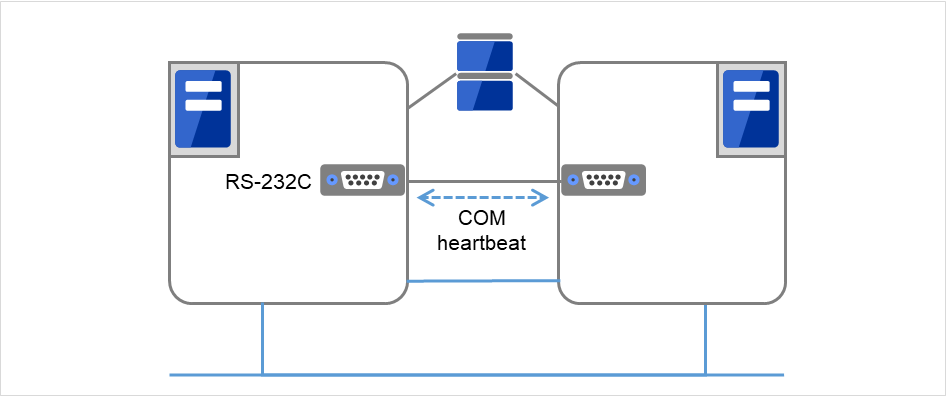

COM heartbeat

Fig. 3.31 COM heartbeat¶

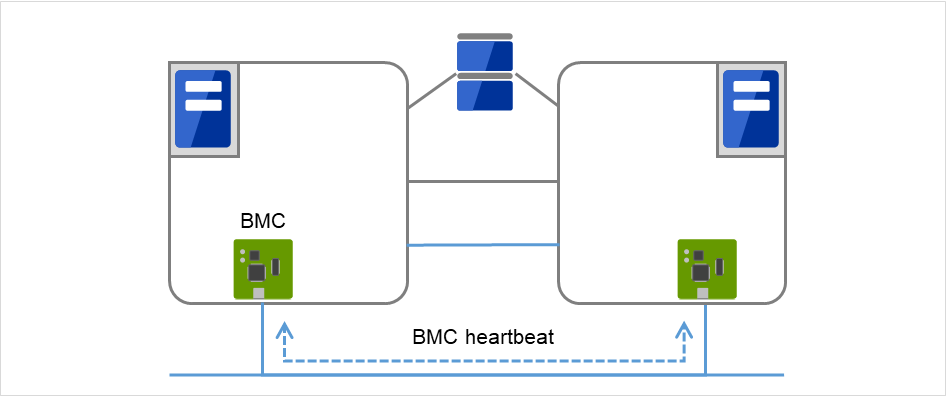

BMC heartbeat

Fig. 3.32 BMC heartbeat¶

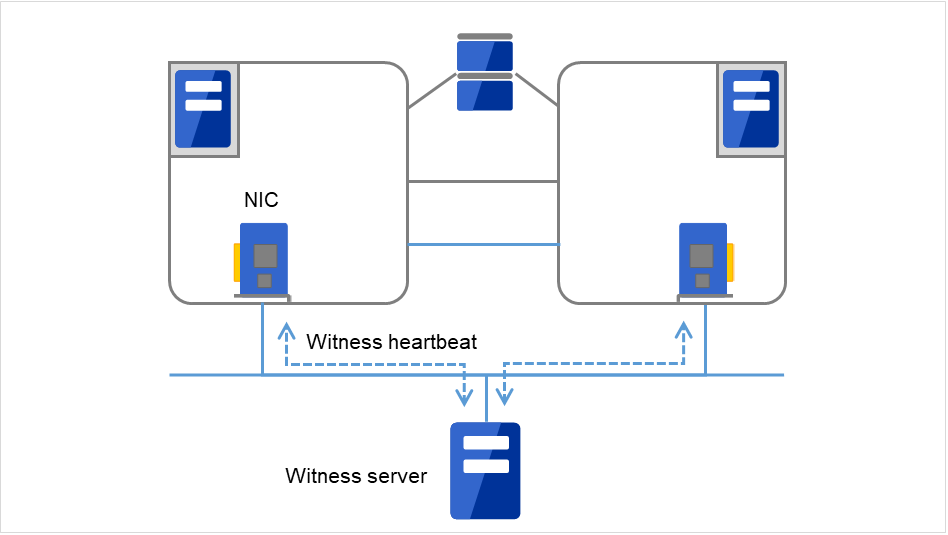

Witness heartbeat

Fig. 3.33 Witness heartbeat¶

Heartbeat Resource Name |

Abbreviation |

Functional Overview |

|---|---|---|

LAN heartbeat resource (1)(2)

|

lanhb

|

Uses a LAN to monitor if servers are active.

Used for communication within the cluster as well.

|

Kernel mode LAN heartbeat resource (1)(2)

|

lankhb |

A kernel mode module uses a LAN to monitor if servers are active.

Used for communication within the cluster as well.

|

Disk heartbeat resource (3) |

diskhb |

Uses a dedicated partition in the shared disk to monitor if servers are active. |

COM heartbeat resource (4) |

comhb |

Uses a COM cable to connect two servers to monitor if servers are active. |

BMC heartbeat resource (5) |

bmchb |

Uses BMC to monitor whether servers are active. |

Witness heartbeat resource (6) |

witnesshb |

Uses the Witness server to monitor whether servers are active. |

At least one LAN heartbeat resource or one kernel mode LAN heartbeat resource must be set. Setting up more than two LAN heartbeat resources is recommended.

Set up one or more LAN heartbeat resource or kernel mode LAN heartbeat resource to be used among all the servers.

Follow the specifications below to set the interface for disk heartbeat resource and COM heartbeat resource:

When a shared disk is used:

Up to two servers:In principle, COM interface and disk interfaceMore than three servers:Disk interfaceWhen a shared disk is not used:

Up to two servers: COM interface

3.8. Understanding network partition resolution resources¶

Network partitioning refers to the status where all communication channels have problems and the network between servers is partitioned.

In a cluster system that is not equipped with solutions for network partitioning, a failure on a communication channel cannot be distinguished from an error on a server. This can cause data corruption brought by access from multiple servers to the same resource. EXPRESSCLUSTER, on the other hand, distinguishes a failure on a server from network partitioning when the heartbeat from a server is lost. If the lack of heartbeat is determined to be caused by the server failure, the system performs a failover by activating each resource and rebooting applications on a server running normally. When the lack of heartbeat is determined to be caused by network partitioning, emergency shutdown is executed because protecting data has higher priority over continuity of the operation. Network partitions can be resolved by the following methods:

Ping method

A device that is always active to receive and respond to the ping command (hereafter described as ping device) is required.

More than one ping device can be specified.

When the heartbeat from the other server is lost, but the ping device is responding to the ping command, it is determined that the server without heartbeat has failed and a failover takes place. If there is no response to the ping command, the local server is isolated from the network due to network partitioning, and emergency shutdown takes place. This will allow a server that can communicate with clients to continue operation even if network partitioning occurs.

When the status where no response returns from the ping command continues before the heartbeat is lost, which is caused by a failure in the ping device, the network partitions cannot be resolved. If the heartbeat is lost in this status, a failover takes place in all servers. Because of this, using this method in a cluster with a shared disk can cause data corruption due to access to a resource from multiple servers.

HTTP method

A Web server that is always active is required.

When the heartbeat from the other server is lost, but there is a response to an HTTP HEAD request, it is determined that the server without heartbeat has failed and a failover takes place. If there is no response to an HTTP HEAD request, it is determined that the local server is isolated from the network due to network partitioning, and an emergency shutdown takes place. This will allow a server that can communicate with clients to continue operation even if network partitioning occurs.

When there remains no response to an HTTP HEAD request before the heartbeat is lost, which is caused by a failure in Web server, the network partitions cannot be resolved. If the heartbeat is lost in this status, emergency shutdowns occur in all the servers.

Not solving the network partition

If a failure occurs on all the network channels between servers in a cluster, all the servers fail over.

The following are the recommended methods to resolve the network partition:

Method to resolve a network partition |

Number of nodes |

Required hardware |

Circumstance where failover cannot be performed |

When all network channels are disconnected |

Circumstance where both servers fail over |

Time required to resolve network partition |

|---|---|---|---|---|---|---|

Ping |

No limit |

Device to receive the ping command and return a response |

None |

Server that responses to the ping command survives |

All networks are disconnected after the ping command timeouts the specified times consecutively. |

0 |

HTTP |

No limit |

Web server |

Web server failure |

A server that can communicate with the Web server survives |

None |

0 |

None |

No limit |

None |

None |

All servers fail over |

All the networks are disconnected |

0 |

4. Installing EXPRESSCLUSTER¶

See also

Refer to "Upgrading EXPRESSCLUSTER" in the "Getting Started Guide" to upgrade EXPRESSCLUSTER from a previous version.

4.1. Steps from Installing EXPRESSCLUSTER to creating a cluster¶

The following describes the steps of installing EXPRESSCLUSTER, registering the license, creating a cluster and verifying the installation.

Before proceeding to the steps, make sure to read "2. Determining a system configuration" and "3. Configuring a cluster system" and check system requirements and the configuration of a cluster.

- Set up the EXPRESSCLUSTER ServerInstall the EXPRESSCLUSTER Server, which is the core EXPRESSCLUSTER module, to each server that constitutes a cluster.(See "4. Installing EXPRESSCLUSTER.") Reboot the server

- Register the licenseRegister the license by running the clplcnsc command.(See "5. Registering the license.") Reboot the server

- Create the cluster configuration data using the Cluster WebUICreate the cluster configuration data using the Cluster WebUI.

- Create a clusterCreate a cluster using the configuration data created with the Cluster WebUI.

- Verify the cluster status using the Cluster WebUIVerify the status of a cluster that you have created using the Cluster WebUI(See "7. Verifying a cluster system.")

See also

Refer to the "Reference Guide" as you need when operating EXPRESSCLUSTER by following the procedures introduced in this guide. See the "Getting Started Guide" for installation requirements.

4.2. Setting up the EXPRESSCLUSTER Server¶

The EXPRESSCLUSTER Server, which is the core component of EXPRESSCLUSTER, consists of the following system services. It is set up by installing the EXPRESSCLUSTER Server RPM/deb package.

System Service Name |

Description |

|---|---|

clusterpro |

EXPRESSCLUSTER daemon:

A service of EXPRESSCLUSTER itself.

|

clusterpro_evt |

EXPRESSCLUSTER event:

A service to control syslog and logs being output from EXPRESSCLUSTER.

|

clusterpro_trn |

EXPRESSCLUSTER data transfer:

A service to control license synchronization and configuration data transfer in a cluster.

|

clusterpro_ib |

EXPRESSCLUSTER Information Base:

A service to manage EXPRESSCLUSTER information.

|

clusterpro_api |

EXPRESSCLUSTER API:

A service to control the EXPRESSCLUSTER RESTful API.

|

clusterpro_md |

EXPRESSCLUSTER mirror agent

A service to control mirror disk resource, hybrid disk resource and mirror driver of EXPRESSCLUSTER.

|

clusterpro_alertsync |

EXPRESSCLUSTER alert synchronization:

A service to synchronize alerts among servers in the cluster.

|

clusterpro_webmgr |

EXPRESSCLUSTER WebManager:

A WebManager server service.

|

4.2.1. Installing the EXPRESSCLUSTER RPM¶

Install the EXPRESSCLUSTER Server RPM on all servers that constitute the cluster by following the procedures below.

Note

Log in as root user when installing the EXPRESSCLUSTER Server RPM.

Mount the installation CD-ROM.

- Run the rpm command to install the package file.The installation RPM varies depending on the products.Navigate to the folder, /Linux/4.3/en/server, in the CD-ROM and run the following:

rpm -i expresscls-<version>.<architecture>.rpm

There are x86_64, IBM POWER and IBM POWER LE for architecture. Select one of them according to the environment where the server RPM is installed. Verify the architecture by running the arch command.The installation starts.Note

EXPRESSCLUSTER will be installed in the following directory. You will not be able to uninstall the EXPRESSCLUSTER if you change this directory.Installation Directory: /opt/nec/clusterpro When the installation is completed, unmount the installation CD-ROM.

- Remove the installation CD-ROM.Proceed to a license registration procedure.

See also

4.2.2. Installing the EXPRESSCLUSTER deb package¶

Install the EXPRESSCLUSTER Server deb package on all servers that constitute the cluster by following the procedures below.

Note

Log in as the root user when installing the EXPRESSCLUSTER Server deb package.

Mount the installation CD-ROM.

- Run the dpkg command to install the package file.The installation deb package varies depending on the product.Navigate to the folder, /Linux/4.3/en/server, in the CD-ROM and run the following:

dpkg -i expresscls-<version>.amd64.deb

The installation starts.Note

EXPRESSCLUSTER will be installed in the following directory. Do not change this directory. Do not replace this directory with a symbolic link.Installation Directory: /opt/nec/clusterpro When the installation is completed, unmount the installation CD-ROM.

- Remove the installation CD-ROM.Proceed to a license registration procedure.

See also

4.2.3. Setting up the SNMP linkage function¶

Note

If you are using only the SNMP trap transmission function, you do not need to perform this procedure.

Note

To set up the SNMP linkage function, you must log in as the root user.

The description related to Net-SNMP in the installation procedure may vary depending on the distribution.

Install Net-SNMP.

- Check the snmpd version.Run the following command:

snmpd -v

Stop the snmpd daemon.

Note

The daemon can usually be stopped by the following command:

For an init.d environment:

/etc/init.d/snmpd stop

For a systemd environment:

systemctl stop snmpd

- Register the SNMP linkage function of EXPRESSCLUSTER in the configuration file for the snmpd daemon.Open the configuration file with a text editor.Add the following description to the end of the file according to the snmpd version.

If the snmpd version is earlier than 5.7:

dlmod clusterManagementMIB /opt/nec/clusterpro/lib/libclpmgtmib.so

If the snmpd version is 5.7 or later:

dlmod clusterManagementMIB /opt/nec/clusterpro/lib/libclpmgtmib2.so

Note

The configuration file for the Net-SNMP snmpd daemon is usually located in the following directory:

/etc/snmp/snmpd.conf

- Add the OID of EXPRESSCLUSTER in the MIB view (view definition by snmpd.conf) permitted by the snmpd daemon.The OID of EXPRESSCLUSTER is .1.3.6.1.4.1.119.2.3.207.

- Create symbolic links to libraries needed by the SNMP linkage function.The following three symbolic links are needed.

libnetsnmp.so libnetsnmpagent.so libnetsnmphelpers.so

Follow the procedure below to create the symbolic links.5-1. Confirm the presence of the symbolic links.Change to following directory.If those symbolic links exist in the following directory, proceed to step 6./usr/lib64

5-2. Create symbolic linksRun the following commands.ln -s libnetsnmp.so.X libnetsnmp.so ln -s libnetsnmpagent.so.X libnetsnmpagent.so ln -s libnetsnmphelpers.so.X libnetsnmphelpers.so

Substitute a numeric value for X according to the environment. Start the snmpd daemon.

Note

The daemon can usually be started by the following command:

For an init.d environment:

/etc/init.d/snmpd start

For a systemd environment:

systemctl start snmpd

See also

You must cancel the settings of the SNMP function when uninstalling the EXPRESSCLUSTER Server. For how to cancel the settings of the SNMP linkage function, see "Canceling the SNMP linkage function settings."

Note

The settings required for SNMP communication are to be made on the SNMP agent.

5. Registering the license¶

5.1. Registering the CPU license¶

It is required to register the CPU license to run the cluster system you create.

See also

The names of the products to which the CPU license applies are listed below.

Licensed Product Name |

Product ID |

|---|---|

EXPRESSCLUSTER X 4.3 for Linux |

BASE43 |

EXPRESSCLUSTER X SingleServerSafe 4.3 for Linux |

XSSS43 |

EXPRESSCLUSTER X SingleServerSafe for Linux Upgrade |

UPGR43 |

Among servers that constitute the cluster, use the master server to register the CPU license. There are two ways of license registration; using the information on the license sheet and specifying the license file. These two ways are described for both the product and trial versions.

Product version

Specify the license file as the parameter of the license management command. Refer to "5.1.2. Registering the license by specifying the license file (for both product version and trial version)".

Register the license by running the license management command and interactively entering the license information that comes with the licensed product. Refer to "5.1.3. Registering the license interactively from the command line (Product version)".

Trial version

Specify the license file as the parameter of the license management command. Refer to "5.1.2. Registering the license by specifying the license file (for both product version and trial version)".

5.1.1. Notes on the CPU license¶

Notes on using the CPU license are as follows:

After registration of the CPU license on the master server, Cluster WebUI on the master server must be used in order to edit and reflect the cluster configuration data as described in "6. Creating the cluster configuration data".

5.1.2. Registering the license by specifying the license file (for both product version and trial version)¶

The following describes how to register the license by specifying the license file when you have a license for the product version or trial version.

Check the following before executing these steps.

Allow logon as root user to the server that will be set as a master server among servers that configures a cluster system.

Store the license file in the server that will be set as a master server among servers that constitute the cluster system.

Log on to the master server as root user and run the following command.

# clplcnsc -i <filepath>

Specify the path to the license file for filepath specified by the -i option.When the command is successfully executed, the message "License registration succeeded." is displayed in the console. When a message other than this is displayed, see "EXPRESSCLUSTER command reference" in the "Reference Guide".Run the following command to verify the licenses registered.

# clplcnsc -l -a

When an optional product is used, refer to "5.3. Registering the node license" in this chapter.

- When an optional product is not used, run the OS shutdown command to reboot all servers. By doing this, the license registration becomes effective and you can start using the cluster.After rebooting all servers, proceed to "6. Creating the cluster configuration data" and follow the steps.

Note

You can ignore that clusterpro_md fails at the time the operating system is started up. It is because the cluster is yet to be created.

5.1.3. Registering the license interactively from the command line (Product version)¶

The following describes how you register a license for the product version interactively from the command line.

Before you register the license, make sure to:

Have the official license sheet that comes with the product. The license sheet is sent to you when you purchase the product. You will enter the values on the license sheet.

Allow logon as root user to the server that will be set as a mater server among servers that constitute the cluster system.

See also

The clplcnsc command is used in the following procedures. For more information on how to use the clplcnsc command, see "EXPRESSCLUSTER command reference" in the "Reference Guide".

- Have the license sheet.The instruction here is given using the values in the following license sheet as an example. When actually entering the values, modify them according to the information on your license sheet.

Product name: EXPRESSCLUSTER X 4.3 for Linux License information: Type Product Version License Key A1234567-B1234567-C1234567-D1234567 Serial Number AAAAAAAA000000 Number Of Licensed CPUs 2

Log on to the master server as root user and run the following command.

# clplcnsc -i

The text that prompts you to enter the product division is displayed. Enter 1 to select "product version" for license version:

Selection of License Version. 1 Product version 2 Trial version e Exit Select License Version. [1, 2, or e (default:1)]...1

The text that prompts you to enter the serial number is displayed. Enter the serial number written in your license sheet. Note this is case sensitive.

Enter serial number [Ex. XXXXXXXX000000]... AAAAAAAA000000

The text that prompts you to enter the license key is displayed. Enter the license key written in your license sheet. Note this is case sensitive.

Enter license key [XXXXXXXX- XXXXXXXX- XXXXXXXX- XXXXXXXX]... A1234567-B1234567-C1234567-D1234567

When the command is successfully executed, the message "License registration succeeded." is displayed in the console. When a message other than this is displayed, see "EXPRESSCLUSTER command reference" in the "Reference Guide".

Run the following command to verify the licenses registered.

# clplcnsc -l -a

When an optional product is used, refer to "5.3. Registering the node license" in this chapter.

When an optional product is not used, run the OS shutdown command to reboot all servers. By doing this, the license registration becomes effective and you can start using the cluster. After rebooting all servers, proceed to "6. Creating the cluster configuration data" and follow the steps.

Note

You can ignore that clusterpro_md fails at the time the operating system is started up. It is because the cluster yet to be created.

5.2. Registering the VM node license¶

When the virtual server exists in the cluster system to be constructed, VM node license can be used not CPU license for the virtual server.

The names of the products to which the VM node license applies are listed below.

License Product Name |

Product ID |

|---|---|

EXPRESSCLUSTER X 4.3 for Linux VM |

BASE43 |

EXPRESSCLUSTER X SingleServerSafe 4.3 for Linux VM |

XSSS43 |

EXPRESSCLUSTER X SingleServerSafe for Linux VM Upgrade |

UPGR43 |

Registering the VM node license is done on all the virtual servers of the servers constructing the cluster. Of servers constituting the cluster, register the VM node license on all the virtual servers. There are two ways of license registration; using the information on the license sheet and specifying the license file.

Product version

Specify the license file as the parameter of the license management command. Refer to "Registering the VM node license by specifying the license file (for both product and trial versions)."

Register the license by running the license management command and interactively entering the license information that comes with the licensed product. Refer to "Registering the VM node license interactively from the command line (Product version)."

Trial version

Specify the license file as a parameter of the license management command, and register the license with the command. Refer to "Registering the VM node license by specifying the license file (for both product and trial versions)."

5.2.1. Registering the VM node license by specifying the license file (for both product and trial versions).¶

Be allowed to logon as root user to the virtual servers of servers constituting the cluster system.

Among virtual servers of which you intend to construct a cluster, log on to the server as root user and run the following command.

# clplcnsc -i filepath

Specify the path to the license file for filepath specified by the -i option.

When the command is successfully executed, the message "License registration succeeded." is displayed on the console. When a message other than this is displayed, see "EXPRESSCLUSTER command reference" in the "Reference Guide".

Run the following command to verify the licenses registered.

# clplcnsc -l -a

If there are other virtual servers in a cluster system, register the VM node license by following the same procedures.

When using option products, see "5.3. Registering the node license".

- When not using option products, run the OS shutdown command to reboot all the servers. By doing this, the license registration becomes effective and you can start using the cluster.After rebooting all servers, proceed to "6. Creating the cluster configuration data" and follow the steps.

Note

You can ignore that clusterpro_md fails at the time the operating system is starting up. It is because the cluster is yet to be created.

5.2.2. Registering the VM node license interactively from the command line (Product version)¶

Have the official license sheet that comes with the product. The license sheet is sent to you when you purchase the product. You will enter the values on the license sheet.

Be allowed to logon as root user to the virtual servers of servers constituting the cluster system.

See also

The clplcnsc command is used in the following procedures. For more information on how to use the clplcnsc command, see "EXPRESSCLUSTER command reference" in the "Reference Guide".

- Have the license sheet.The instruction here is given using the values in the following license sheet as an example. When actually entering the values, modify them according to the information on your license sheet.

Product name: EXPRESSCLUSTER X 4.3 for Linux VM License information: Type Product Version License Key A1234567- B1234567- C1234567- D1234567 Serial Number AAAAAAAA000000 Number Of Licensed Server 1

Among virtual servers of which you intend to construct a cluster, log on to the server as root user and run the following command.

# clplcnsc -i

The text that prompts you to enter the license version is displayed. Enter 1 because it is a product version:

Selection of License Version. 1 Product version 2 Trial version e Exit Select License Version. [1, 2, or e (default:1)]...1

The text that prompts you to enter the serial number is displayed. Enter the serial number written in your license sheet. Note this is case sensitive.

Enter serial number [Ex. XXXXXXXX000000]... AAAAAAAA000000

The text that prompts you to enter the license key is displayed. Enter the license key written in your license sheet. Note this is case sensitive.

Enter serial number [Ex. XXXXXXXX000000]... AAAAAAAA000000

A1234567-B1234567-C1234567-D1234567

When the command is successfully executed, the message "License registration succeeded." is displayed on the console. When a message other than this is displayed, see "EXPRESSCLUSTER command reference" in the "Reference Guide".

Run the following command to verify the licenses registered.

# clplcnsc -l -a

If there is any other virtual server in the cluster, register the VM license by repeating the same steps.

When using option products, see "5.3. Registering the node license".

- When not using option products, run the OS shutdown command to reboot all the servers.After rebooting all servers, proceed to "6. Creating the cluster configuration data" and follow the steps.

Note

You can ignore that clusterpro_md fails at the time the operating system is starting up. It is because the cluster is yet to be created.

5.3. Registering the node license¶

It is required to register the node license for the X 4.3 Replicator, X 4.3 Replicator DR, X 4.3 Agent products, and X 4.3 Alert Service (hereafter referred to as "optional product") to operate the cluster system where those products are constituted.

The names of the optional products to which the node license applies are listed below.

License Product Name |

Product ID |

|---|---|

EXPRESSCLUSTER X Replicator 4.3 for Linux |

REPL43 |

EXPRESSCLUSTER X Database Agent 4.3 for Linux |

DBAG43 |

EXPRESSCLUSTER X Internet Server Agent 4.3 for Linux |

ISAG43 |

EXPRESSCLUSTER X File Server Agent 4.3 for Linux |

FSAG43 |

EXPRESSCLUSTER X Application Server Agent 4.3 for Linux |

ASAG43 |

EXPRESSCLUSTER X Java Resource Agent 4.3 for Linux |

JRAG43 |

EXPRESSCLUSTER X System Resource Agent 4.3 for Linux |

SRAG43 |

EXPRESSCLUSTER X Alert Service 4.3 for Linux |

ALRT43 |

EXPRESSCLUSTER X Replicator DR 4.3 for Linux |

RPDR43 |

EXPRESSCLUSTER X Replicator DR 4.3 for Linux Upgrade license |

RPUP43 |

Among servers constituting the cluster, register the node license on the server that uses an optional product. There are two ways of license registration; using the information on the license sheet and specifying the license file. These two ways are described for both the product and trial versions.

Product version

Specify the license file as a parameter of the license management command, and register the license with the command. Refer to "5.1.2. Registering the license by specifying the license file (for both product version and trial version)".

Register the license by running the license management command and interactively entering the license information that comes with the licensed product. Refer to "Registering the node license interactively from the command line (Product version)".

Trial version

Specify the license file as the parameter of the license management command. Refer to "Registering the node license by specifying the license file (for both product version and trial version)"

5.3.1. Registering the node license by specifying the license file (for both product version and trial version)¶

Allow logon as root user to the server for which you plan to use the option product among servers constituting the cluster system.

Among servers of which you intend to construct a cluster and use the optional product, log on to the server you plan to use as a master server as root user and run the following command.

# clplcnsc -i filepath

Specify the path to the license file for filepath specified by the -i option.

Note

If the licenses for optional products have not been installed, the resources and monitor resources corresponding to those licenses are not shown in the list on the Cluster WebUIIf the licenses are registered but the corresponding resources do not appear in the list, or if the licenses are registered after the Cluster WebUI is started, click the Get License Info button.When the command is successfully executed, the message "License registration succeeded." is displayed in the console. When a message other than this is displayed, see "EXPRESSCLUSTER command reference" in the "Reference Guide".

Run the following command to verify the licenses registered.

# clplcnsc -l -a

If there is other server in a cluster system that uses the optional product, register the node license by following the same procedures. Register the license for the Replicator / Replicator DR to both servers.

Run the OS shutdown command to reboot all the servers. By doing this, the license registration becomes effective and you can start using the cluster. After rebooting all servers, proceed to "6. Creating the cluster configuration data" and follow the steps.

Note

You can ignore that clusterpro_md fails at the time the operating system is started up. It is because the cluster is yet to be created.

Note

If the Replicator / Replicator DR license is registered after starting the cluster operation, once shutdown and reboot the cluster. After the reboot, the Replicator / Replicator DR is operable.

5.3.2. Registering the node license interactively from the command line (Product version)¶

Have the official license sheet that comes with the product. The license sheet is sent to you when you purchase the product. The number of license sheets required is the number of servers for which you use the optional product. You will enter the values on the license sheet.

Allow logon as root user to the server for which you plan to use the option product among servers constituting the cluster system.

See also

The clplcnsc command is used in the following procedures. For more information on how to use the clplcnsc command, see "EXPRESSCLUSTER command reference" in the "Reference Guide".

- Have the license sheet.The instruction here is given using the values in the following license sheet (Replicator) as an example. When actually entering the values, modify them according to the information on your license sheet.

Product name: EXPRESSCLUSTER X Replicator 4.3 for Linux License information: Type Product Version License Key A1234567-B1234567-C1234567-D1234567 Serial Number AAAAAAAA000000 Number of Nodes 1

Among servers that constitute the cluster, log on as root user to the server for which you are intending to use the option product as root, and then run the following command:

# clplcnsc -i

Note

If the licenses for optional products have not been installed, the resources and monitor resources corresponding to those licenses are not shown in the list on the Cluster WebUI.

If the licenses are registered but the corresponding resources do not appear in the list, or if the licenses are registered after the Cluster WebUI is started, click the Get License Info button.

The text that prompts you to enter the license version is displayed. Enter 1 because it is a product version:

Selection of License Version. 1 Product Version 2 Trial Version e Exit Select License Version. [1, 2, or e (default:1)]...1

The text that prompts you to enter the serial number is displayed. Enter the serial number written in your license sheet. Note this is case sensitive.

Enter serial number [Ex. XXXXXXXX000000]... AAAAAAAA000000

The text that prompts you to enter the license key is displayed. Enter the license key written in your license sheet. Note this is case sensitive.

Enter license key [XXXXXXXX-XXXXXXXX-XXXXXXXX-XXXXXXXX]... A1234567-B1234567-C1234567-D1234567

When the command is successfully executed, the message "License registration succeeded." is displayed in the console. When a message other than this is displayed, see "EXPRESSCLUSTER command reference" in the "Reference Guide".

Run the following command to verify the licenses registered.

# clplcnsc -l -a

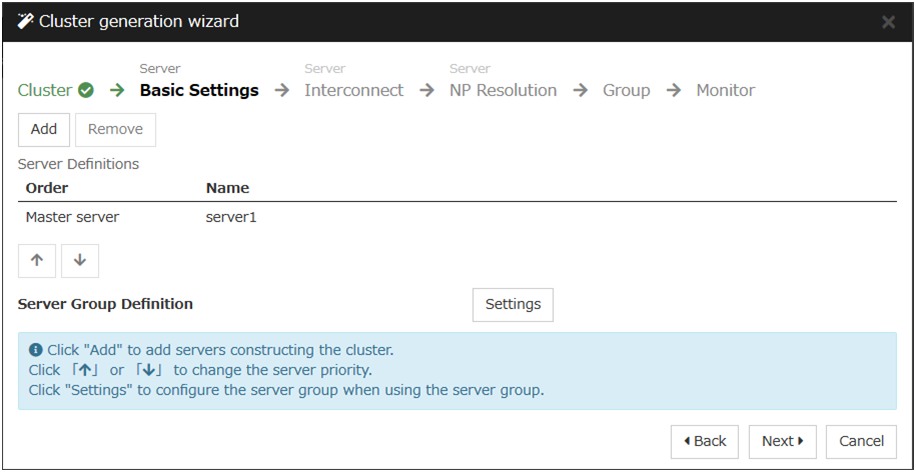

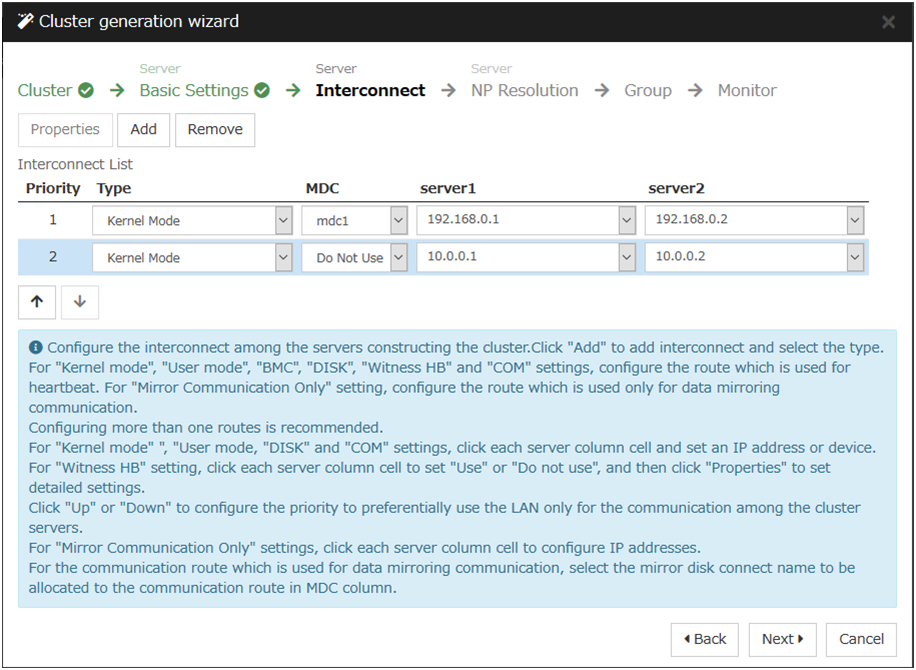

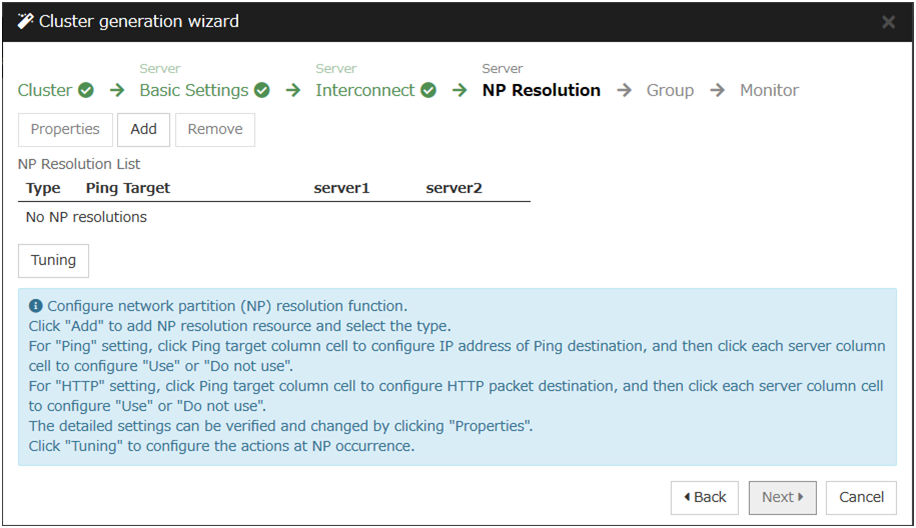

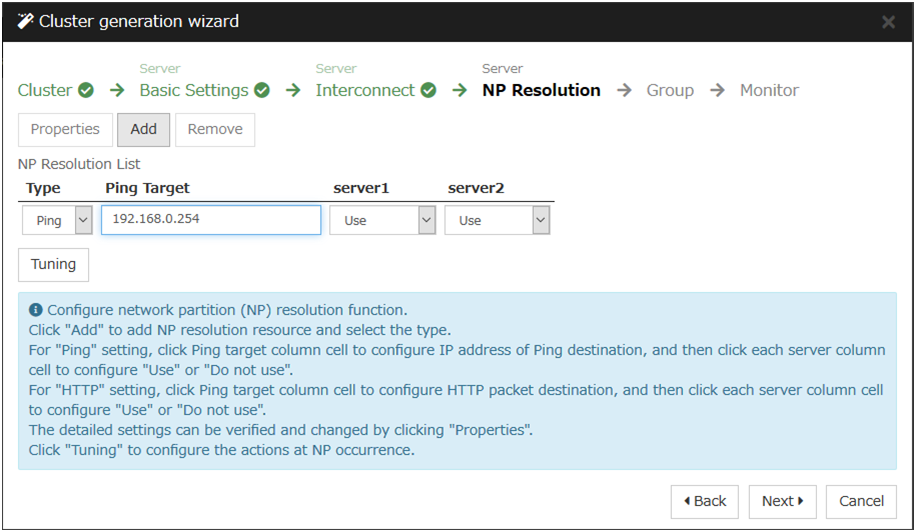

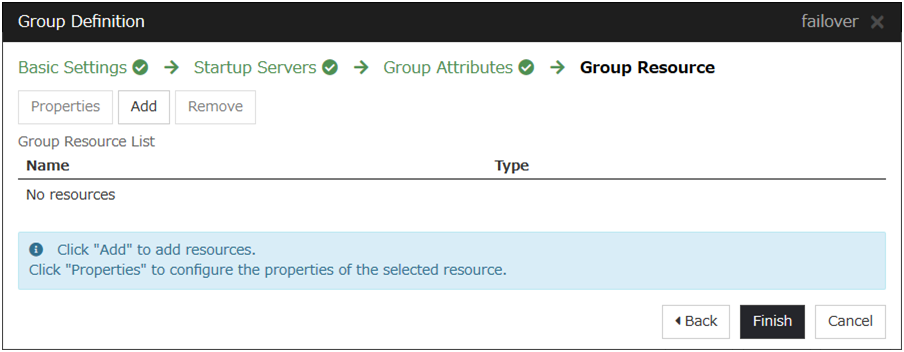

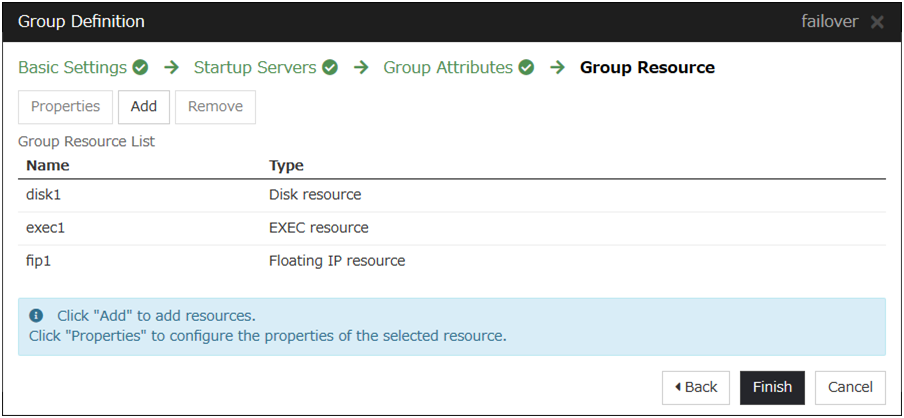

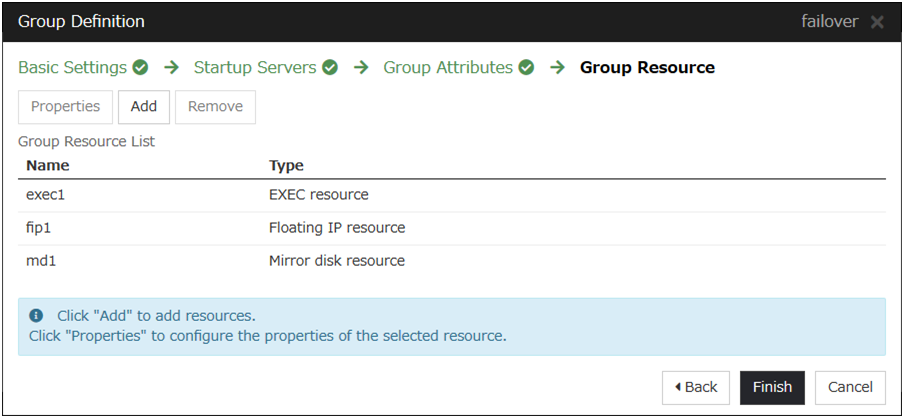

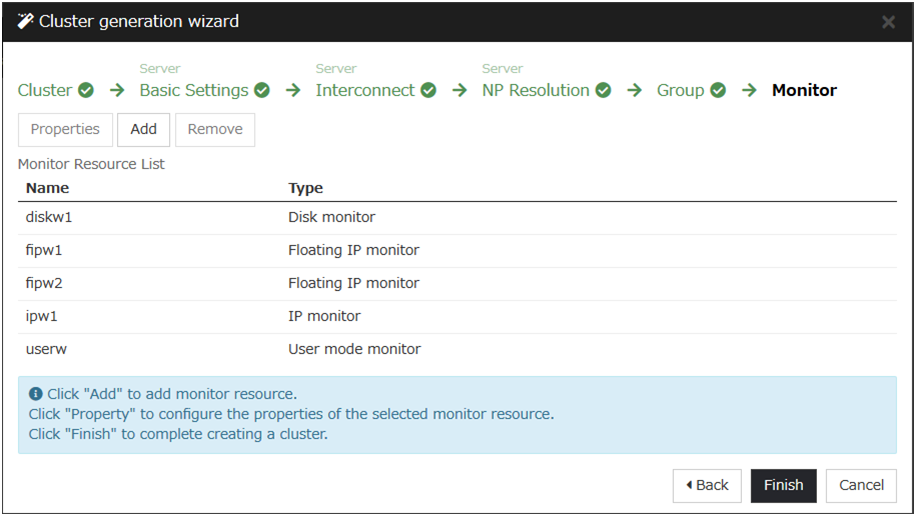

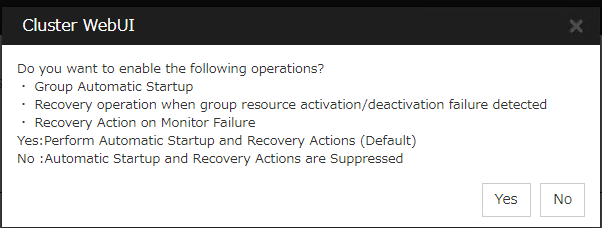

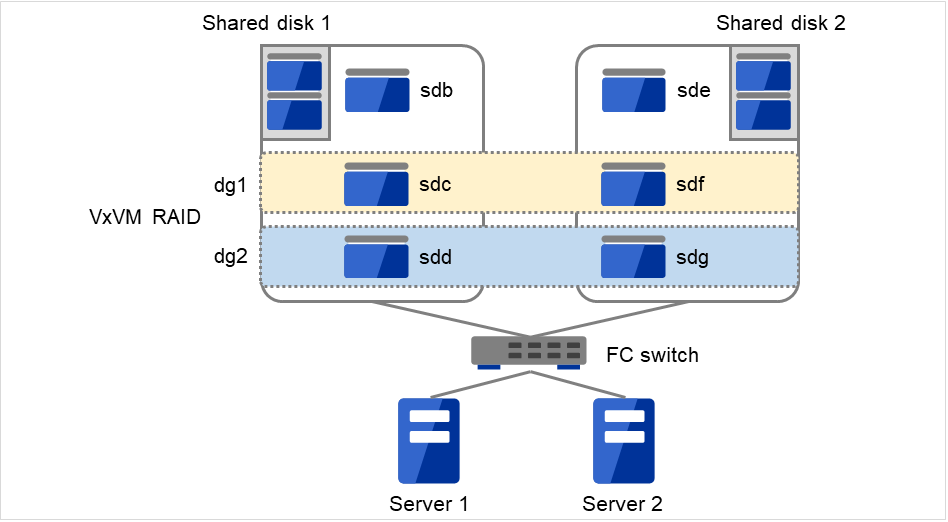

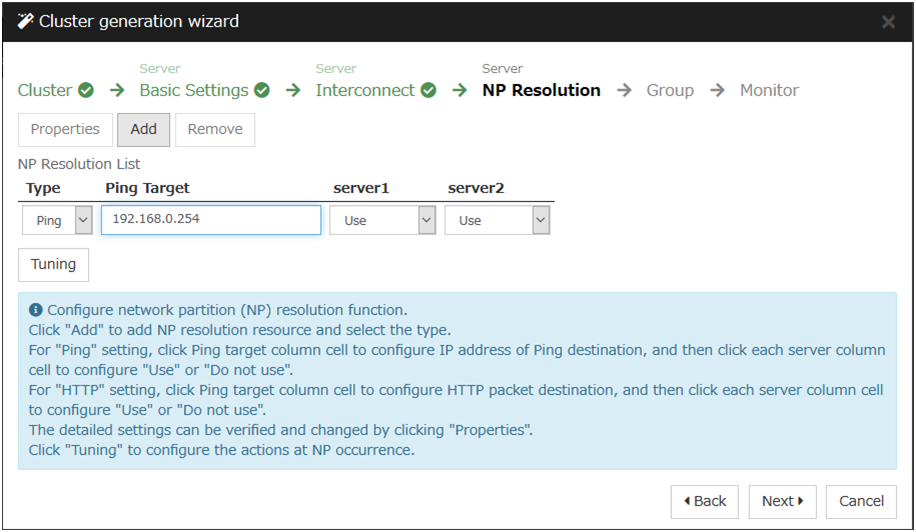

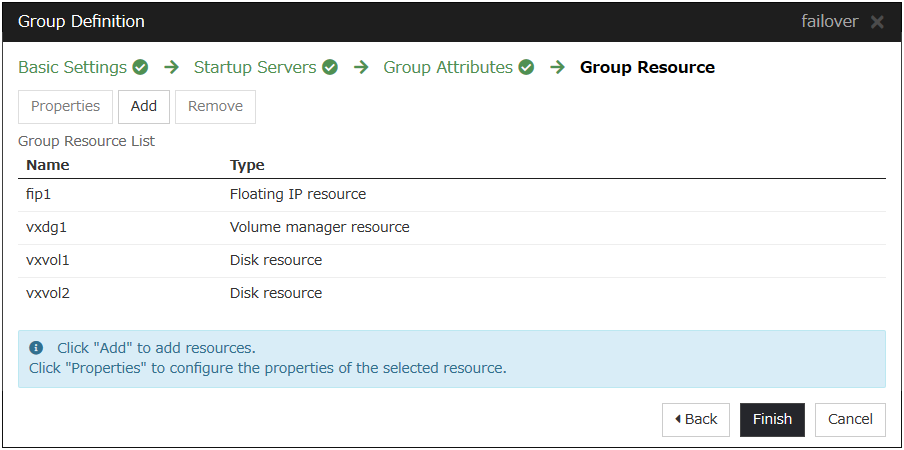

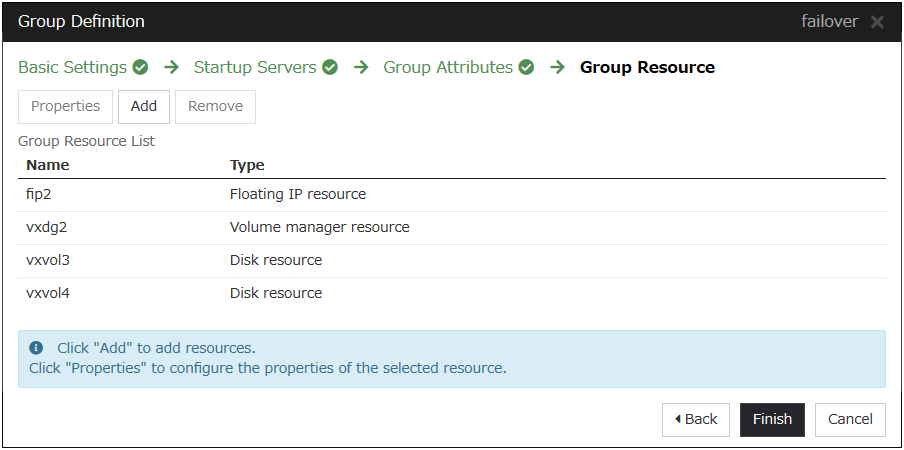

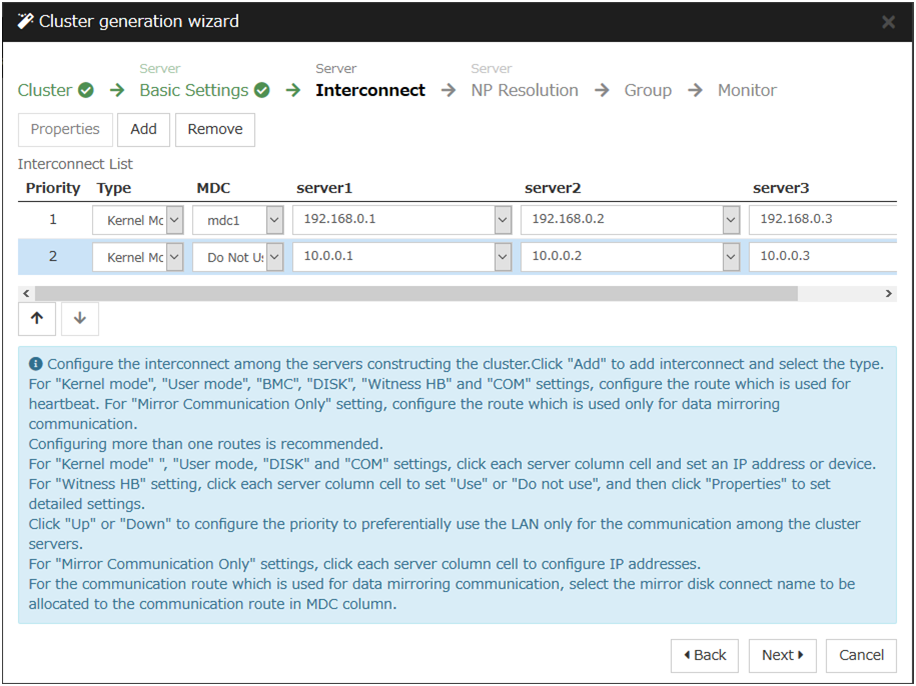

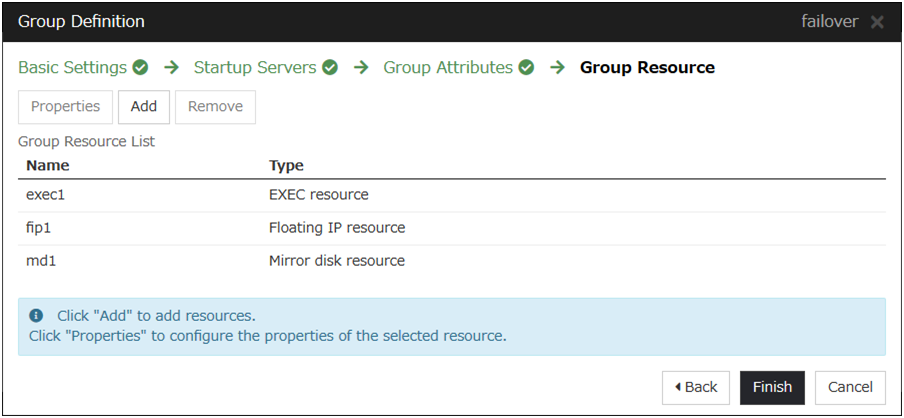

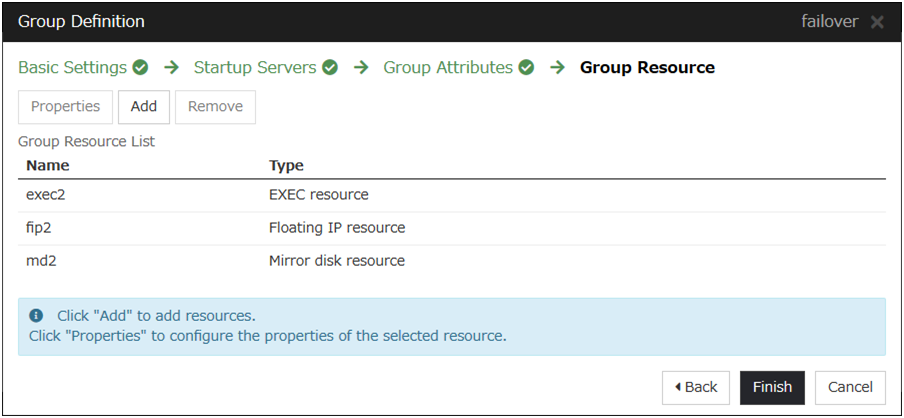

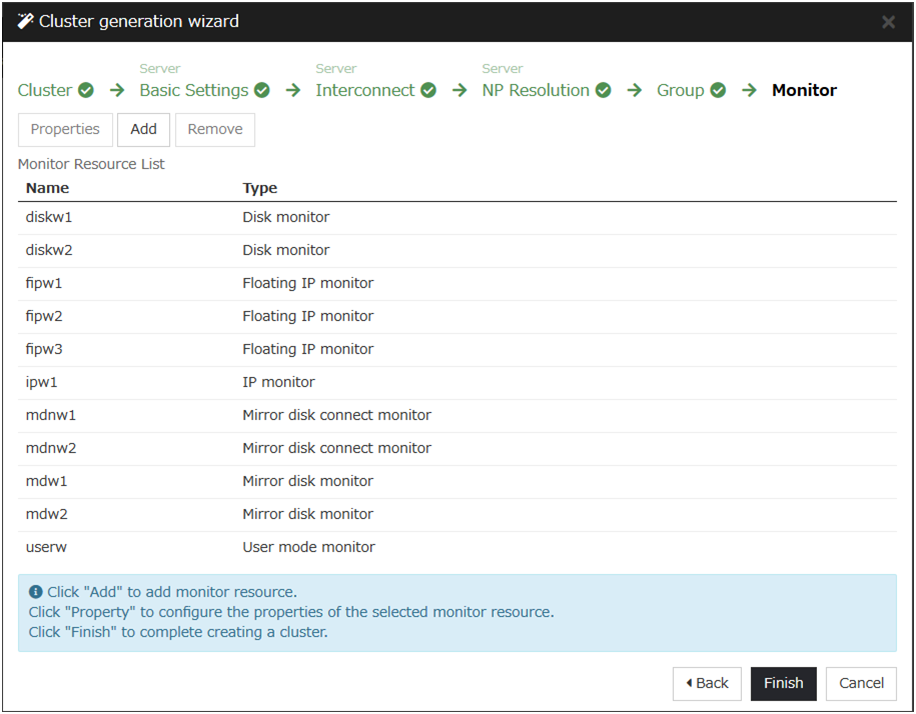

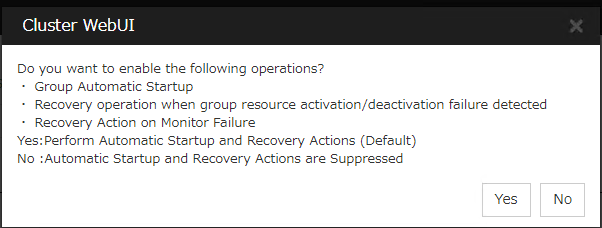

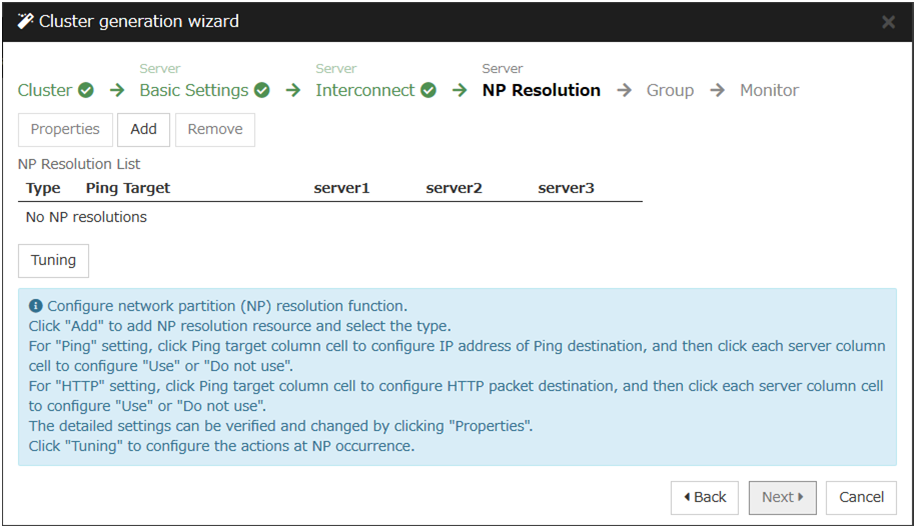

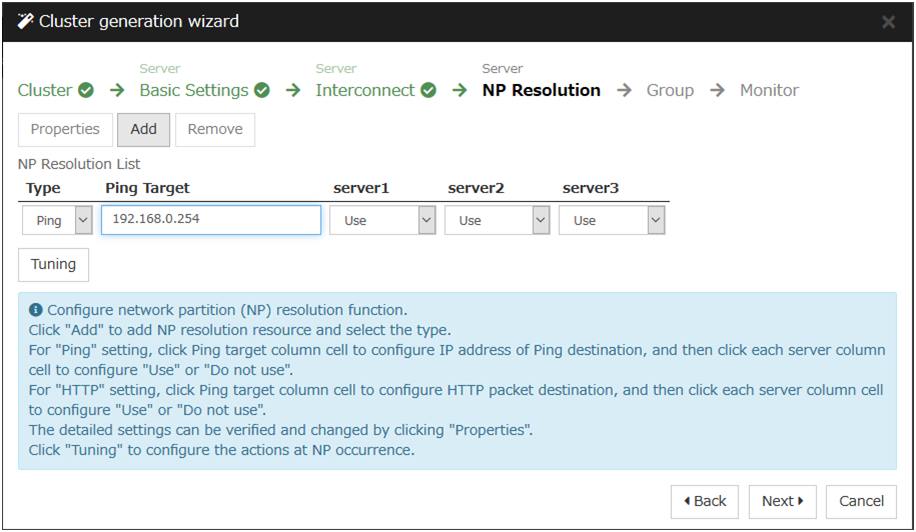

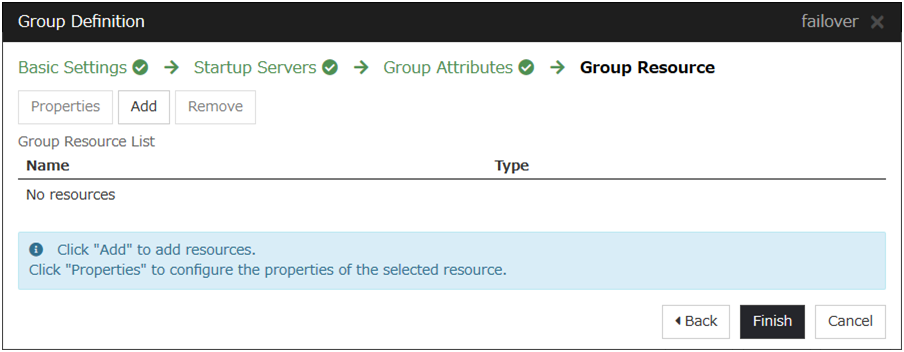

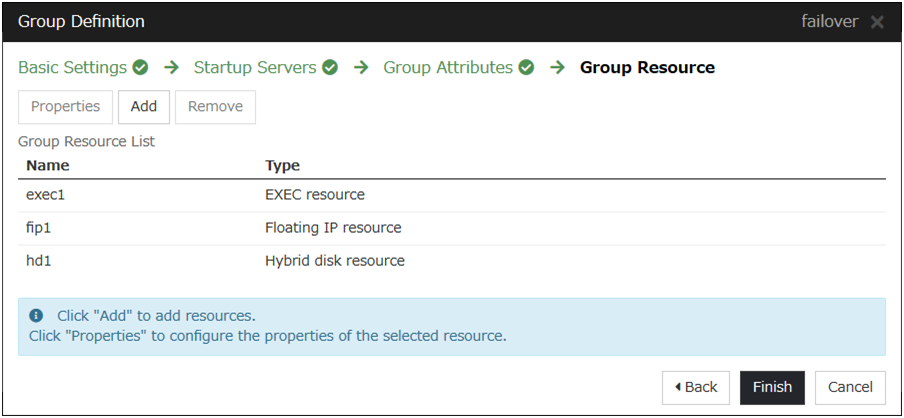

- If there is any other server in the cluster that uses an optional product, register the node license by repeating the same steps.Register the license for the Replicator / Replicator DR to both servers.